OCI vs AWS vs Azure - The Best Azure Alternative (2026)

Compare Oracle Cloud, AWS, and Azure on pricing, compute, storage, and free tier. Includes real cost examples and free tier comparison. Updated March 2026.

This analysis reveals where OCI delivers 40-70% savings on GPUs, storage, and networking while identifying scenarios where AWS or Azure provide better value for specific workload patterns.

Cloud costs accumulate rapidly at production scale. A single A100 GPU runs continuously for $2,000-3,000 monthly.

Scale to 10 GPUs and monthly spend reaches $20,000-30,000. This comparison uses identical GPU types, US East regions, and on-demand pricing.

TL;DR

- OCI H100 instances cost 58% less than AWS and 57% less than Azure per GPU-hour.

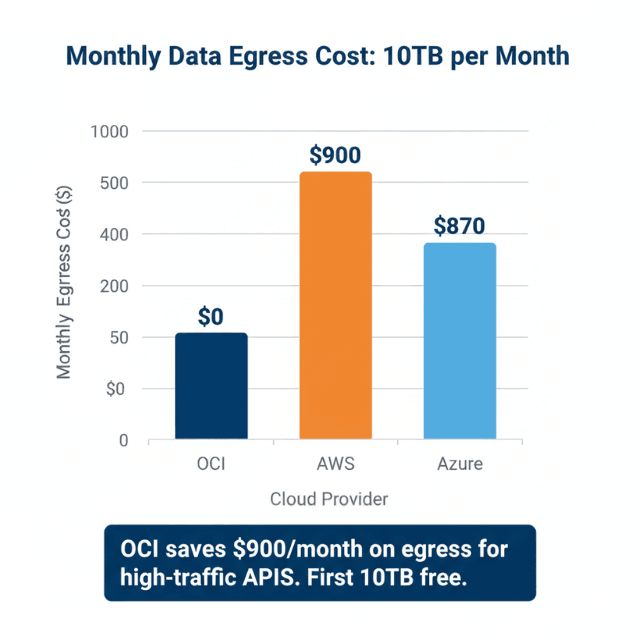

- 10TB of free monthly egress on OCI versus $0.09/GB on AWS saves about $900/month for APIs that push 10TB of traffic.

- AWS wins for A10-class GPUs at 33% lower cost than OCI.

- Large 8-GPU deployments on OCI run $18,337/month versus $29,533 on AWS.

Why Cloud Cost Matters for LLM Deployments

LLM inference costs accumulate fast at production scale. A single A100 GPU running 24/7 costs $2,000-3,000 per month, so 10 GPUs cost $20,000-30,000 per month, or $240,000-360,000 over 12 months for compute alone. A 20% cost advantage on that 10-GPU deployment saves $48,000-72,000 annually.
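The arithmetic behind these figures can be checked with a short Python sketch. The function names are illustrative, and the $2,000-3,000 monthly range and the 20% advantage are the figures quoted above, not live prices.

```python
def annual_compute_cost(gpu_count: int, monthly_cost_per_gpu: float) -> float:
    """Yearly compute spend for GPUs that run around the clock."""
    return gpu_count * monthly_cost_per_gpu * 12


def annual_savings(annual_cost: float, cost_advantage: float) -> float:
    """Savings from a fractional price advantage (0.20 means 20% cheaper)."""
    return annual_cost * cost_advantage


# Per-GPU monthly range quoted in the text, applied to a 10-GPU deployment.
for monthly in (2_000, 3_000):
    yearly = annual_compute_cost(10, monthly)
    saved = annual_savings(yearly, 0.20)
    print(f"${monthly:,}/GPU/month -> ${yearly:,.0f}/year, 20% saves ${saved:,.0f}")
```

Swapping in your own GPU count and per-GPU rate shows how quickly a small percentage difference compounds over a year.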

Cloud providers use different pricing strategies: AWS optimizes for flexibility with per-second billing, Azure bundles services with enterprise agreements, and Oracle focuses on price-performance leadership for compute-intensive workloads.

GPU Instance Pricing Comparison

A10 GPU Instances (24GB VRAM):

| Provider | Instance | $/hr | $/mo |
|---|---|---|---|
| OCI | VM.GPU.A10.1 | $1.50 | $1,095 |
| AWS | g5.xlarge | $1.006 | $734 |

Winner: AWS — 33% cheaper than OCI for A10-class GPUs, though AWS bundles less CPU/RAM.

A100 GPU Instances (40GB VRAM):

| Provider | Instance | $/hr per GPU | $/mo | Note |
|---|---|---|---|---|
| OCI | VM.GPU.A100.1 | $2.95 | $2,154 | Single GPU |
| AWS | p4d.24xlarge | $4.10 | $23,922 (8 GPUs) | 8x A100 minimum; $32.77/hr for the full instance |
| Azure | NC24ads_A100_v4 | $3.673 | $2,681 | 80GB VRAM |

Winner: OCI — 20% cheaper than Azure, 28% cheaper per-GPU than AWS for single A100 instances. Note: AWS requires renting 8x A100 minimum.

H100 GPU Instances (80GB VRAM):

| Provider | Instance | $/hr (per GPU) | $/hr (8-GPU node) | $/mo (8-GPU node) |
|---|---|---|---|---|
| OCI | BM.GPU.H100.8 | $5.12 | $40.96 | $29,901 |
| AWS | p5.48xlarge | $12.29 | $98.32 | $71,774 |
| Azure | ND96isr_H100_v5 | $11.93 | $95.40 | $69,642 |

Monthly totals assume 730 hours per month.

Winner: OCI — 58% cheaper than AWS, 57% cheaper than Azure for H100.
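The H100 comparison reduces to two small formulas: the monthly cost of a node and the percentage by which one rate undercuts another. The sketch below uses the per-GPU rates from the table above. The 730-hour month is an assumption, chosen because it reproduces the $29,901 OCI figure, and the helper names are illustrative.

```python
HOURS_PER_MONTH = 730  # assumption: 8,760 hours per year divided by 12

# Per-GPU on-demand rates from the H100 table above (USD per hour).
H100_RATE_PER_GPU = {"OCI": 5.12, "AWS": 12.29, "Azure": 11.93}


def monthly_node_cost(rate_per_gpu: float, gpus: int = 8) -> float:
    """Monthly cost of one node with `gpus` GPUs running 24/7."""
    return rate_per_gpu * gpus * HOURS_PER_MONTH


def percent_cheaper(cheaper: float, pricier: float) -> float:
    """How much lower `cheaper` is than `pricier`, as a percentage."""
    return (pricier - cheaper) / pricier * 100


oci_rate = H100_RATE_PER_GPU["OCI"]
for provider, rate in H100_RATE_PER_GPU.items():
    line = f"{provider}: ${monthly_node_cost(rate):,.0f}/month per 8-GPU node"
    if provider != "OCI":
        line += f" (OCI is {percent_cheaper(oci_rate, rate):.0f}% cheaper)"
    print(line)
```

Note that the percentage is taken relative to the more expensive provider, which is the convention the "cheaper than" claims in this comparison follow.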

See Those H100 Savings? Let's Make Them Real.

You just saw that OCI delivers **58% lower H100 costs** than AWS. At the on-demand rates above, that is roughly $500,000 annually per 8-GPU node. But migrating workloads requires expertise in:

- GPU cluster provisioning – Bare metal H100s with InfiniBand networking

- Model optimization – Quantization and vLLM tuning for OCI's architecture

- Cost governance – Reserved capacity + spot blending for maximum savings


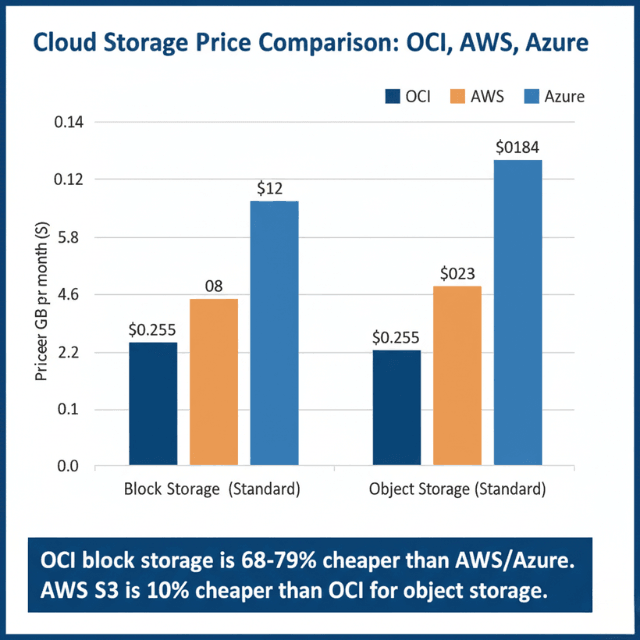

Storage Cost Comparison

Block Storage: OCI Block Volume Standard: $0.0255/GB ($25.50/TB). AWS EBS gp3: $0.08/GB ($80/TB). Azure Premium SSD: $0.12/GB.

Winner: OCI — 68% cheaper than AWS gp3, 79% cheaper than Azure Premium SSD.

Object Storage: OCI Standard: $0.0255/GB. AWS S3: $0.023/GB. Azure Blob Hot: $0.0184/GB.

Winner: Azure Blob Hot at the listed prices, 20% cheaper than AWS S3 and 28% cheaper than OCI. Between the other two, AWS S3 is 10% cheaper than OCI.

Network and Data Transfer Costs

Data Egress: OCI offers the first 10TB/month free, then $0.0085/GB. AWS provides only 100GB free, then charges $0.09/GB for the next 10TB. Azure is close to AWS at $0.087/GB.

Example: 100M tokens/day at roughly 1 KB of response payload per token (an assumed figure that includes streaming and JSON overhead) is about 100GB/day, or 3TB/month of egress. OCI costs $0 (under the free tier). AWS costs about $265. Azure costs about $257.

Winner: OCI — 10TB free egress eliminates data transfer costs that reach $900/month on competing clouds.
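A minimal sketch of the egress calculation follows, assuming a single flat rate after each provider's free allowance. That simplification only holds within the first paid tier. The rates and allowances are the ones quoted above, the 100GB allowance for Azure and the decimal terabyte are assumptions, and `egress_cost` is an illustrative helper, not a provider API.

```python
GB_PER_TB = 1_000  # assumption: decimal terabytes


def egress_cost(gb_per_month: float, free_gb: float, rate_per_gb: float) -> float:
    """Monthly egress bill: traffic above the free allowance times a flat rate."""
    billable_gb = max(0.0, gb_per_month - free_gb)
    return billable_gb * rate_per_gb


# (free allowance in GB, USD per GB after the allowance), from the text above.
EGRESS_PLANS = {
    "OCI": (10 * GB_PER_TB, 0.0085),
    "AWS": (100, 0.09),
    "Azure": (100, 0.087),  # assumption: same 100GB allowance as AWS
}

for tb in (3, 10):
    for provider, (free_gb, rate) in EGRESS_PLANS.items():
        cost = egress_cost(tb * GB_PER_TB, free_gb, rate)
        print(f"{tb}TB on {provider}: ${cost:,.2f}")
```

The results land within a few dollars of the figures quoted in this section. The exact values depend on whether a terabyte is counted as 1,000GB or 1,024GB and on how each provider applies its free allowance.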

OCI Price List for Compute, Storage and Network

Oracle Cloud's pricing is publicly listed with no hidden fees. Here's a consolidated reference for the most common GPU, storage, and network services.

| Category | Service | Price | Notes |
|---|---|---|---|
| Compute | VM.GPU.A10.1 (24GB) | $1.50/hr | 1x A10 GPU |
| Compute | VM.GPU.A100.1 (40GB) | $2.95/hr | 1x A100 GPU |
| Compute | BM.GPU.H100.8 (80GB) | $5.12/hr per GPU | 8x H100, bare metal |
| Compute | VM.Standard.E4.Flex | ~$0.17/hr | 4 OCPUs, 16GB RAM |
| Storage | Block Volume (Standard) | $0.0255/GB/mo | vs AWS EBS $0.08/GB |
| Storage | Object Storage (Standard) | $0.0255/GB/mo | vs AWS S3 $0.023/GB |
| Network | Egress (first 10TB/mo) | Free | AWS charges $0.09/GB |
| Network | Egress (beyond 10TB) | $0.0085/GB | 90% cheaper than AWS |
| Network | Load Balancer | $0.01/hr | vs AWS ALB $0.0225/hr |

Total Cost of Ownership Examples

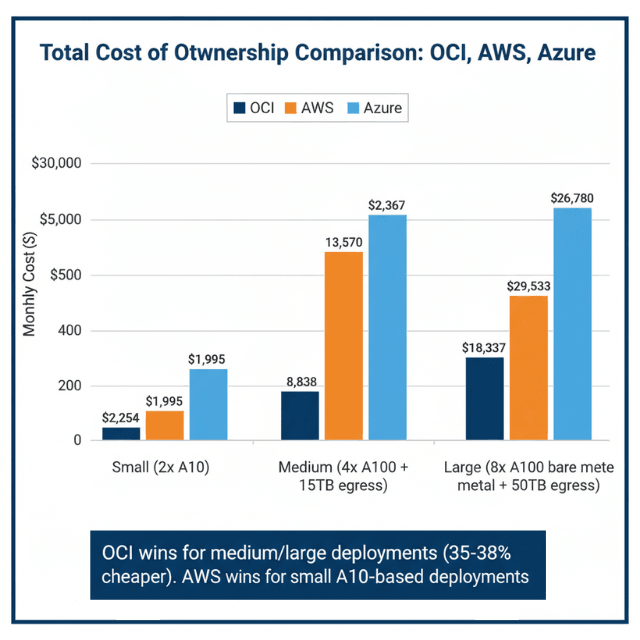

Small Production (Llama 2 7B, 2x A10): OCI $2,254/month. AWS $1,995/month. Azure $4,992/month.

Winner: AWS, about 11% cheaper than OCI (OCI costs 13% more) and 60% cheaper than Azure for A10 workloads.

Medium Production (Llama 2 70B, 4x A100, 15TB egress): OCI $8,838/month. AWS $13,570/month. Azure $12,367/month.

Winner: OCI — 35% cheaper than AWS, 29% cheaper than Azure.

Large Production (8x A100 bare metal, 50TB egress): OCI $18,337/month. AWS $29,533/month. Azure $26,780/month.

Winner: OCI — 38% cheaper than AWS, 32% cheaper than Azure.

When to Choose Each Cloud Provider

Choose Oracle Cloud when: running 4+ A100/H100 GPU deployments, high data egress requirements (>10TB/month), need maximum price-performance for compute, or using Oracle Database and OCI services. For implementation details, see our guides on running LLMs on Oracle Cloud and connecting LLMs to Oracle Cloud databases.

Choose AWS when: needing single A10 GPU instances, wanting per-second billing flexibility, using AWS ecosystem (SageMaker, Bedrock, S3), or requiring global presence across 25+ regions. Reserved instances provide 40-60% additional savings.

Choose Azure when: integrated with Microsoft enterprise agreements, using Azure AI services or Azure ML, or requiring hybrid cloud with on-premise integration.

Top Cloud Alternatives to Azure in 2026

If Azure's pricing or ecosystem doesn't fit your workload, here's where OCI and AWS specifically outperform it:

| Azure Service | Best Alternative | Cost Advantage |
|---|---|---|
| Azure VM (compute) | OCI VM.Standard.E4.Flex | 30-50% cheaper per vCPU |
| Azure H100 GPU VM | OCI BM.GPU.H100.8 | 57% cheaper per GPU-hour |
| Azure Premium SSD | OCI Block Volume Standard | 79% cheaper per GB |
| Azure Blob Storage (Hot) | None at the listed prices | Azure Blob Hot ($0.0184/GB) is already 20% cheaper than AWS S3 and 28% cheaper than OCI |
| Azure Egress | OCI (first 10TB free) | $870+/month savings on 10TB |
| Azure SQL Database | OCI Autonomous DB (free tier) | 2x 20GB free, permanent |

For AI workloads specifically, OCI's always-free A1 tier makes it the most compelling Azure alternative — see our guide on running LLMs on OCI's always-free tier for a step-by-step setup with 7B to 13B models at zero cost.

Hidden Costs to Consider

Support: OCI free basic, $500+/month production. AWS 10% of spend for Business. Azure 10%+ for Professional Direct.

Cross-region transfer: OCI $0.01-0.02/GB, AWS $0.02/GB, Azure $0.02/GB.

Load balancers: OCI $0.01/hr, AWS ALB $0.0225/hr + LCU charges, Azure $0.025/hr.

Cost Optimization Strategies

Commit to a term for 20-60% savings: AWS 40% (1-year) / 60% (3-year). Azure 35% / 55% over the same terms. OCI 20% (monthly commitment) / 40% (annual commitment). Leverage OCI's 10TB free egress to eliminate costs that reach $900/month on AWS.
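To see what a commitment does to a bill, the discount can be applied to the on-demand cost directly. The sketch below encodes the discount levels quoted above. The $10,000 monthly bill is a hypothetical input, and the table and function names are illustrative.

```python
# Discounts quoted in the text, keyed by provider and commitment term.
COMMIT_DISCOUNT = {
    "AWS": {"1-year": 0.40, "3-year": 0.60},
    "Azure": {"1-year": 0.35, "3-year": 0.55},
    "OCI": {"monthly": 0.20, "annual": 0.40},
}


def committed_monthly_cost(on_demand_monthly: float, provider: str, term: str) -> float:
    """On-demand monthly cost reduced by the quoted commitment discount."""
    return on_demand_monthly * (1 - COMMIT_DISCOUNT[provider][term])


on_demand = 10_000  # hypothetical monthly on-demand bill in USD
for provider, terms in COMMIT_DISCOUNT.items():
    for term in terms:
        cost = committed_monthly_cost(on_demand, provider, term)
        print(f"{provider} {term}: ${cost:,.0f}/month")
```

Because the commitment terms differ by provider, compare like with like: a 3-year AWS discount is applied to a higher on-demand base and locks in capacity for far longer than an OCI annual commitment.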

Monitor GPU utilization — target 70-85% and right-size when utilization stays below 60%. Use lifecycle policies to archive old models to cheaper storage tiers.
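The utilization rule can be expressed as a small check over recent samples. This is a sketch under stated assumptions: utilization is given as fractions between 0 and 1, "stays below 60%" is read as every sample falling under the floor, and the "add capacity" and "monitor" outcomes are additions for completeness rather than part of the guidance above.

```python
from statistics import mean


def utilization_advice(samples, floor=0.60, target=(0.70, 0.85)):
    """Classify GPU utilization samples against the 70-85% target band."""
    if not samples:
        raise ValueError("at least one utilization sample is required")
    # "Stays below 60%": every sample is under the floor.
    if max(samples) < floor:
        return "right-size"
    average = mean(samples)
    low, high = target
    if average > high:
        return "add capacity"  # assumption: sustained load above the band
    if average >= low:
        return "on target"
    return "monitor"  # between the floor and the target band


print(utilization_advice([0.40, 0.50, 0.55]))
print(utilization_advice([0.75, 0.80, 0.78]))
```

In practice the samples would come from whatever GPU metrics you already collect, averaged over a window long enough to smooth out short traffic spikes.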

Oracle Cloud Free Tier vs AWS Free Tier vs Azure Free Tier

| Feature | OCI Always Free | AWS Free Tier | Azure Free Tier |
|---|---|---|---|
| Compute | 4 OCPUs + 24GB RAM (A1 Flex, permanent) | 750 hrs t2.micro (12 months) | 750 hrs B1S (12 months) |
| Storage | 200GB block, 20GB object (permanent) | 30GB EBS (12 months) | 64GB managed disk (12 months) |
| Database | 2x Autonomous DB 20GB (permanent) | RDS 750hrs micro (12 months) | SQL DB 250GB (12 months) |
| Duration | Permanent (no expiry) | 12 months | 12 months |

OCI vs AWS Pricing Calculator Comparison

For a standard 3-tier web application (2 app servers, 1 DB, 1TB storage, 10TB egress/month):

| Component | OCI ($/month) | AWS ($/month) | Azure ($/month) |
|---|---|---|---|
| Compute (2x 4vCPU/16GB) | $120 | $280 | $260 |
| Storage (1TB block) | $51 | $100 | $95 |
| Egress (10TB) | $0 (free) | $920 | $870 |
| Total | $171 | $1,300 | $1,225 |

Conclusion

Oracle Cloud Infrastructure delivers 20-58% cost savings on A100 and H100 GPU instances compared to AWS and Azure, making it optimal for large production deployments with 4+ GPUs.

OCI's 10TB free monthly egress eliminates data transfer costs that reach $900 monthly on competing clouds. AWS provides best value for A10-based small deployments and offers unmatched ecosystem integration.

Azure fits Microsoft-centric organizations with enterprise agreements. Choose your platform based on specific workload characteristics and ecosystem requirements rather than instance prices alone.

For the complete Oracle Cloud LLM deployment strategy, including GPU selection, architecture patterns, and GDPR compliance, see our Oracle Cloud LLM deployment guide.

Frequently Asked Questions

Should I use spot instances or preemptible VMs for LLM inference?

Avoid spot/preemptible instances for production LLM inference. While they offer 60-90% discounts, they can be terminated with little notice, disrupting active requests.

Spot instances work for batch processing, model training, or development. For production inference, use on-demand or reserved instances. If cost is critical, consider reserved instances (40-60% savings) instead.

How do cloud costs compare to on-premise deployment?

On-premise becomes cost-effective at scale with stable workloads. An A100 GPU costs $10,000-15,000 to buy, while cloud rental costs $2,000-3,000/month, so breakeven on hardware cost alone occurs at roughly 3-8 months.

However, on-premise requires upfront capital, data center space, power, cooling, and IT staff. Choose on-premise if you operate 10+ GPUs 24/7 with existing data center infrastructure. Choose cloud for variable workloads or if you lack capital for upfront hardware investment.
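The break-even estimate is a single division, and it lengthens once on-premise running costs are included. The sketch below is a simplified model: `on_prem_monthly_cost` is a hypothetical parameter standing in for power, cooling, space, and staff, and the hardware and cloud figures are the ranges quoted above.

```python
def break_even_months(hardware_cost: float,
                      cloud_monthly_cost: float,
                      on_prem_monthly_cost: float = 0.0) -> float:
    """Months until buying hardware is cheaper than renting the same GPU."""
    monthly_saving = cloud_monthly_cost - on_prem_monthly_cost
    if monthly_saving <= 0:
        return float("inf")  # on-premise never pays back
    return hardware_cost / monthly_saving


# Best case: cheapest hardware against the most expensive cloud rate.
print(f"{break_even_months(10_000, 3_000):.1f} months")
# Worst case: priciest hardware against the cheapest cloud rate.
print(f"{break_even_months(15_000, 2_000):.1f} months")
# Same worst case with a hypothetical $500/month in power and staffing.
print(f"{break_even_months(15_000, 2_000, 500):.1f} months")
```

Even a modest monthly operating cost pushes the payback period out noticeably, which is why the decision hinges on existing data center infrastructure as much as on GPU prices.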

Can I mix cloud providers for better pricing?

Yes, multi-cloud strategies can optimize costs — deploy latency-sensitive inference on OCI, use AWS S3 for cost-effective model storage, leverage Azure for Microsoft enterprise integration.

However, inter-cloud data transfer costs $0.02-0.05/GB and adds management complexity. The operational overhead typically only justifies multi-cloud for large organizations (100+ GPUs). For most teams, optimize within a single cloud first before adding multi-cloud complexity.

