Slash ML Data Costs by 70% with Smart Storage

Reduce ML data preparation and storage costs up to 70% using columnar formats, spot instances, intelligent tiering, and lifecycle policies for cost-effective machine learning operations.

Introduction

Data preparation and storage consume 60-80% of machine learning project budgets, far exceeding the cost of model training and deployment. For ML teams, this represents both a challenge and an opportunity. Strategic optimization of data storage architecture and processing workflows can reduce these costs by 70-90% without sacrificing data quality or accessibility.

The primary cost drivers include compute resources for data processing, storage for datasets and intermediate results, data transfer fees across services and regions, and monitoring and management overhead. Modern cloud services offer powerful optimization tools including spot instances for 90% compute savings, columnar formats like Parquet for 70% storage reduction, S3 Intelligent-Tiering for automatic cost optimization, and VPC endpoints to eliminate transfer charges. This guide explores proven strategies for managing ML data costs across the entire data lifecycle.

Understanding ML Data Cost Drivers

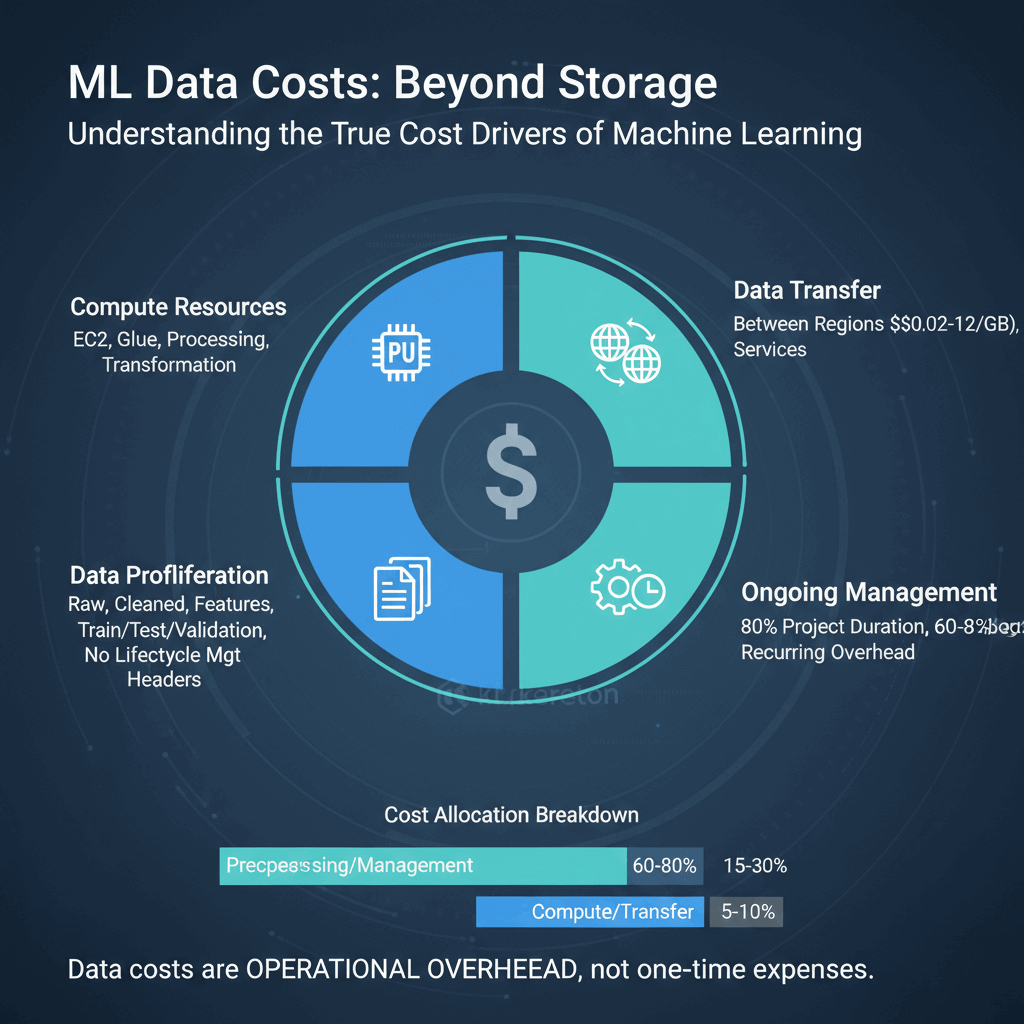

ML data costs encompass far more than simple storage fees. Compute resources for data processing generate substantial expenses through EC2 instances, AWS Glue jobs, and transformation operations. Data transfer costs accumulate when moving data between regions at $0.02-$0.12 per GB or across services without proper network configuration.

Storage costs compound through data proliferation. ML teams create multiple dataset versions including raw data, cleaned data, feature-engineered data, and separate training, validation, and test sets. Without lifecycle management, these variants accumulate indefinitely, driving storage expenses upward month after month.

Research shows data preprocessing accounts for 80% of ML project duration and 60-80% of total budgets. Organizations allocate 25-75% of initial ML solution budgets to ongoing data management. These surprising statistics reveal that data costs represent recurring operational overhead rather than one-time expenses. For a deeper dive into ML workflow optimization, see our guide on reducing cloud computing costs.

File Format Optimization

File format selection dramatically impacts both storage costs and processing efficiency. Traditional row-based formats like CSV and JSON prove inefficient for analytical workloads, while columnar formats like Apache Parquet and ORC optimize both storage and query performance.

Columnar formats reorganize data by column rather than row, enabling superior compression ratios of 5-10x compared to row-based formats. Real-world migrations from CSV to Parquet commonly achieve 70-80% storage reduction. Storing similar values together allows compression algorithms to identify patterns more effectively than when compressing mixed-type row data.

👉 Learn more cost‑saving strategies in “Master AWS Cost Optimization for Startups”

Beyond storage savings, columnar formats reduce processing costs through selective column reading. When queries need specific columns, Parquet reads only those columns rather than scanning entire records. For datasets with 50 columns where queries typically access 5-10 columns, this reduces I/O by 80-90%, cutting both processing time and compute costs.

Parquet has emerged as the standard for cloud-based ML workflows. AWS Athena, AWS Glue, Apache Spark, and most modern analytics engines optimize specifically for Parquet. Configure large files over 100 MB to reduce metadata overhead, use snappy or gzip compression based on performance requirements, and enable dictionary encoding for low-cardinality columns.

Spot Instances for Batch Processing

Spot instances represent the single most impactful cost optimization for data preparation workloads, offering up to 90% savings compared to on-demand pricing. AWS EC2 Spot Instances provide access to unused compute capacity at dramatically reduced rates, typically 70-90% cheaper than equivalent on-demand instances.

AWS can reclaim spot instances with two minutes notice when capacity is needed for on-demand customers. This interruption risk makes spot instances ideal for batch data processing that can tolerate interruptions and resume from checkpoints, while unsuitable for latency-sensitive workloads.

Implementing spot instances successfully requires fault-tolerant pipelines with regular checkpointing. Modern ETL frameworks like Apache Spark and AWS Glue support checkpoint-based execution, allowing jobs to resume from the last successful checkpoint rather than restarting entirely. Use spot fleet configurations requesting multiple instance types across availability zones to reduce interruption risk.

For data pipelines processing hundreds of gigabytes or terabytes daily, spot instances can reduce monthly compute costs from over $10,000 to under $1,500. This transformation enables sustainable ML operations within budget constraints.

S3 Storage Tier Strategies

S3 Intelligent-Tiering leverages machine learning to automatically move data between access tiers based on usage patterns, optimizing costs without manual intervention or retrieval fees. The service monitors object access and automatically transitions between five tiers: Frequent Access, Infrequent Access (40% savings after 30 days), Archive Instant Access (68% savings after 90 days), Archive Access (71% savings), and Deep Archive Access (95% savings).

Intelligent-Tiering charges $0.0025 per 1,000 objects monthly for monitoring. This proves economical for datasets with millions of large files and uncertain access patterns, but potentially expensive for billions of small objects. Objects smaller than 128 KB aren't eligible for automatic tiering.

For datasets with predictable access patterns, S3 lifecycle policies provide automated tier transitions without monitoring fees. Design rules matching data categories: transition completed experiments to infrequent access after 30 days, archive to Glacier after 90 days, and delete experimental artifacts after defined retention periods.

AWS reports customers have collectively saved $2 billion through S3 Intelligent-Tiering. Analyze your specific access patterns and object size distribution before migrating entire datasets to determine optimal tiering strategy. For further guidance on AWS cost saving tools, see AWS official cost optimization documentation.

Data Transfer Cost Reduction

VPC endpoints for S3 eliminate data transfer charges between EC2 instances and S3 within the same region. Without VPC endpoints, data flows through the public internet gateway, incurring transfer fees. With VPC endpoints, traffic remains within AWS's network backbone at no additional charge.

Processing terabytes of training data can generate hundreds of gigabytes or terabytes of data transfer daily. At $0.01-$0.02 per GB, these transfers cost hundreds or thousands monthly. VPC endpoints eliminate this expense entirely while reducing latency and improving security.

Cross-region data transfers cost significantly more than same-region operations, with $0.02 per GB for transfers between US regions and $0.08-$0.12 per GB for intercontinental transfers. Design ML workflows to minimize cross-region data movement by colocating data storage, processing, and training within a single region whenever possible.

Conclusion

Managing ML data preparation costs requires systematic attention to storage architecture, compute resource optimization, and pipeline efficiency. The strategies outlined in this guide enable 70-90% cost reduction while maintaining data quality and accessibility. Implementing spot instances for batch workloads, migrating to columnar formats like Parquet, deploying S3 Intelligent-Tiering, and using VPC endpoints tackle primary expenses directly. Cost optimization isn't a one-time project but an ongoing discipline. Establish regular cost reviews, implement robust monitoring and alerting, and cultivate cost awareness that drives architectural decisions alongside performance and functionality.

Frequently Asked Questions

How much can I realistically save by optimizing ML data preparation costs?

Most organizations achieve 60-80% cost reduction through optimization. Even partial implementation of strategies like spot instances (90% compute savings), Parquet conversion (70% storage savings), and S3 Intelligent-Tiering (40-95% tiered storage savings) typically yields over 50% total cost reduction within the first quarter.

Should I use S3 Intelligent-Tiering or manual lifecycle policies?

S3 Intelligent-Tiering works best for datasets with unpredictable access patterns, automatically optimizing without retrieval fees. Manual lifecycle policies suit datasets with predictable patterns and avoid monitoring fees. For most ML workloads, a hybrid approach works well: Intelligent-Tiering for active project data, manual policies for completed experiments with known retention requirements.

Are spot instances reliable enough for production data pipelines?

Yes, with proper architecture. Design fault-tolerant pipelines with checkpointing, use spot fleet configurations requesting multiple instance types, and implement hybrid architectures with small on-demand baselines for coordination. These practices make spot instances highly reliable for batch data preparation while delivering 85-90% cost savings.