Multi-Cloud Cost Optimization for Startups

Reduce multi-cloud costs for startups with proven strategies for AWS, Azure, and GCP. Learn workload placement, data transfer optimization, and FinOps best practices.

Introduction

Multi-cloud architectures offer resilience and best-of-breed services, but 85% of enterprises using three or more cloud providers struggle with escalating costs. Startups face unique challenges: data transfer fees between clouds, redundant services, fragmented billing, and limited visibility. Without strategic management, multi-cloud costs can exceed single-cloud deployments by 15-30%.

Effective optimization can reduce multi-cloud spending by 30-40% while maintaining operational agility. This guide provides proven strategies for workload placement, data transfer optimization, and FinOps implementation that help startups control costs across AWS, Azure, and GCP.

Understanding Multi-Cloud Cost Drivers

Data transfer costs represent the most insidious expense in multi-cloud environments. Cloud providers charge premium rates for data leaving their networks: transferring 10TB from AWS to Azure costs roughly $920 in AWS egress fees alone at standard internet rates. Cross-cloud API calls between microservices can generate thousands of dollars in monthly charges for high-volume applications.
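Egress is usually billed in volume tiers, so the per-GB rate falls as monthly volume grows. The sketch below estimates a month's bill from such a schedule. The tier sizes and rates are illustrative assumptions modeled on a typical hyperscaler internet-egress schedule (they reproduce the roughly $920 figure for 10TB); check your provider's current price list, and note that the sketch ignores any monthly free allowance.

```python
# Illustrative tiered internet-egress price table: (tier size in GB, USD per GB).
# Assumed rates modeled on a typical hyperscaler schedule; check current pricing.
EGRESS_TIERS = [
    (10 * 1024, 0.09),     # first 10 TB
    (40 * 1024, 0.085),    # next 40 TB
    (100 * 1024, 0.07),    # next 100 TB
    (float("inf"), 0.05),  # everything beyond 150 TB
]

def egress_cost(gb, tiers=EGRESS_TIERS):
    """Monthly egress cost in USD for `gb` gigabytes, filling tiers in order."""
    remaining, total = gb, 0.0
    for tier_size, usd_per_gb in tiers:
        billable = min(remaining, tier_size)
        total += billable * usd_per_gb
        remaining -= billable
        if remaining <= 0:
            break
    return round(total, 2)

print(egress_cost(10 * 1024))  # 921.6
print(egress_cost(60 * 1024))  # 5120.0
```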

Redundant resources emerge from decentralized decision-making. When different teams independently provision similar capabilities across clouds, organizations pay multiple times for equivalent functionality. A startup might maintain development environments in AWS, staging in GCP, and production in Azure—each with similar configurations but utilization rates below 30%.

Management complexity introduces direct costs through multiple monitoring tools and indirect costs from engineering time spent maintaining expertise across platforms. Each cloud provider requires separate interfaces, APIs, and operational paradigms. Tool fragmentation creates a proliferation of dashboards that reduces efficiency and increases the likelihood of missing cost anomalies.

Idle resources accumulate rapidly in multi-cloud environments. Unattached storage volumes, orphaned snapshots, unused load balancers, and forgotten test instances account for 20-30% of total spending in poorly managed deployments. Lack of visibility across clouds makes these zombie assets particularly difficult to identify.
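Once inventory from all three clouds is pulled into one normalized list, the common zombie patterns become simple rules. The sketch below assumes a hypothetical `Resource` record that you would populate from each provider's inventory APIs (not shown); the rules and age thresholds are illustrative choices, not a standard.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Optional

@dataclass
class Resource:
    provider: str                      # "aws", "azure", or "gcp"
    kind: str                          # "volume", "snapshot", "load_balancer", "instance"
    resource_id: str
    created: datetime
    attached_to: Optional[str] = None  # volumes: instance ID, or None if unattached
    source_id: Optional[str] = None    # snapshots: ID of the source volume
    backend_count: int = 0             # load balancers: number of registered targets
    tags: dict = field(default_factory=dict)

def find_zombies(resources, now, snapshot_max_age_days=90, test_max_age_days=14):
    """Return (resource, reason) pairs for likely-idle resources."""
    live_ids = {r.resource_id for r in resources}
    zombies = []
    for r in resources:
        age = now - r.created
        if r.kind == "volume" and r.attached_to is None:
            zombies.append((r, "unattached volume"))
        elif (r.kind == "snapshot" and r.source_id not in live_ids
              and age > timedelta(days=snapshot_max_age_days)):
            zombies.append((r, "old snapshot of a deleted volume"))
        elif r.kind == "load_balancer" and r.backend_count == 0:
            zombies.append((r, "load balancer with no targets"))
        elif (r.kind == "instance" and r.tags.get("environment") == "test"
              and age > timedelta(days=test_max_age_days)):
            zombies.append((r, "long-running test instance"))
    return zombies

now = datetime(2024, 6, 1, tzinfo=timezone.utc)
inventory = [
    Resource("aws", "volume", "vol-a", now - timedelta(days=5)),
    Resource("gcp", "snapshot", "snap-b", now - timedelta(days=200), source_id="disk-gone"),
    Resource("azure", "load_balancer", "lb-c", now, backend_count=3),
    Resource("aws", "instance", "i-d", now - timedelta(days=40), tags={"environment": "test"}),
]
for res, reason in find_zombies(inventory, now):
    print(res.provider, res.resource_id, reason)
```

A report like this is safer to act on than automatic deletion: flag first, notify the owning team via tags, then clean up.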

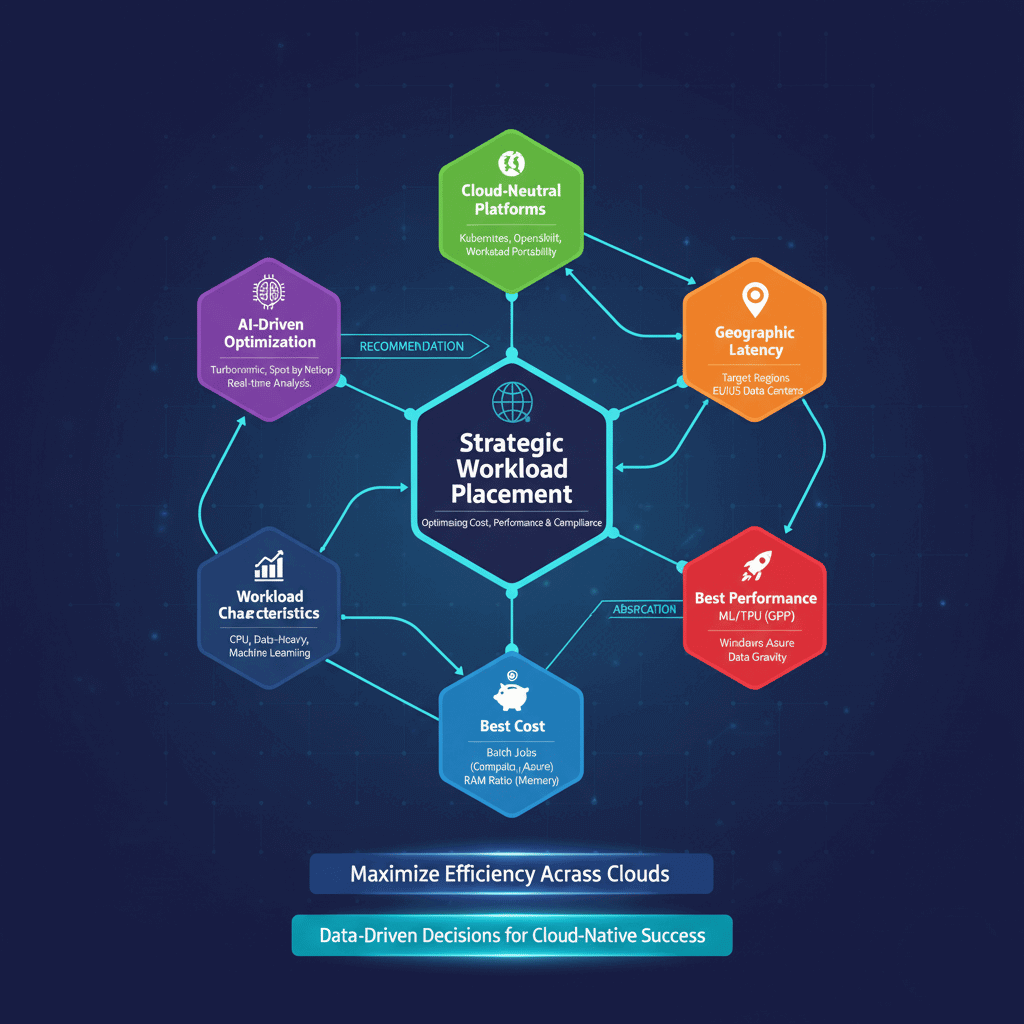

Strategic Workload Placement

Deploy each workload on the cloud provider offering the best combination of cost, performance, and compliance for that specific use case. AI-driven platforms like IBM Turbonomic and Spot by NetApp analyze real-time pricing and performance characteristics to recommend optimal placement.
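To make "best combination" concrete, the sketch below treats compliance as a hard filter and then ranks the remaining providers by a weighted score of normalized cost and latency. This is a hypothetical illustration, not how Turbonomic or Spot by NetApp work internally; the `Offer` fields and default weights are assumptions you would tune per workload.

```python
from dataclasses import dataclass

@dataclass
class Offer:
    provider: str
    monthly_cost: float    # estimated USD/month to run this workload there
    p95_latency_ms: float  # measured latency from the workload's users
    compliant: bool        # meets residency and compliance requirements

def choose_provider(offers, cost_weight=0.7, latency_weight=0.3):
    """Return the compliant offer with the lowest weighted score (lower is better)."""
    candidates = [o for o in offers if o.compliant]  # compliance is a hard constraint
    if not candidates:
        raise ValueError("no compliant provider for this workload")
    # Normalize each metric against the best candidate so the weights are comparable.
    best_cost = max(min(o.monthly_cost for o in candidates), 1e-9)
    best_latency = max(min(o.p95_latency_ms for o in candidates), 1e-9)

    def score(o):
        return (cost_weight * o.monthly_cost / best_cost
                + latency_weight * o.p95_latency_ms / best_latency)

    return min(candidates, key=score)

offers = [
    Offer("aws", 1000.0, 40.0, True),
    Offer("azure", 900.0, 45.0, True),
    Offer("gcp", 800.0, 30.0, False),  # cheapest, but fails a residency check
]
print(choose_provider(offers).provider)  # azure
```

Shifting the weights toward latency (for example `cost_weight=0.2, latency_weight=0.8`) flips this example to AWS, which is why the weights should come from each workload's requirements rather than a global default.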

Cloud-neutral Kubernetes platforms such as Red Hat OpenShift and Rancher provide the abstraction layer for workload portability. By containerizing applications and using Kubernetes for orchestration, startups can deploy the same workload across clouds with few or no code changes, provided the workload avoids provider-specific services.

Workload characteristics should drive placement decisions. CPU-intensive batch jobs favor clouds offering the best compute pricing, while memory-intensive applications prefer providers with favorable RAM-to-CPU ratios. Data-heavy workloads should run in the same cloud where data resides to minimize transfer costs. Machine learning workloads that can use TPUs may perform best on GCP, which offers this specialized hardware, while Windows-based applications may be more cost-effective on Azure.
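The "run compute where the data lives" rule is a break-even calculation: cheaper compute in another cloud only pays off if the saving exceeds the egress cost of every GB the job reads across the boundary. A minimal sketch with hypothetical figures, ignoring one-time migration effort and latency:

```python
def placement_decision(compute_cost_here, compute_cost_there,
                       gb_read_per_month, egress_per_gb):
    """Compare running compute next to its data ("here") versus in a cheaper cloud ("there").

    Moving compute means every GB it reads crosses the cloud boundary and is
    billed as egress. Returns (move, net_monthly_saving_usd).
    """
    compute_saving = compute_cost_here - compute_cost_there
    transfer_cost = gb_read_per_month * egress_per_gb
    net = round(compute_saving - transfer_cost, 2)
    return net > 0, net

# An $800/month compute saving is wiped out by reading 20,000 GB/month across clouds...
print(placement_decision(5000, 4200, 20_000, 0.09))  # (False, -1000.0)
# ...but wins if the job only reads 5,000 GB/month.
print(placement_decision(5000, 4200, 5_000, 0.09))   # (True, 350.0)
```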

Geographic considerations influence optimal placement. Latency-sensitive applications should run in clouds with strong presence in target regions. A European startup might, for example, run US operations on AWS and EU workloads on Azure if that split gives each market the closest regions or the best fit for data-residency requirements.

Optimizing Data Transfer Costs

Minimize unnecessary data movement through intelligent architecture design. Microservices that frequently communicate should be colocated in the same cloud and region to avoid cross-cloud and cross-region transfer fees.
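Finding which services to colocate starts with a traffic matrix, which you might assemble from flow logs or distributed tracing (an assumption; the collection step is not shown). The sketch below ranks service pairs whose traffic crosses a cloud or region boundary, so the chattiest pairs are moved first. Service names and volumes are hypothetical.

```python
def cross_boundary_traffic(traffic_gb, placement):
    """List service pairs whose traffic crosses a cloud or region boundary, largest first.

    traffic_gb: {(caller, callee): GB per month}
    placement:  {service: (cloud, region)}
    """
    crossing = [
        (pair, gb) for pair, gb in traffic_gb.items()
        if placement[pair[0]] != placement[pair[1]]
    ]
    return sorted(crossing, key=lambda item: item[1], reverse=True)

traffic = {("api", "orders"): 500, ("api", "search"): 120,
           ("worker", "orders"): 80, ("api", "auth"): 40}
placement = {"api": ("aws", "us-east-1"), "search": ("aws", "us-east-1"),
             "orders": ("gcp", "us-east4"), "worker": ("gcp", "us-east4"),
             "auth": ("aws", "us-west-2")}
for (src, dst), gb in cross_boundary_traffic(traffic, placement):
    print(f"{src} -> {dst}: {gb} GB/month crosses a boundary")
```

Here `api -> orders` crosses clouds and `api -> auth` crosses regions within AWS; both are billed, so both appear in the report.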

Provider-neutral CDNs like Cloudflare and Akamai provide cost-effective alternatives to native cloud CDN services while reducing egress charges. These CDNs cache content at edge locations worldwide, serving requests from nearby points of presence rather than origin servers. Because only cache misses pull data from the origin, this approach can reduce origin egress costs by 60-80% for content-heavy applications, depending on cache hit ratio and CDN pricing.
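The achievable saving depends mainly on the cache hit ratio and on what the CDN itself charges. A minimal sketch with hypothetical rates; set `cdn_per_gb` to 0 if your CDN plan bills a flat fee rather than per GB:

```python
def cdn_savings(total_gb, cache_hit_ratio, origin_egress_per_gb, cdn_per_gb=0.0):
    """Return (cost_without_cdn, cost_with_cdn, fractional_saving) for one month.

    Only cache misses are fetched from the origin and billed as origin egress;
    every GB delivered to users is billed at the CDN's own rate.
    """
    without_cdn = total_gb * origin_egress_per_gb
    origin_gb = total_gb * (1 - cache_hit_ratio)
    with_cdn = origin_gb * origin_egress_per_gb + total_gb * cdn_per_gb
    return without_cdn, with_cdn, 1 - with_cdn / without_cdn

for hit_ratio, cdn_rate in [(0.90, 0.0), (0.90, 0.02), (0.70, 0.0)]:
    base, cdn, saving = cdn_savings(10_000, hit_ratio, 0.09, cdn_rate)
    print(f"hit={hit_ratio:.0%} cdn=${cdn_rate}/GB: ${base:,.0f} -> ${cdn:,.0f} ({saving:.0%} saved)")
```

With these assumed inputs the saving ranges from about 68% to 90%, showing how a metered CDN rate can eat into an otherwise high hit ratio.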

Multi-cloud analytics platforms such as Snowflake and Google BigQuery Omni can reduce the need to duplicate data across clouds. Instead of copying datasets between providers for analysis, these platforms offer query capabilities that can access data where it resides.

Private interconnects establish dedicated network links between cloud providers. Linking AWS Direct Connect and Azure ExpressRoute through a shared colocation facility or network partner creates dedicated bandwidth between clouds, with lower per-GB transfer rates and more predictable costs than internet egress. For workloads requiring substantial inter-cloud communication, these connections can pay back their setup costs within 2-3 months.
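Whether an interconnect pays off is a break-even question: fixed monthly port and partner fees against the per-GB difference between private-link and internet transfer rates. The sketch below computes the break-even volume and the payback period for one-time setup costs. All prices are hypothetical placeholders; substitute your providers' and interconnect partner's actual quotes.

```python
import math

def breakeven_gb(fixed_monthly_usd, private_per_gb, internet_per_gb):
    """Monthly GB above which a private interconnect beats internet egress."""
    per_gb_saving = internet_per_gb - private_per_gb
    return math.inf if per_gb_saving <= 0 else fixed_monthly_usd / per_gb_saving

def payback_months(setup_usd, monthly_gb, fixed_monthly_usd, private_per_gb, internet_per_gb):
    """Months needed for monthly savings to repay one-time setup costs."""
    monthly_saving = monthly_gb * (internet_per_gb - private_per_gb) - fixed_monthly_usd
    return math.inf if monthly_saving <= 0 else setup_usd / monthly_saving

# Hypothetical inputs: $1,500/month for ports and a partner cross-connect,
# $0.02/GB over the private link versus $0.09/GB internet egress.
print(f"break-even: {breakeven_gb(1500, 0.02, 0.09):,.0f} GB/month")
print(f"payback at 50,000 GB/month: {payback_months(5000, 50_000, 1500, 0.02, 0.09):.1f} months")
```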

Data compression and deduplication before transfer yield significant savings. Compression algorithms such as gzip and zstd commonly shrink text-based formats like JSON, CSV, and logs by 40-70%, directly reducing transfer costs, although already-compressed media and archives gain little. Implementing compression at the application layer ensures efficiency regardless of provider.
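Application-layer deduplication and compression can be combined in a few lines of standard-library Python. The sketch below drops records whose content hash was already shipped, then gzips the rest. It assumes the sender can keep (or query) the set of hashes already delivered; the synthetic events are highly repetitive, so they compress far better than typical production data would.

```python
import gzip
import hashlib
import json

def prepare_batch(records, already_sent):
    """Deduplicate records by content hash, then gzip the remainder for transfer.

    already_sent: set of SHA-256 hex digests for records shipped earlier; updated in place.
    Returns (compressed_bytes, uncompressed_size, records_kept).
    """
    fresh = []
    for rec in records:
        digest = hashlib.sha256(json.dumps(rec, sort_keys=True).encode("utf-8")).hexdigest()
        if digest not in already_sent:
            already_sent.add(digest)
            fresh.append(rec)
    raw = json.dumps(fresh).encode("utf-8")
    return gzip.compress(raw), len(raw), len(fresh)

# 1,000 synthetic events, half of them exact duplicates.
events = [{"event": "page_view", "path": "/pricing", "user_id": i % 500} for i in range(1000)]
sent = set()
payload, raw_size, kept = prepare_batch(events, sent)
print(f"kept {kept} of {len(events)} records; {raw_size} -> {len(payload)} bytes")
```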

Right-Sizing Resources Across Clouds

Consistent autoscaling policies across clouds enable dynamic resource allocation based on demand. Native services such as AWS Auto Scaling, Azure Autoscale, and the Google Cloud Compute Engine autoscaler each adjust compute capacity within their own cloud. Properly configured autoscaling can reduce compute costs by 30-50% by eliminating idle capacity during low-demand periods.
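Most target-tracking autoscalers follow the same idea: size capacity so that average utilization returns to a target. The sketch below mirrors the Kubernetes Horizontal Pod Autoscaler formula, `desired = ceil(current * currentMetric / targetMetric)`, clamped to minimum and maximum bounds. It omits the tolerance band and stabilization windows that real autoscalers add, and provider services may differ in detail.

```python
import math

def desired_replicas(current_replicas, current_utilization, target_utilization,
                     min_replicas=1, max_replicas=20):
    """Target-tracking rule: scale so average utilization returns to the target."""
    desired = math.ceil(current_replicas * current_utilization / target_utilization)
    return max(min_replicas, min(max_replicas, desired))

print(desired_replicas(4, 90, 60))    # 6: scale out under load
print(desired_replicas(10, 15, 60))   # 3: scale in overnight, releasing idle capacity
print(desired_replicas(10, 300, 60))  # 20: capped at max_replicas
```

The scale-in case is where savings come from: capacity that would otherwise idle at 15% utilization is released.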

Scheduled scaling addresses predictable usage patterns. Development and testing environments rarely need full production capacity outside business hours. Implementing automated schedules that scale down or shut down these environments during nights and weekends typically reduces associated costs by 60-75%.
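The 60-75% range follows from simple arithmetic: an environment that only runs 12 hours a day on weekdays is up 60 of the week's 168 hours. The sketch below encodes an assumed 08:00-19:59 Monday-to-Friday schedule and computes the off-schedule share; the job that actually stops and starts resources through each provider's API is not shown. Storage attached to stopped instances is typically still billed, so the real saving on the environment's total cost is somewhat lower.

```python
from datetime import datetime

WORKDAYS = range(0, 5)     # Monday=0 through Friday=4
WORK_HOURS = range(8, 20)  # 08:00-19:59 local time (assumed team hours)

def should_run(ts: datetime) -> bool:
    """True if a dev/test environment should be up at local time `ts`."""
    return ts.weekday() in WORKDAYS and ts.hour in WORK_HOURS

def off_hours_fraction() -> float:
    """Share of the week's 168 hours the environment can be shut down."""
    on_hours = len(WORKDAYS) * len(WORK_HOURS)
    return 1 - on_hours / (7 * 24)

print(f"{off_hours_fraction():.0%} of hours are off-schedule")  # 64%
print(should_run(datetime(2024, 6, 3, 9)))   # Monday 09:00 -> True
print(should_run(datetime(2024, 6, 1, 10)))  # Saturday 10:00 -> False
```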

Instance type optimization ensures workloads run on appropriately sized virtual machines. Cloud providers offer dozens of instance families optimized for different use cases. Regularly analyzing workload characteristics and migrating to more suitable instance types can reduce costs by 20-40% while potentially improving performance.
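Right-sizing across providers amounts to picking the cheapest instance type that covers observed peak demand plus headroom. The catalog entries below are hypothetical placeholders, not real SKUs or prices; in practice you would load them from each provider's pricing data and feed in peaks from your monitoring system.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class InstanceType:
    provider: str
    name: str
    vcpus: int
    memory_gib: float
    hourly_usd: float

# Hypothetical catalog: names and prices are illustrative placeholders.
CATALOG = [
    InstanceType("aws", "aws-general-4x16", 4, 16, 0.19),
    InstanceType("azure", "azure-general-4x16", 4, 16, 0.20),
    InstanceType("gcp", "gcp-highmem-2x16", 2, 16, 0.13),
    InstanceType("gcp", "gcp-general-8x32", 8, 32, 0.38),
]

def cheapest_fit(catalog, peak_vcpus, peak_memory_gib, headroom=0.2):
    """Cheapest type covering observed peaks plus headroom, or None if nothing fits."""
    need_cpu = peak_vcpus * (1 + headroom)
    need_mem = peak_memory_gib * (1 + headroom)
    fits = [i for i in catalog if i.vcpus >= need_cpu and i.memory_gib >= need_mem]
    return min(fits, key=lambda i: i.hourly_usd) if fits else None

print(cheapest_fit(CATALOG, 1.5, 12).name)  # gcp-highmem-2x16: memory-heavy, few cores
print(cheapest_fit(CATALOG, 3, 12).name)    # aws-general-4x16
```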

Spot instances, preemptible VMs, and similar interruptible compute options provide 60-90% discounts compared to on-demand pricing. While these instances can be reclaimed with little notice (about two minutes on AWS and roughly 30 seconds on Google Cloud and Azure), they're ideal for fault-tolerant workloads like batch processing, CI/CD pipelines, and stateless web applications.
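Fault tolerance on spot capacity usually means checkpointing: record progress often enough that a replacement instance can resume instead of restarting. The sketch below is a minimal batch worker that saves its position after each item and stops cleanly when asked. It assumes the platform delivers SIGTERM before reclaiming the VM (for example, during a Kubernetes node drain); verify that for your setup. In production the checkpoint must live on durable storage that outlives the VM, such as an object store; a local temp file is used only for the demo.

```python
import json
import os
import signal
import tempfile

class CheckpointedWorker:
    """Batch worker that records progress so a replacement instance can resume."""

    def __init__(self, checkpoint_path):
        self.checkpoint_path = checkpoint_path
        self.stop_requested = False

    def install_sigterm_handler(self):
        # Assumption: SIGTERM arrives before the spot VM is reclaimed.
        signal.signal(signal.SIGTERM, lambda signum, frame: self.request_stop())

    def request_stop(self):
        self.stop_requested = True

    def _load(self):
        if os.path.exists(self.checkpoint_path):
            with open(self.checkpoint_path) as f:
                return json.load(f)["next_index"]
        return 0

    def _save(self, next_index):
        tmp = self.checkpoint_path + ".tmp"
        with open(tmp, "w") as f:
            json.dump({"next_index": next_index}, f)
        os.replace(tmp, self.checkpoint_path)  # atomic swap: never a half-written file

    def run(self, items, process):
        """Process items from the last checkpoint. `process` should be idempotent,
        because an interruption between processing and saving repeats one item."""
        i = self._load()
        while i < len(items) and not self.stop_requested:
            process(items[i])
            i += 1
            self._save(i)
        return i

def demo():
    """Simulate an interruption after 3 items, then resume on a 'new' instance."""
    path = os.path.join(tempfile.mkdtemp(), "checkpoint.json")
    done = []
    first = CheckpointedWorker(path)

    def process_then_interrupt(item):
        done.append(item)
        if len(done) == 3:
            first.request_stop()  # stands in for a SIGTERM arriving mid-batch

    first.run(list(range(10)), process_then_interrupt)
    CheckpointedWorker(path).run(list(range(10)), done.append)
    return done

print(demo())  # [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
```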

Container right-sizing in Kubernetes environments requires particular attention. Setting appropriate CPU and memory requests and limits prevents over-allocation of cluster resources. Tools like Vertical Pod Autoscaler and Goldilocks help determine optimal configurations, typically reducing container infrastructure costs by 25-35%.
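The core idea behind request recommendations can be shown with observed usage samples: size CPU to a high percentile and memory to the peak, each with headroom. This is a simplified stand-in, not the Vertical Pod Autoscaler's actual algorithm (which uses decaying usage histograms); the percentile, headroom, and sample values are assumptions.

```python
import math

def nearest_rank_percentile(samples, pct):
    """Nearest-rank percentile of `samples` (pct between 0 and 100)."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

def recommend_requests(cpu_millicores, memory_mib, cpu_pct=90, headroom_pct=15):
    """Suggest Kubernetes resource requests from observed usage samples."""
    # CPU is compressible (excess usage is throttled), so a high percentile suffices.
    cpu = math.ceil(nearest_rank_percentile(cpu_millicores, cpu_pct) * (100 + headroom_pct) / 100)
    # Memory is not compressible (a container over its limit is OOM-killed), so size to the peak.
    mem = math.ceil(max(memory_mib) * (100 + headroom_pct) / 100)
    return {"cpu": f"{cpu}m", "memory": f"{mem}Mi"}

cpu_samples = [100, 120, 150, 130, 110, 400, 140, 125, 135, 145]  # millicores
mem_samples = [300, 350, 512, 480]                                  # MiB
print(recommend_requests(cpu_samples, mem_samples))  # {'cpu': '173m', 'memory': '589Mi'}
```

Note how the single 400m CPU spike does not inflate the request, whereas a request sized to the maximum would reserve more than twice the typical usage.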

Centralizing Cost Visibility and Monitoring

Unified cost management platforms like VMware Tanzu CloudHealth, Apptio Cloudability, and Flexera Cloud Cost Optimization aggregate billing data from AWS, Azure, GCP, and smaller providers into single dashboards. These platforms normalize pricing across providers, enabling apples-to-apples comparisons and identifying cost inefficiencies that span clouds.
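Normalization is the step that makes cross-cloud comparison possible: each provider's billing export uses different column names, so rows are mapped onto one shared schema before aggregation. The sketch below uses simplified, illustrative column names for each export (real exports vary by version and format, and nested tag or label columns are assumed to be flattened); the output columns are loosely modeled on the FinOps Foundation's FOCUS specification. It also assumes all exports share one currency. Commercial platforms do far more, but the principle is the same.

```python
from collections import defaultdict

# Simplified, illustrative column names per provider export (assumptions).
FIELD_MAP = {
    "aws":   {"cost": "lineItem/UnblendedCost", "service": "product/ProductName",
              "team": "resourceTags/user:team"},
    "azure": {"cost": "costInBillingCurrency", "service": "meterCategory",
              "team": "tags.team"},
    "gcp":   {"cost": "cost", "service": "service.description",
              "team": "labels.team"},
}

def normalize(provider, row):
    """Map one provider-specific billing row onto a shared schema."""
    cols = FIELD_MAP[provider]
    return {
        "ProviderName": provider,
        "ServiceName": row.get(cols["service"], "unknown"),
        "BilledCost": float(row.get(cols["cost"]) or 0.0),
        "Team": row.get(cols["team"]) or "untagged",
    }

def cost_by(rows_by_provider, dimension):
    """Total normalized cost grouped by one shared column, across all clouds."""
    totals = defaultdict(float)
    for provider, rows in rows_by_provider.items():
        for row in rows:
            record = normalize(provider, row)
            totals[record[dimension]] += record["BilledCost"]
    return dict(totals)

rows = {
    "aws":   [{"lineItem/UnblendedCost": "12.50", "product/ProductName": "Amazon EC2",
               "resourceTags/user:team": "search"}],
    "azure": [{"costInBillingCurrency": 7.5, "meterCategory": "Virtual Machines",
               "tags.team": "search"}],
    "gcp":   [{"cost": 3.0, "service.description": "Compute Engine"}],
}
print(cost_by(rows, "Team"))          # {'search': 20.0, 'untagged': 3.0}
print(cost_by(rows, "ProviderName"))  # {'aws': 12.5, 'azure': 7.5, 'gcp': 3.0}
```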

AI-powered anomaly detection identifies unusual spending patterns that may indicate misconfiguration, security breaches, or operational issues. Services like Azure Cost Management Anomaly Detection and AWS Cost Anomaly Detection use machine learning to flag unusual patterns. Early detection of cost spikes can prevent bill shock and save thousands of dollars.
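The managed services use machine-learning models, but the underlying idea can be illustrated with a much simpler statistical rule: flag a day whose spend sits several standard deviations above its trailing average. This z-score sketch is a swapped-in simplification, not how AWS or Azure anomaly detection works; the window and threshold are assumptions.

```python
from statistics import mean, stdev

def spend_anomalies(daily_spend, window=14, threshold=3.0):
    """Indices of days whose spend sits more than `threshold` standard deviations
    above the mean of the preceding `window` days."""
    flagged = []
    for i in range(window, len(daily_spend)):
        history = daily_spend[i - window:i]
        mu = mean(history)
        sigma = max(stdev(history), 1e-9)  # avoid dividing by zero on perfectly flat spend
        if (daily_spend[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged

spend = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101, 99, 100, 102, 98,
         100, 250, 101]
print(spend_anomalies(spend))  # [15]: the $250 day
```

A fixed z-score ignores weekly seasonality and gradual growth, which is exactly where ML-based detectors earn their keep.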

Tag standardization across clouds enables granular cost attribution. Implementing consistent tagging schemas for project, environment, team, cost center, and application allows precise allocation of expenses. While each provider has unique tagging systems, establishing cross-cloud standards ensures comparability.
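A cross-cloud standard is easiest to enforce with one validator applied to every provider's tags. Because Google Cloud label keys must be lowercase, the sketch below normalizes all keys to a lowercase, hyphenated convention so the same schema works everywhere. The required tag names and allowed environment values are assumptions drawn from the schema described above.

```python
import re

REQUIRED_TAGS = {"project", "environment", "team", "cost-center", "application"}
ALLOWED_ENVIRONMENTS = {"dev", "staging", "prod"}
# Lowercase, starts with a letter, at most 63 characters: portable across clouds.
KEY_PATTERN = re.compile(r"^[a-z][a-z0-9_-]{0,62}$")

def normalize_key(key):
    """Lowercase a tag key and replace disallowed characters with hyphens."""
    return re.sub(r"[^a-z0-9_-]", "-", key.strip().lower())

def validate_tags(tags):
    """Return (normalized_tags, problems) for one resource's tags."""
    normalized = {normalize_key(k): str(v).strip().lower() for k, v in tags.items()}
    problems = [f"missing tag: {t}" for t in sorted(REQUIRED_TAGS - set(normalized))]
    problems += [f"invalid key: {k}" for k in normalized if not KEY_PATTERN.match(k)]
    env = normalized.get("environment")
    if env is not None and env not in ALLOWED_ENVIRONMENTS:
        problems.append(f"invalid environment: {env}")
    return normalized, problems

tags = {"Project": "Checkout", "Environment": "Production", "Team": "payments",
        "Cost Center": "cc-42"}
normalized, problems = validate_tags(tags)
print(normalized)
print(problems)  # ['missing tag: application', 'invalid environment: production']
```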

Near-real-time cost monitoring moves beyond monthly invoice reviews to provide immediate feedback on spending trends. Dashboards that update hourly or daily enable rapid response to cost anomalies before they significantly affect budgets. Alerts on unusual patterns act as an early-warning system that prevents budget overruns.
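A simple early-warning rule projects month-end spend from the current run rate and compares it with the budget. The sketch below uses a linear projection and an assumed 90% warning threshold; it assumes `spend_to_date` covers every day up to and including `today`, and real billing data often lags by several hours.

```python
import calendar
from datetime import date

def forecast_month_end(spend_to_date, today):
    """Linear projection of month-end spend from the month-to-date run rate."""
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    return spend_to_date / today.day * days_in_month

def budget_alert(spend_to_date, today, monthly_budget, warn_at=0.9):
    """Return an alert message, or None if the projection is within budget."""
    projected = forecast_month_end(spend_to_date, today)
    if projected > monthly_budget:
        return f"ALERT: projected ${projected:,.0f} exceeds budget ${monthly_budget:,.0f}"
    if projected > warn_at * monthly_budget:
        return f"WARN: projected ${projected:,.0f} is above {warn_at:.0%} of budget"
    return None

print(budget_alert(4000, date(2024, 6, 10), 10_000))
# ALERT: projected $12,000 exceeds budget $10,000
```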

Implementing FinOps for Multi-Cloud

FinOps brings engineering, finance, and operations teams together to make data-driven spending decisions. The FinOps Foundation defines three phases: Inform (visibility and allocation), Optimize (reduce waste), and Operate (continuous governance).

Establish cost ownership by assigning monthly budgets to engineering teams. Teams view their cloud costs in real-time dashboards, and cost metrics are included in sprint reviews. Cost inefficiencies are treated like technical debt and prioritized in the backlog.

Include cost analysis in architecture review processes. Estimate costs of proposed changes before implementation, compare architectural alternatives by cost, and model expenses at 10x and 100x scale. Document cost considerations in design documents.
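Modeling at 10x and 100x scale can start as a spreadsheet-style linear model: each component has a fixed monthly cost plus a variable cost per unit of demand. The components and figures below are hypothetical placeholders. Real costs are rarely linear (tiered egress, volume discounts, and architectural limits all bend the curve), but even this crude model shows which component comes to dominate as the product grows.

```python
# Hypothetical cost model: each component has a fixed monthly cost plus a
# variable cost per 1,000 monthly active users (all figures are placeholders).
COMPONENTS = {
    "base infrastructure": (1500.0, 0.0),
    "compute": (0.0, 40.0),
    "database": (400.0, 12.0),
    "cross-cloud egress": (0.0, 9.0),
}

def monthly_cost(active_users, components=COMPONENTS):
    """Per-component monthly USD under a simple linear (fixed + variable) model."""
    thousands = active_users / 1000
    return {name: fixed + per_k * thousands
            for name, (fixed, per_k) in components.items()}

baseline_users = 20_000
for multiple in (1, 10, 100):
    users = baseline_users * multiple
    costs = monthly_cost(users)
    total = sum(costs.values())
    top = max(costs, key=costs.get)
    print(f"{multiple:>3}x: ${total:>9,.0f}/month, ${total / users:.3f}/user, largest: {top}")
```

In this example the largest line item shifts from fixed base infrastructure at 1x to compute at 10x and beyond, which tells the design review where optimization effort should go.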

Track cost efficiency metrics like cost per API request, cost per transaction, cost per active user, and month-over-month cost trends. Display cost metrics alongside performance metrics in engineering dashboards, making cost as visible as latency and error rates.

Celebrate cost optimization wins. Recognize and reward teams that reduce costs while maintaining performance. Include cost efficiency in performance reviews and share optimization case studies across the organization.

Advanced Cost-Saving Tools

AI-driven optimization platforms represent cutting-edge cost management technology. ProsperOps offers autonomous Reserved Instance and Savings Plan management for AWS and Azure, using machine learning to continuously optimize commitment portfolios. Users can achieve 30-50% discounts on compute spend through optimized commitments.
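The core trade-off in commitment management can be shown with a simplified model: a commitment covers a fixed amount of hourly usage at a discount and is paid every hour whether used or not, while usage above it is billed on demand. The brute-force search below finds the cheapest commitment level for one day of hypothetical hourly spend. This is an illustration of the economics only, not how ProsperOps or any provider's billing actually computes charges.

```python
def cost_with_commitment(hourly_spend, commit, discount):
    """Total cost when `commit` USD/hour of on-demand-equivalent usage is prepaid
    at a discount. The commitment is paid every hour whether used or not; usage
    above it is billed at on-demand rates. A simplified model."""
    return sum(commit * (1 - discount) + max(0.0, spend - commit)
               for spend in hourly_spend)

def best_commitment(hourly_spend, discount, step=1.0):
    """Brute-force the cheapest commitment level in increments of `step`."""
    levels = [i * step for i in range(int(max(hourly_spend) / step) + 1)]
    return min(levels, key=lambda c: cost_with_commitment(hourly_spend, c, discount))

# One day of hourly compute spend: a $10/hour baseline, doubling during 12 busy hours.
spend = [10.0] * 12 + [20.0] * 12
best = best_commitment(spend, discount=0.30)
on_demand = sum(spend)
committed = cost_with_commitment(spend, best, 0.30)
print(best, on_demand, round(committed, 2))  # 10.0 360.0 288.0
```

Raising the commitment by one dollar costs `1 - discount` in every hour but only saves money in hours where usage exceeds it, so the optimum sits where the share of hours above the commitment equals `1 - discount`. Here that is the $10 baseline: commit to the floor and leave peaks to autoscaling or spot capacity.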

Cast AI specializes in real-time Kubernetes cost optimization across AWS, GCP, and Azure. The platform automatically right-sizes nodes, selects optimal instance types, leverages spot instances intelligently, and scales clusters based on actual workload requirements. For Kubernetes-heavy startups, Cast AI can reduce cluster costs by 50-70%.

Infrastructure-as-Code platforms like HashiCorp Terraform Cloud embed cost awareness into provisioning processes. By integrating cost estimation into pull request workflows, teams see financial impact of infrastructure changes before deployment. This shift-left approach prevents expensive mistakes from reaching production.
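Terraform Cloud's built-in cost estimation produces these figures for you; the standalone sketch below only illustrates the shift-left idea on a plan file. It reads the JSON produced by `terraform show -json <planfile>`, sums prices for resources being created, and fails a hypothetical budget gate. The price table is a placeholder, not current list pricing, and deletions are not credited back.

```python
import json

# Hypothetical monthly prices keyed by (resource type, instance type);
# placeholders for illustration, not current list prices.
MONTHLY_PRICE_USD = {
    ("aws_instance", "t3.large"): 60.0,
    ("aws_instance", "m5.2xlarge"): 280.0,
}

def estimate_added_cost(plan):
    """Sum placeholder monthly prices for resources the plan will create.

    Replacements count as creates; deletions are not credited back (a deliberate
    simplification). Returns (estimated_usd, addresses_without_a_price).
    """
    total, unpriced = 0.0, []
    for rc in plan.get("resource_changes", []):
        change = rc["change"]
        if "create" not in change["actions"]:
            continue
        after = change.get("after") or {}
        key = (rc["type"], after.get("instance_type"))
        if key in MONTHLY_PRICE_USD:
            total += MONTHLY_PRICE_USD[key]
        else:
            unpriced.append(rc["address"])
    return total, unpriced

def cost_gate(plan, max_increase_usd):
    """True if the change may merge under the (hypothetical) cost policy."""
    added, _ = estimate_added_cost(plan)
    return added <= max_increase_usd

example_plan = json.loads("""{"resource_changes": [
  {"address": "aws_instance.api", "type": "aws_instance",
   "change": {"actions": ["create"], "after": {"instance_type": "m5.2xlarge"}}},
  {"address": "aws_instance.jobs", "type": "aws_instance",
   "change": {"actions": ["no-op"], "after": {"instance_type": "t3.large"}}},
  {"address": "aws_s3_bucket.logs", "type": "aws_s3_bucket",
   "change": {"actions": ["create"], "after": {}}}
]}""")
print(estimate_added_cost(example_plan))               # (280.0, ['aws_s3_bucket.logs'])
print(cost_gate(example_plan, max_increase_usd=200))   # False
```

Surfacing the unpriced addresses matters: a gate that silently treats unknown resources as free gives false confidence.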

Managed open-source platforms such as Aiven provide cloud-neutral data services that run on any major cloud provider. This approach reduces vendor lock-in, enables workload portability, and can cost 20-40% less than equivalent managed services from cloud providers.

Conclusion

Multi-cloud cost optimization for startups requires systematic attention to workload placement, data transfer minimization, resource right-sizing, and centralized visibility. Start with high-impact wins like eliminating idle resources, implementing basic tagging, and establishing cost monitoring dashboards. As capabilities mature, progress to sophisticated approaches like automated right-sizing, commitment optimization, and AI-driven workload placement. The most successful startups prioritize cost observability, embrace automation to eliminate manual toil, and build cost awareness into organizational culture through FinOps practices. Multi-cloud environments will continue evolving with new services and pricing models—regular quarterly reviews ensure your approach remains current and effective, transforming multi-cloud from a cost center into a competitive advantage.

Frequently Asked Questions

How can startups reduce multi-cloud costs without sacrificing performance?

Startups can reduce multi-cloud costs while maintaining or improving performance by implementing strategic workload placement based on performance characteristics rather than just cost. Use performance benchmarking to identify which cloud providers offer the best price-to-performance ratio for specific workload types. Leverage autoscaling policies that maintain performance during peak loads while reducing capacity during quiet periods. Implement caching layers and CDNs to reduce both costs and latency for end users. The key is measuring cost per transaction or cost per user rather than total spending.

What are the best multi-cloud cost management tools for early-stage startups with limited budgets?

Early-stage startups should begin with free native tools from cloud providers: AWS Cost Explorer, Azure Cost Management, and Google Cloud Cost Management provide basic visibility without additional cost. As spending grows beyond $10,000-$20,000 monthly, invest in unified platforms like CloudHealth or Cloudability that aggregate data across clouds. For Kubernetes-focused startups, the open-source OpenCost project and Kubecost's free tier provide strong visibility at minimal cost. Many advanced platforms like ProsperOps and Cast AI offer free tiers or percentage-of-savings pricing models that align costs with value delivered.

Is multi-cloud strategy inherently more expensive than single-cloud deployment?

Multi-cloud strategies are typically 15-30% more expensive than optimized single-cloud deployments when factoring in management overhead, data transfer costs, and tool proliferation. However, this cost comparison misses important benefits: reduced vendor lock-in risk, improved negotiating leverage with cloud providers, access to best-of-breed services from different clouds, and enhanced resilience through geographic distribution. For many startups, these benefits justify the cost premium. The key is ensuring the premium remains in the 15-30% range through active optimization rather than allowing it to balloon to 50%+ through poor management.

How do AWS, Azure, and GCP pricing models compare for typical startup workloads?

Pricing comparisons are highly workload-dependent, but general patterns exist. AWS typically offers the most granular pricing with the widest selection of instance types, making it cost-effective when precisely matched to workload requirements. GCP often provides the lowest base pricing for compute resources and applies sustained use discounts automatically, without commitments, on many machine types. Azure provides competitive pricing for Windows-based workloads and offers strong integration pricing benefits for Microsoft stack users. For typical startup workloads, pricing differences are usually within 10-20% when equivalent service tiers are compared.

What are the biggest mistakes startups make with multi-cloud spending?

The most common and costly mistakes include: implementing multi-cloud without clear strategic reasons, adding complexity without corresponding benefits; failing to establish cost visibility from day one, allowing spending to grow unchecked until budget crises force reactive cuts; overprovisioning resources across all clouds, resulting in 40-60% waste from idle capacity; neglecting data transfer costs during architecture design, leading to bill shock from egress fees; allowing teams to independently provision resources without central oversight, creating redundant services and fragmented spending; and treating cost optimization as a one-time project rather than an ongoing practice with dedicated ownership and regular reviews.