Cut Data Transfer and Storage Costs by Up to 50%

Reduce cloud data transfer and storage costs by up to 50% with VPC endpoints, S3 lifecycle policies, compression, and Intelligent-Tiering.

TL;DR

- Data transfer costs are hidden budget killers: Egress, cross-AZ, and internet transfer can reach 15-25% of cloud spend for data-intensive workloads.

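- VPC endpoints cut NAT gateway charges: Gateway endpoints (S3 and DynamoDB) carry no additional charge. Interface endpoints (most other services) are billed per hour and per GB processed, but typically cost less than NAT data processing at high volume. Both keep traffic within the AWS network, which can save thousands monthly.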

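- S3 lifecycle policies tier storage automatically by object age: Standard → Standard-IA (after 30 days, save 40-60%) → Glacier (after 90 days, save 70-80%) → Deep Archive (after 180 days, save 90%+). Colder tiers add retrieval fees and minimum storage durations, so they suit data that is rarely read.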

- Compression reduces both storage and transfer: gzip or brotli typically shrinks text data 60-80%. Columnar formats such as Parquet or ORC, combined with predicate pushdown, can cut scan costs 80-90%.

- Intelligent Tiering automates optimization: Monitors access patterns, moves data automatically. Best for unknown or changing patterns.
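The lifecycle schedule in the summary above can be written down as a configuration document. The following is a minimal sketch, assuming a single rule that applies to one key prefix; the rule ID and the `logs/` prefix are hypothetical, and the day counts are simply the ones quoted above.

```python
import json

# Transition schedule from the summary above: (object age in days, S3 storage class).
# "GLACIER" is the API name for S3 Glacier Flexible Retrieval.
TRANSITIONS = [
    (30, "STANDARD_IA"),
    (90, "GLACIER"),
    (180, "DEEP_ARCHIVE"),
]


def build_lifecycle_configuration(prefix=""):
    """Build a lifecycle configuration dict in the shape the S3 API accepts.

    `prefix` is a hypothetical key prefix; an empty string matches every object.
    """
    return {
        "Rules": [
            {
                "ID": "tier-down-by-age",  # illustrative rule name
                "Status": "Enabled",
                "Filter": {"Prefix": prefix},
                "Transitions": [
                    {"Days": days, "StorageClass": storage_class}
                    for days, storage_class in TRANSITIONS
                ],
            }
        ]
    }


if __name__ == "__main__":
    print(json.dumps(build_lifecycle_configuration("logs/"), indent=2))
```

With boto3, a dict of this shape can be passed as the `LifecycleConfiguration` argument of `put_bucket_lifecycle_configuration`. Test it on a non-production bucket first, since transitions are billed per request and are not easily undone.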

Data transfer and storage are among the largest cost optimization opportunities for cloud-native organizations. Applied systematically, the practices below can reduce expenses by 40-70% while maintaining performance and reliability. This guide covers resource optimization, automation, monitoring, and architectural patterns that deliver measurable savings.

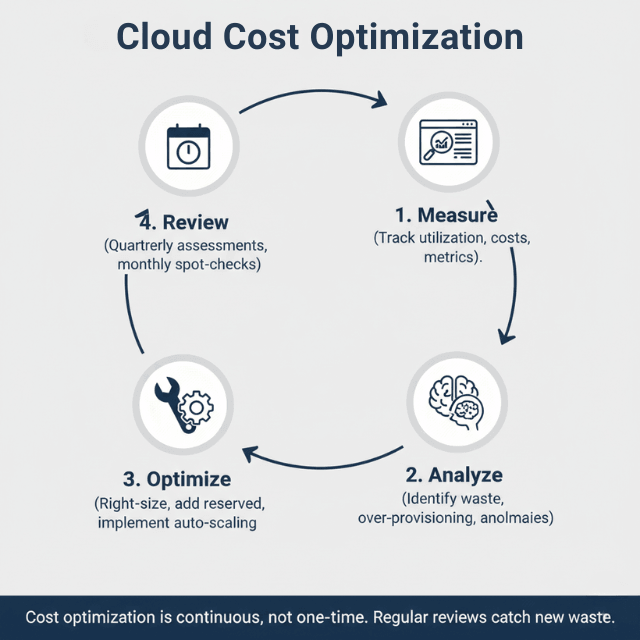

Cloud cost optimization requires systematic attention to resource provisioning, utilization monitoring, and continuous improvement. Organizations often discover significant waste in over-provisioned resources, idle capacity, and inefficient architectures, which tools such as AWS Trusted Advisor can flag. Modern cloud platforms provide powerful optimization tools, but successful implementation demands methodical analysis and incremental changes validated through metrics.

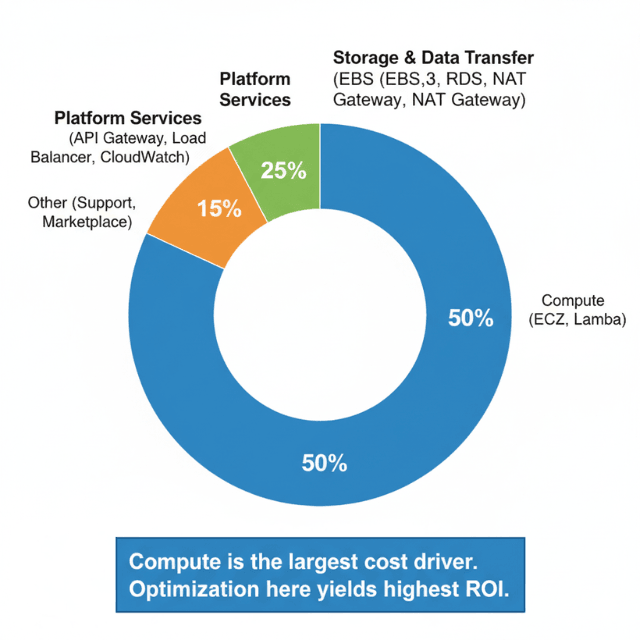

Understanding Cost Drivers

Primary cost drivers include compute resources consuming 40-60% of cloud budgets, storage and data transfer representing 20-30% of expenses, and various platform services contributing the remainder. Identifying specific cost sources enables targeted optimization efforts with maximum impact.

Resource over-provisioning stems from conservative capacity planning where teams allocate excess capacity without validation. Development environments often mirror production sizing despite lower requirements. Legacy migration patterns frequently perpetuate on-premises sizing without cloud-native optimization.

Hidden costs accumulate through data transfer fees, API requests, monitoring overhead, and backup storage. These seemingly minor expenses compound at scale, potentially representing 15-25% of total cloud spending for large deployments.
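To illustrate how a small per-GB fee compounds at scale, here is a rough estimate of NAT gateway cost and of the amount avoided by routing S3 traffic through a gateway endpoint instead. The default rates are placeholder assumptions, not quoted prices; substitute the current rates for your region.

```python
def monthly_nat_cost(gb_per_month, processing_per_gb=0.045,
                     hourly_rate=0.045, hours=730.0):
    """Estimated monthly NAT gateway cost: fixed hourly charge plus per-GB processing.

    All rates are assumed placeholder values in USD.
    """
    return hourly_rate * hours + processing_per_gb * gb_per_month


def gateway_endpoint_savings(s3_gb_per_month, processing_per_gb=0.045):
    """Processing fees avoided when S3 traffic bypasses the NAT gateway.

    Gateway endpoints have no additional charge, so the saving is the
    NAT data processing fee on the rerouted traffic.
    """
    return processing_per_gb * s3_gb_per_month


if __name__ == "__main__":
    total_gb, s3_gb = 10_000, 8_000  # hypothetical monthly volumes
    print(f"NAT cost:        ${monthly_nat_cost(total_gb):,.2f}")
    print(f"Endpoint saving: ${gateway_endpoint_savings(s3_gb):,.2f}")
```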

Optimization Strategies

Right-sizing prevents wasteful over-provisioning by matching instance types and sizes to actual workload requirements. Analyze utilization metrics over a representative period to identify instances running below 40% average utilization; AWS Compute Optimizer provides recommendations of this kind. These instances are downsizing candidates where a smaller configuration would still maintain adequate performance.
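The 40% rule of thumb above can be applied to exported utilization data with a few lines of code. This is a sketch under the assumption that you already have CPU samples per instance; the instance names and sample values are made up for illustration.

```python
from statistics import mean


def downsizing_candidates(cpu_samples, threshold=40.0):
    """Return names of instances whose average CPU utilization is below `threshold`.

    `cpu_samples` maps an instance name to a list of utilization percentages.
    Instances with no samples are skipped rather than flagged.
    """
    return sorted(
        name
        for name, samples in cpu_samples.items()
        if samples and mean(samples) < threshold
    )


if __name__ == "__main__":
    samples = {  # hypothetical data
        "web-1": [10.0, 20.0, 30.0],
        "db-1": [70.0, 80.0],
        "batch-1": [39.0, 40.0],
    }
    print(downsizing_candidates(samples))
```

Average CPU alone can hide short peaks and memory pressure, so treat the output as a list of candidates to investigate, not a list of changes to apply.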

Automated scaling adjusts capacity dynamically based on demand, eliminating idle resources during low-traffic periods while maintaining performance during peaks. Configure auto-scaling policies with thresholds, cooldown periods, and scaling increments that prevent both resource waste and performance degradation.
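How thresholds, cooldowns, and increments interact can be seen in a toy decision function. This is a simplified sketch, not the algorithm any particular cloud auto-scaler uses; every default value here is an assumption chosen for illustration.

```python
def scaling_decision(current, cpu_percent, seconds_since_last_action, *,
                     scale_out_above=70.0, scale_in_below=30.0, step=1,
                     cooldown_seconds=300, min_capacity=1, max_capacity=10):
    """Return the desired instance count for one evaluation cycle.

    During the cooldown window nothing changes, which avoids flapping.
    Otherwise capacity moves by `step` and is clamped to the allowed range.
    """
    if seconds_since_last_action < cooldown_seconds:
        return current
    if cpu_percent > scale_out_above:
        return min(current + step, max_capacity)
    if cpu_percent < scale_in_below:
        return max(current - step, min_capacity)
    return current


if __name__ == "__main__":
    print(scaling_decision(4, 85.0, 600))  # high load, cooldown elapsed
    print(scaling_decision(4, 85.0, 60))   # high load, still cooling down
    print(scaling_decision(4, 10.0, 600))  # low load, cooldown elapsed
```

A wide gap between the scale-out and scale-in thresholds, as assumed here, is one common way to keep the group from oscillating.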

Reserved capacity and savings plans provide 40-70% discounts for predictable baseline workloads. Analyze historical usage to identify stable capacity suitable for commitment-based pricing. Cover the baseline with reserved capacity and the variable load with on-demand or spot instances to maximize savings while keeping flexibility.
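The trade-off between committed baseline and on-demand overflow is simple arithmetic. The sketch below assumes a flat on-demand rate and a single discount for committed capacity; the demand series, rate, and discount are hypothetical inputs.

```python
def blended_cost(hourly_demand, baseline_units, on_demand_rate, commitment_discount):
    """Total cost when `baseline_units` are committed and the rest is on-demand.

    Committed units are paid for every hour whether or not they are used,
    which is why over-committing can cost more than it saves.
    """
    committed_rate = on_demand_rate * (1 - commitment_discount)
    total = 0.0
    for demand in hourly_demand:
        total += baseline_units * committed_rate
        total += max(demand - baseline_units, 0) * on_demand_rate
    return total


if __name__ == "__main__":
    demand = [10, 10, 20, 5]  # hypothetical units needed per hour
    all_on_demand = blended_cost(demand, 0, 1.0, 0.5)
    with_baseline = blended_cost(demand, 10, 1.0, 0.5)
    print(all_on_demand, with_baseline)
```

Trying several values of `baseline_units` against real usage history is a quick way to find the commitment level where the total stops falling.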

Implementation Best Practices

Systematic review processes enable continuous optimization. Schedule quarterly assessments analyzing cost trends, utilization patterns, and optimization recommendations. Monthly spot-checks of highest-cost resources catch obvious inefficiencies early.

Tagging strategies enable accurate cost allocation by project, team, environment, or customer. Implement consistent tagging policies enforced through automation. Tag-based cost reports provide visibility into spending patterns supporting optimization prioritization and accountability.
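Automated enforcement of a tagging policy can start as a simple check for missing keys. This sketch assumes a hypothetical policy of three required tags and treats empty values as missing; adapt the set to your own policy.

```python
# Hypothetical required tag keys, compared case-insensitively.
REQUIRED_TAGS = {"project", "team", "environment"}


def missing_tags(resource_tags):
    """Return the required tag keys that are absent or blank on a resource.

    `resource_tags` maps tag keys to string values.
    """
    present = {
        key.lower()
        for key, value in resource_tags.items()
        if value.strip()
    }
    return REQUIRED_TAGS - present


if __name__ == "__main__":
    tags = {"Project": "checkout", "team": ""}  # illustrative resource
    print(sorted(missing_tags(tags)))
```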

Monitoring and alerting catch cost anomalies before significant budget impact. Configure budget thresholds with automated alerts at 80%, 90%, and 100% of planned spending. Anomaly detection identifies unusual patterns indicating configuration errors or unexpected usage growth.
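The 80%, 90%, and 100% alert levels described above reduce to a small comparison. This is a sketch of the logic only, assuming spend and budget figures are already available; managed budgeting services implement the same idea with notifications attached.

```python
def crossed_thresholds(spend, budget, thresholds=(0.8, 0.9, 1.0)):
    """Return the alert thresholds that current spend has reached.

    Thresholds are fractions of the planned budget.
    """
    if budget <= 0:
        raise ValueError("budget must be positive")
    ratio = spend / budget
    return [t for t in thresholds if ratio >= t]


if __name__ == "__main__":
    print(crossed_thresholds(850.0, 1000.0))
    print(crossed_thresholds(1200.0, 1000.0))
```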

Monitoring and Metrics

Track key performance indicators including total monthly cloud spending, cost per transaction or user, resource utilization percentages, and waste identified through optimization reviews. Establish baseline metrics enabling measurement of optimization progress over time.

Cost per unit metrics normalize spending against business outcomes. Calculate cost per customer, transaction, API call, or other relevant unit economics. This reveals whether cost growth aligns with business value or represents inefficiency requiring optimization.
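A worked example shows why unit cost matters more than the raw bill. The figures below are invented for illustration: spend rises 20% month over month, but transactions rise 50%, so cost per transaction falls.

```python
def cost_per_unit(total_cost, units):
    """Spending normalized by a business unit such as transactions or customers."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units


if __name__ == "__main__":
    # Hypothetical monthly figures: (total cost in USD, transactions).
    last_month = cost_per_unit(10_000.0, 2_000_000)
    this_month = cost_per_unit(12_000.0, 3_000_000)
    trend = "improving" if this_month < last_month else "worsening"
    print(f"{last_month:.4f} -> {this_month:.4f} per transaction ({trend})")
```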

Utilization dashboards visualize resource consumption across compute, storage, and platform services. Highlight under-utilized resources as optimization candidates, and track utilization trends to ensure optimizations don't overcorrect and cause performance issues.

Conclusion

Effective cost optimization balances expense reduction against performance, reliability, and agility requirements. Systematic approaches achieve 40-70% savings through right-sizing, automated scaling, commitment-based discounts, and architectural improvements. Implement regular review cycles, comprehensive monitoring, and gradual changes validated through metrics.

Success requires ongoing discipline rather than one-time optimization projects, with continuous monitoring catching new inefficiencies as workloads evolve. Establish cost awareness as part of engineering culture where optimization considerations inform architectural decisions alongside functionality and performance requirements.

Frequently Asked Questions

How much cost reduction is realistic through optimization?

Most organizations achieve 40-60% reduction through systematic optimization. Exact savings depend on current efficiency levels, with poorly optimized environments offering greater improvement potential. Even well-managed clouds typically find 20-30% savings through continuous optimization practices.

How often should I review and optimize cloud costs?

Conduct detailed quarterly reviews analyzing trends, utilization patterns, and optimization recommendations. Implement monthly spot-checks of highest-cost resources. Establish automated monitoring and alerting for continuous anomaly detection between formal review cycles.

What metrics should I track to measure optimization success?

Track total monthly cloud spending, cost per business unit (transaction, user, customer), resource utilization percentages, and savings realized from optimization initiatives. Monitor trends over time to confirm that optimization efforts deliver sustained cost reduction without performance degradation.
