Edge Computing and Its Impact on SaaS Performance

Understand edge computing's impact on SaaS performance. Learn edge platforms (Cloudflare Workers, Lambda@Edge), use cases, data management, and architectural patterns for sub-10ms latency.

TL;DR

- Edge computing moves computation to users: Instead of sending every request to a centralized data center, process it at one of hundreds of edge locations worldwide. For requests served entirely at the edge, this can cut network latency for distant users from 200+ ms to single-digit milliseconds.

- Not all workloads fit the edge: Edge excels at stateless, quick operations—authentication, A/B testing, rate limiting, image transformation. Stateful, database-heavy operations remain in centralized backends.

- Major edge platforms:

  - Cloudflare Workers: JavaScript at 300+ locations, fast cold starts, KV storage for edge data.

  - Lambda@Edge / CloudFront Functions: AWS edge compute, integrated with CloudFront.

  - Vercel Edge Functions: Seamless Next.js integration, global by default.

  - Fastly Compute: WebAssembly-based, sub-millisecond execution.

- Edge data is eventually consistent: Use edge KV stores for session data, config, and cached content—fast reads, but writes propagate with delay. For strong consistency, use Durable Objects or route to origin.

- Security happens at the perimeter: Validate JWTs, enforce rate limits, block malicious patterns at edge before they reach origin. This reduces backend load and attack surface.

- Measure edge effectiveness with RUM: Real User Monitoring reveals actual latency per location. Track cache hit ratios, cold start frequency, and time-to-first-byte from user perspective.

Edge computing moves computation closer to users. Instead of routing every request to centralized data centers, edge locations around the world handle requests locally.

For SaaS applications serving global users, edge computing can dramatically reduce latency. Understanding what runs well at the edge—and what doesn't—enables architectures that combine edge performance with centralized reliability.

Understanding Edge Computing

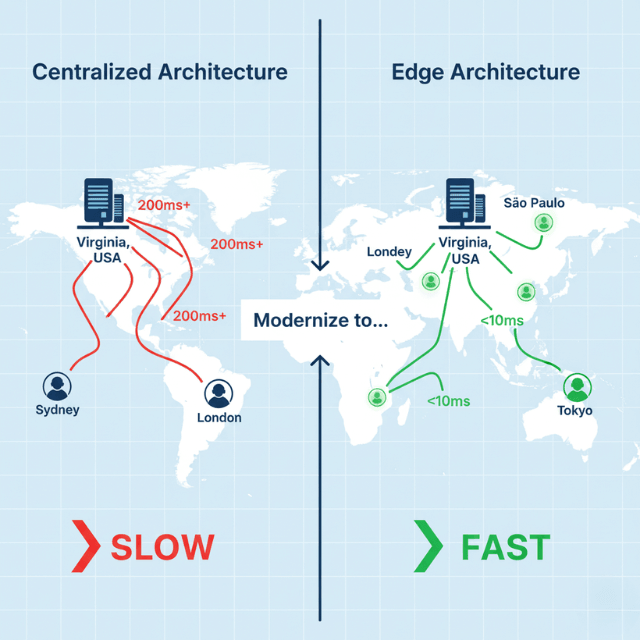

Traditional cloud architecture routes requests to centralized data centers. Users in Sydney connecting to servers in Virginia experience 200+ milliseconds of network latency. This latency is physics—light travels only so fast.
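To see why this is a floor rather than a tuning problem, here is a rough back-of-the-envelope sketch. The coordinates are approximate, and the figure of about 200,000 km/s for light in optical fiber is a common rule of thumb (roughly two thirds of its speed in a vacuum). Real routes are longer than the great-circle path, so actual latency is higher.

```javascript
// Rough lower bound on round-trip time between two points on Earth.
// Assumptions: great-circle path, ~200,000 km/s signal speed in fiber.
const EARTH_RADIUS_KM = 6371;
const FIBER_SPEED_KM_PER_S = 200000;

function toRadians(degrees) {
  return (degrees * Math.PI) / 180;
}

// Haversine great-circle distance in kilometers
function greatCircleKm(a, b) {
  const dLat = toRadians(b.lat - a.lat);
  const dLon = toRadians(b.lon - a.lon);
  const h =
    Math.sin(dLat / 2) ** 2 +
    Math.cos(toRadians(a.lat)) * Math.cos(toRadians(b.lat)) * Math.sin(dLon / 2) ** 2;
  return 2 * EARTH_RADIUS_KM * Math.asin(Math.sqrt(h));
}

function minRoundTripMs(a, b) {
  return ((2 * greatCircleKm(a, b)) / FIBER_SPEED_KM_PER_S) * 1000;
}

// Approximate coordinates (assumed for illustration)
const sydney = { lat: -33.87, lon: 151.21 };
const northernVirginia = { lat: 39.04, lon: -77.49 };

console.log(Math.round(greatCircleKm(sydney, northernVirginia)), 'km');
console.log(Math.round(minRoundTripMs(sydney, northernVirginia)), 'ms minimum round trip');
```

Under these assumptions the floor is on the order of 150 ms before any routing detours, TLS handshakes, or server processing, which is consistent with the 200+ ms seen in practice.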

Edge computing distributes processing to locations near users. Edge nodes exist in dozens or hundreds of cities worldwide. Requests travel shorter distances, reducing latency to single-digit milliseconds.

CDN edge locations already serve static content globally. Edge computing extends this model to dynamic computation. Run code, not just cache content, at the edge.

Not all workloads suit edge deployment. Stateless, quick operations work well. Stateful, database-heavy operations face challenges. The edge excels at request manipulation, authentication, and caching logic.

Edge locations have constraints. Limited compute resources. Little or no local persistent storage; durable state lives in platform services such as KV stores. Restricted execution time. Designs must accommodate these limitations.

The tradeoff is complexity for latency. Simple architectures with centralized backends are easier to build and debug. Edge architectures add deployment complexity and debugging challenges. The latency benefit must justify the complexity cost.

Edge Platforms and Services

Cloudflare Workers run JavaScript at 300+ edge locations. V8 isolates provide fast cold starts and efficient resource sharing. Workers handle billions of requests daily.

    // Cloudflare Worker example
    // validateToken is assumed to be defined elsewhere in the Worker.
    export default {
      async fetch(request, env) {
        const url = new URL(request.url);

        // Edge-side authentication check
        const token = request.headers.get('Authorization');
        if (!token || !(await validateToken(token, env.AUTH_SECRET))) {
          return new Response('Unauthorized', { status: 401 });
        }

        // Route to the appropriate backend, preserving the query string,
        // method, headers, and body of the incoming request
        const origin = url.pathname.startsWith('/api/')
          ? env.API_ORIGIN
          : env.STATIC_ORIGIN;
        return fetch(new Request(origin + url.pathname + url.search, request));
      }
    };

AWS offers two edge compute options. CloudFront Functions run lightweight JavaScript with sub-millisecond execution at CloudFront edge locations. Lambda@Edge runs more complex logic at regional edge caches.
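As a rough illustration of the kind of lightweight logic that suits CloudFront Functions, the sketch below redirects viewers by country. It assumes the `cloudfront-viewer-country` header is made available to the function for this distribution; the `/de` path prefix is hypothetical.

```javascript
// CloudFront Functions viewer-request handler (sketch).
// Assumes the cloudfront-viewer-country header is available to the function.
function handler(event) {
  var request = event.request;
  var countryHeader = request.headers['cloudfront-viewer-country'];

  // Send German viewers to a localized path (hypothetical /de prefix)
  if (countryHeader && countryHeader.value === 'DE' && request.uri.indexOf('/de/') !== 0) {
    return {
      statusCode: 302,
      statusDescription: 'Found',
      headers: { location: { value: '/de' + request.uri } },
    };
  }

  // Returning the request lets CloudFront continue processing it
  return request;
}
```

Returning a response object short-circuits the request at the edge, while returning the request passes it on unchanged.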

Vercel Edge Functions deploy globally by default. Next.js applications can specify edge runtime for individual routes. Seamless integration with the Vercel platform.

Deno Deploy runs TypeScript at the edge. Built on Deno runtime with modern JavaScript APIs. Strong developer experience for edge development.

Fastly Compute runs WebAssembly at edge locations. Language flexibility through Wasm compilation. Sub-millisecond cold starts and request handling.

Each platform has distinct capabilities. Execution time limits vary. Available APIs differ. Storage and state options vary significantly. Choose based on requirements.

Use Cases for Edge Computing

A/B testing and feature flags evaluate at the edge. Make experiment decisions close to users. Reduce latency of experiment assignment.

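    // A/B test at edge
    // hashUserId is assumed to return a non-negative integer.
    async function assignExperiment(request, variants) {
      const userId = request.headers.get('X-User-ID');

      // Without a stable ID there is nothing to hash; default to control
      if (!userId) {
        return variants.control;
      }

      const hash = await hashUserId(userId);
      const bucket = hash % 100;

      // Buckets 0-49 get control, 50-99 get treatment (50/50 split)
      return bucket < 50 ? variants.control : variants.treatment;
    }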
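The example above leaves `hashUserId` undefined. One possible sketch, assuming a fast non-cryptographic hash is acceptable for bucketing, uses 32-bit FNV-1a. The choice of FNV-1a is an assumption made here for illustration, not something the platform provides.

```javascript
// FNV-1a 32-bit hash of a string, returned as a non-negative integer.
function hashUserId(userId) {
  const bytes = new TextEncoder().encode(userId);
  let hash = 0x811c9dc5; // FNV offset basis
  for (const byte of bytes) {
    hash ^= byte;
    hash = Math.imul(hash, 0x01000193); // FNV prime, 32-bit multiply
  }
  return hash >>> 0; // force unsigned so hash % 100 is never negative
}

// The same ID always lands in the same bucket
console.log(hashUserId('user-123') % 100 === hashUserId('user-123') % 100); // true
```

Because the function is deterministic, a user sees the same variant on every request and at every edge location without any shared state. Hashing the experiment name together with the user ID would keep assignments independent across experiments.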

Geolocation-based routing happens instantly at edge. Route users to regional backends. Show location-specific content without backend round-trips.
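A minimal sketch of regional routing, assuming the platform exposes a continent code for each request (Cloudflare Workers provide one as `request.cf.continent`). All hostnames below are hypothetical.

```javascript
// Map a continent code to a regional backend (all hostnames are hypothetical).
const REGIONAL_ORIGINS = new Map([
  ['EU', 'https://eu.api.example.com'],
  ['AS', 'https://ap.api.example.com'],
  ['OC', 'https://ap.api.example.com'],
]);
const DEFAULT_ORIGIN = 'https://us.api.example.com';

function pickOrigin(continent) {
  return REGIONAL_ORIGINS.get(continent) ?? DEFAULT_ORIGIN;
}

// In a Cloudflare Worker the lookup might be wired up like this:
//   const origin = pickOrigin(request.cf?.continent);
//   return fetch(new Request(origin + url.pathname + url.search, request));
console.log(pickOrigin('EU')); // https://eu.api.example.com
console.log(pickOrigin(undefined)); // https://us.api.example.com
```

Falling back to a default origin matters because location data can be missing, for example in local development.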

Authentication and authorization at edge blocks unauthorized requests early. Validate JWTs at the edge. Reject invalid requests before they reach origin.

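Rate limiting at edge protects backends efficiently. Track request counts in edge storage and block excessive requests at the perimeter. Counts kept in an eventually consistent KV store are approximate; limits that must be exact need a strongly consistent store such as Durable Objects.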
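The counting logic itself can be sketched as a fixed-window limiter. This is an illustration under simplifying assumptions: the in-memory `Map` stands in for whatever edge store holds the counters, and the client identifier is a placeholder.

```javascript
// Fixed-window rate limiter. The Map stands in for an edge store;
// on a real platform this state would live in KV or a Durable Object.
function createRateLimiter({ limit, windowMs }) {
  const windows = new Map(); // clientId -> { windowStart, count }

  return function isAllowed(clientId, now = Date.now()) {
    const windowStart = Math.floor(now / windowMs) * windowMs;
    let entry = windows.get(clientId);

    // First request in a new window resets the counter
    if (!entry || entry.windowStart !== windowStart) {
      entry = { windowStart, count: 0 };
      windows.set(clientId, entry);
    }

    entry.count += 1;
    return entry.count <= limit;
  };
}

const isAllowed = createRateLimiter({ limit: 3, windowMs: 60_000 });
const results = [0, 1, 2, 3].map((i) => isAllowed('203.0.113.7', 1_000 + i));
console.log(results); // [ true, true, true, false ]
```

A fixed window is the simplest option but allows short bursts across a window boundary; sliding windows or token buckets smooth that out at the cost of more state.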

Image optimization and transformation runs at edge. Resize images based on device. Convert formats based on browser support. Cache results at edge locations.
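The decision logic behind this can be sketched in a few lines. The allowed widths and the JPEG fallback are assumptions chosen for illustration; the actual resizing would be done by the platform's image service or a separate library.

```javascript
// Pick the best image format the client advertises in its Accept header.
function pickImageFormat(acceptHeader) {
  const accept = (acceptHeader || '').toLowerCase();
  if (accept.includes('image/avif')) return 'avif';
  if (accept.includes('image/webp')) return 'webp';
  return 'jpeg'; // widely supported fallback
}

// Round the requested width up to the nearest allowed size so the cache
// holds a handful of variants instead of one per pixel width.
const ALLOWED_WIDTHS = [320, 640, 960, 1280, 1920];

function pickWidth(requestedWidth) {
  const largest = ALLOWED_WIDTHS[ALLOWED_WIDTHS.length - 1];
  return ALLOWED_WIDTHS.find((width) => width >= requestedWidth) ?? largest;
}

console.log(pickImageFormat('image/avif,image/webp,*/*')); // avif
console.log(pickWidth(700)); // 960
```

Including the chosen format and width in the cache key lets each variant be cached once per edge location.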

API response caching with edge logic enables smart caching. Cache based on complex rules. Invalidate selectively. Serve personalized cached responses.
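One way to express "complex rules" is a normalized cache key. The sketch below assumes responses vary only by a user's plan and country; those two dimensions and the ignored tracking parameters are hypothetical choices for illustration.

```javascript
// Build a normalized cache key from a URL plus the few request
// attributes that actually change the response.
const IGNORED_PARAMS = ['utm_source', 'utm_medium', 'utm_campaign'];

function buildCacheKey(rawUrl, { plan = 'free', country = 'XX' } = {}) {
  const url = new URL(rawUrl);
  for (const param of IGNORED_PARAMS) {
    url.searchParams.delete(param);
  }
  url.searchParams.sort(); // ?b=2&a=1 and ?a=1&b=2 share one entry
  return `${url.origin}${url.pathname}?${url.searchParams}|plan=${plan}|country=${country}`;
}

console.log(
  buildCacheKey('https://app.example.com/api/prices?b=2&utm_source=x&a=1', {
    plan: 'pro',
    country: 'DE',
  })
);
// https://app.example.com/api/prices?a=1&b=2|plan=pro|country=DE
```

Keying on a coarse segment such as plan, rather than on the individual user, keeps the hit ratio high while still serving differentiated responses.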

Bot detection and filtering at edge reduces origin load. Identify bot traffic patterns. Challenge suspicious requests. Block known malicious actors.

Edge Data and State Management

Edge KV storage provides eventually consistent key-value access.

Reads are fast from edge locations. Writes propagate globally with some delay.

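    // Cloudflare KV usage
    // SESSIONS is a KV namespace binding.
    export default {
      async fetch(request, env) {
        const key = request.headers.get('X-Session-ID');

        // A missing header would otherwise be passed to KV as a null key
        const session = key ? await env.SESSIONS.get(key, 'json') : null;
        if (!session) {
          return new Response('Session not found', { status: 401 });
        }

        return new Response(JSON.stringify(session), {
          headers: { 'Content-Type': 'application/json' },
        });
      }
    };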

Durable Objects provide strongly consistent edge storage. Coordination and state management in specific locations. Useful for collaborative features and session affinity.
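A minimal sketch of a Durable Object that keeps a counter, assuming the Cloudflare storage API (`state.storage.get` and `state.storage.put`). The class name is hypothetical; in a real Worker the class would be exported and bound in the Worker's configuration.

```javascript
// Sketch of a Durable Object that keeps a strongly consistent counter.
// The class name is hypothetical; a real Worker exports and binds it.
class Counter {
  constructor(state, env) {
    this.state = state;
  }

  async increment() {
    // All requests for one object ID reach the same instance,
    // so there is a single writer for this counter
    const current = (await this.state.storage.get('count')) ?? 0;
    const next = current + 1;
    await this.state.storage.put('count', next);
    return next;
  }

  async fetch(request) {
    const count = await this.increment();
    return new Response(JSON.stringify({ count }), {
      headers: { 'Content-Type': 'application/json' },
    });
  }
}
```

The tradeoff is locality: each object lives in one location, so callers far from it pay a network round trip in exchange for consistency.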

Read-through caching patterns work well at edge. Check edge cache first. Fetch from origin on miss. Cache response for future requests.
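The three steps can be sketched as follows. The `Map` stands in for an edge cache and `fetchFromOrigin` is a caller-supplied loader; both are assumptions for illustration rather than a specific platform API.

```javascript
// Read-through cache with a TTL. The Map stands in for an edge cache,
// and fetchFromOrigin is a caller-supplied async loader (hypothetical).
function createReadThroughCache(fetchFromOrigin, ttlMs) {
  const entries = new Map(); // key -> { value, expiresAt }

  return async function get(key, now = Date.now()) {
    const cached = entries.get(key);
    if (cached && cached.expiresAt > now) {
      return cached.value; // hit: no origin round trip
    }

    const value = await fetchFromOrigin(key); // miss: go to origin
    entries.set(key, { value, expiresAt: now + ttlMs });
    return value;
  };
}
```

The TTL bounds how stale an entry can get, which is the main knob for trading freshness against origin load.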

Write patterns require careful design. Eventually consistent edge stores can't guarantee strong consistency for writes. Queue writes to central systems. Accept eventual consistency.

    // Queue writes for eventual consistency
    async function handleWrite(request, env) {
      const data = await request.json();

      // Immediate edge storage for reads
      await env.KV.put(`user:${data.id}`, JSON.stringify(data));

      // Queue to central database
      await env.QUEUE.send({ type: 'USER_UPDATE', data });

      return new Response('OK');
    }

Session data at edge reduces origin calls. Store session in edge KV after authentication. Subsequent requests read from edge.

Prefetching and precomputing at edge anticipates needs. Warm caches during low-traffic periods. Compute personalization data in advance.

Edge Security Considerations

JWT validation at edge requires key management. Distribute public keys to edge locations. Handle key rotation gracefully.
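Graceful rotation usually means keeping the old and new public keys available side by side and choosing between them by the token's `kid` header. The sketch below shows only that selection step; the key map is hypothetical, and the signature must still be verified with the selected key.

```javascript
// Decode a base64url JWT segment into an object (assumes ASCII JSON).
function decodeSegment(segment) {
  const base64 = segment.replace(/-/g, '+').replace(/_/g, '/');
  const padded = base64.padEnd(Math.ceil(base64.length / 4) * 4, '=');
  return JSON.parse(atob(padded));
}

// Pick the verification key named by the token's `kid` header.
// keysById maps key IDs to public keys and should hold both the current
// and the previous key while a rotation is in progress.
// This only selects a key; the signature must still be verified with it.
function selectVerificationKey(token, keysById) {
  const parts = token.split('.');
  if (parts.length !== 3) return null;

  let header;
  try {
    header = decodeSegment(parts[0]);
  } catch {
    return null; // malformed header
  }
  return keysById.get(header.kid) ?? null;
}
```

Tokens signed with an unknown `kid` are rejected, so removing a key from the map is what finally retires it.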

HTTPS termination happens at edge. Certificates managed by edge platform. Origin connections are separate from user connections and can use a simpler certificate setup, but should still be encrypted.

DDoS protection is inherent at edge. Distributed edge absorbs attack traffic. Attack traffic stays far from origin.

WAF rules execute at edge. Block malicious patterns before reaching backend. Reduce origin exposure to attacks.

    // Simple WAF logic at edge
    function blockSuspiciousRequests(request) {
      // Decode percent-encoding so payloads like %3Cscript are also matched
      let url = request.url;
      try {
        url = decodeURIComponent(url);
      } catch {
        // Malformed encoding: fall back to the raw URL
      }

      const patterns = [
        /\.\.\//,         // Path traversal
        /<script/i,       // XSS attempt
        /union.*select/i, // SQL injection
      ];

      return patterns.some((pattern) => pattern.test(url));
    }

Secrets management at edge uses platform-specific approaches. Environment variables or secret stores. Never embed secrets in edge code.

Origin authentication ensures only edge makes origin requests. Shared secrets or signed requests verify edge origin.
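The shared-secret variant can be sketched as an origin-side check. The `X-Edge-Auth` header name is hypothetical, and the secret is assumed to come from the origin's own configuration.

```javascript
// Constant-time string comparison, so response timing does not leak
// how many leading characters of the secret were correct.
function constantTimeEqual(a, b) {
  const left = new TextEncoder().encode(a);
  const right = new TextEncoder().encode(b);
  const length = Math.max(left.length, right.length);
  let diff = left.length ^ right.length;
  for (let i = 0; i < length; i++) {
    diff |= (left[i] ?? 0) ^ (right[i] ?? 0);
  }
  return diff === 0;
}

// Origin-side check: accept a request only if the edge attached the secret.
// `headers` is any object with a get(name) method, such as a Headers instance.
// The X-Edge-Auth header name is hypothetical.
function isFromEdge(headers, expectedSecret) {
  const presented = headers.get('X-Edge-Auth') ?? '';
  return presented.length > 0 && constantTimeEqual(presented, expectedSecret);
}
```

A shared secret is simple but static; signed requests with a timestamp additionally protect against replay.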

Performance Measurement at the Edge

Edge latency differs from origin latency. Measure time-to-first-byte from user perspective. Edge latency should be dramatically lower.

Real User Monitoring captures actual edge performance. Lab tests from one location miss global variation. RUM shows performance across all edge locations.
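A minimal browser-side sketch using the Navigation Timing API. The `/rum` collector endpoint is hypothetical; the collector is assumed to attach the user's region from the request it receives.

```javascript
// Build a RUM payload from a PerformanceNavigationTiming-like entry.
function buildRumPayload(navEntry, page) {
  return {
    page,
    // Time from navigation start until the first response byte arrived
    ttfbMs: Math.round(navEntry.responseStart - navEntry.startTime),
    // Time between sending the request and the first response byte
    waitMs: Math.round(navEntry.responseStart - navEntry.requestStart),
  };
}

// Browser-only part: read the real entry and send it to a collector.
// The /rum endpoint is hypothetical.
function reportNavigationTiming() {
  const [navEntry] = performance.getEntriesByType('navigation');
  if (!navEntry) return;
  const payload = buildRumPayload(navEntry, location.pathname);
  navigator.sendBeacon('/rum', JSON.stringify(payload));
}

if (typeof window !== 'undefined') {
  window.addEventListener('load', reportNavigationTiming);
}
```

Grouping the collected `ttfbMs` values by region is what reveals the per-location differences that a single lab test cannot.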

Edge log analysis reveals performance patterns. Identify slow edge locations. Spot cache miss rates by region.

    // Log performance data
    // handleRequest is assumed to be defined elsewhere in the Worker.
    addEventListener('fetch', event => {
      const start = Date.now();

      event.respondWith(
        handleRequest(event.request).then(response => {
          const duration = Date.now() - start;

          console.log(JSON.stringify({
            path: new URL(event.request.url).pathname,
            duration,
            cacheStatus: response.headers.get('cf-cache-status'),
            location: event.request.cf?.colo
          }));

          return response;
        })
      );
    });

Cache hit ratios determine edge effectiveness. High cache hits mean edge serves most requests. Low hits mean excessive origin traffic.
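Given structured log records shaped like the ones produced by the logging example above (`location` and `cacheStatus` fields), the hit ratio per edge location can be computed with a short aggregation. The sample records are made up for illustration.

```javascript
// Compute cache hit ratio per edge location from structured log records
// shaped like { location, cacheStatus, ... }.
function hitRatioByLocation(records) {
  const totals = new Map(); // location -> { hits, total }

  for (const { location, cacheStatus } of records) {
    const entry = totals.get(location) ?? { hits: 0, total: 0 };
    entry.total += 1;
    if (cacheStatus === 'HIT') entry.hits += 1;
    totals.set(location, entry);
  }

  const ratios = {};
  for (const [location, { hits, total }] of totals) {
    ratios[location] = hits / total;
  }
  return ratios;
}

const sample = [
  { location: 'SYD', cacheStatus: 'HIT' },
  { location: 'SYD', cacheStatus: 'MISS' },
  { location: 'FRA', cacheStatus: 'HIT' },
];
console.log(hitRatioByLocation(sample)); // { SYD: 0.5, FRA: 1 }
```

Breaking the ratio down by location matters because a healthy global average can hide individual locations that rarely see a hit.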

Cold start frequency affects perceived performance. Track how often cold starts occur. Optimize for warm edge instances.

Origin latency still matters. Cache misses go to origin. Origin performance affects edge cache miss latency.

Architectural Patterns

Edge-first architecture maximizes edge computation. Handle as much as possible at edge. Origin serves only what edge cannot.

Tiered caching uses edge and origin caches. Edge cache for hot content. Origin cache for warm content. Database for cold content.

    User → Edge Cache → Origin Cache → Database
               ↓              ↓            ↓
           (fastest)        (fast)   (authoritative)
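The lookup order in the diagram can be sketched as a loop over tiers. The tier interface (`get` and `set` methods) is an assumption for illustration; each tier could wrap an edge cache, an origin cache, or the database.

```javascript
// Look a key up through an ordered list of tiers, fastest first.
// Each tier is any object with async get(key) and set(key, value);
// the last tier is treated as authoritative (for example, the database).
async function tieredGet(key, tiers) {
  for (let i = 0; i < tiers.length; i++) {
    const value = await tiers[i].get(key);
    if (value !== undefined) {
      // Backfill the faster tiers that missed so the next read stops earlier
      await Promise.all(tiers.slice(0, i).map((tier) => tier.set(key, value)));
      return { value, servedBy: i };
    }
  }
  return { value: undefined, servedBy: -1 };
}
```

The `servedBy` index is returned so that callers can log which tier answered, which feeds directly into per-tier hit ratio metrics.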

API gateway pattern at edge handles routing. Authenticate at edge. Route to appropriate backends. Transform requests and responses.
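The routing step of such a gateway can be sketched as a prefix table. The prefixes and backend hostnames are hypothetical.

```javascript
// Route table for an edge API gateway. Prefixes and backend URLs are hypothetical.
const ROUTES = [
  { prefix: '/api/billing/', backend: 'https://billing.internal.example.com' },
  { prefix: '/api/', backend: 'https://core.internal.example.com' },
];

// Return the backend URL for a path, or null if no route matches.
// The longest matching prefix wins, so order in ROUTES does not matter.
function resolveBackend(pathname, routes = ROUTES) {
  let best = null;
  for (const route of routes) {
    const matches = pathname.startsWith(route.prefix);
    if (matches && (!best || route.prefix.length > best.prefix.length)) {
      best = route;
    }
  }
  return best ? best.backend + pathname : null;
}

console.log(resolveBackend('/api/billing/invoices'));
// https://billing.internal.example.com/api/billing/invoices
console.log(resolveBackend('/health')); // null
```

Keeping the table as data rather than as a chain of `if` statements makes it possible to load routes from edge configuration without redeploying code.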

Static generation with edge personalization combines patterns. Pre-render pages at build time. Personalize at edge with user context.

Streaming responses from edge provide perceived performance. Start sending response immediately. Stream additional content as available.

Fallback patterns handle edge failures. If edge computation fails, fall back to origin. Maintain reliability despite edge complexity.
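A minimal sketch of that fallback, assuming both handlers are supplied by the caller and that a slow edge handler should be treated like a failed one. The 50 ms default timeout is an arbitrary illustration.

```javascript
// Run the edge handler, but fall back to the origin if it throws
// or takes longer than timeoutMs. Both handlers are caller-supplied.
async function withOriginFallback(edgeHandler, originHandler, request, timeoutMs = 50) {
  let timer;
  const timeout = new Promise((_, reject) => {
    timer = setTimeout(() => reject(new Error('edge timeout')), timeoutMs);
  });

  try {
    return await Promise.race([edgeHandler(request), timeout]);
  } catch (error) {
    // Log and degrade to the slower but more reliable path
    console.warn('edge failed, using origin:', error.message);
    return originHandler(request);
  } finally {
    clearTimeout(timer);
  }
}
```

Logging each fallback is important: a silent fallback keeps users happy but can hide an edge deployment that has stopped working.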

Conclusion

Edge computing transforms the latency equation for global SaaS. By pushing computation to the network's edge, you serve users from locations physically close to them rather than from a handful of distant data centers. The performance improvement can be dramatic: round trips of 200 ms can drop to 10 ms or less. But edge isn't a silver bullet.

It's a tool for specific workloads: stateless, cacheable, or security-related operations. The most effective architectures are edge-first but origin-aware: handle what you can at the edge, defer what you must to the center, and design for graceful fallback when edge isn't enough.

As edge platforms mature, with better storage, longer execution windows, and richer APIs, the boundary between edge and cloud continues to blur. The winners will be those who understand where edge adds value—and where it adds only complexity.

Frequently Asked Questions

When should I NOT use edge computing?

Avoid edge for:

- Strongly consistent writes (e.g., financial transactions, inventory updates) that require immediate global consistency.

- Long-running computations exceeding platform limits (caps range from roughly 10-50 ms of CPU time to 5-30 seconds of wall-clock time, depending on platform).

- Workloads requiring large local datasets—edge storage is limited and eventually consistent.

- Simple architectures where complexity cost outweighs latency benefit. If your users are regional, not global, centralized may be simpler.

How do I manage state across edge locations?

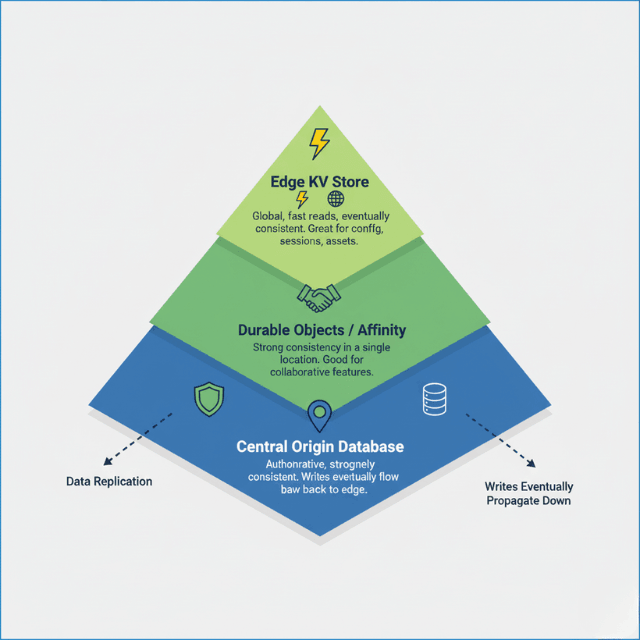

Use a layered approach:

- Edge KV for global, eventually consistent data (config, feature flags, session data with TTL).

- Durable Objects (Cloudflare) for strongly consistent coordination in specific locations.

- Origin database for authoritative state, with edge caching for reads.

- Write-behind patterns: Serve from edge cache immediately and write to origin asynchronously via queues, accepting eventual consistency.

How do I measure if edge is actually improving performance?

Implement Real User Monitoring (RUM) with location-aware analytics. Key metrics:

- Time-to-First-Byte (TTFB) by user region—compare edge vs. origin routes.

- Cache hit ratio at edge—high ratios indicate edge is serving requests effectively.

- Cold start frequency: how often a request lands on an edge instance that is not yet warm.

- Error rates at edge vs. origin—edge failures should trigger fallbacks, not user-facing errors.

A/B test edge vs. origin routing for a subset of users to quantify real-world impact.
