Building Modern Cloud-Native Applications

This guide covers the Twelve-Factor methodology, containerization, Kubernetes orchestration, and production-ready strategies that unlock the cloud's full potential.

Introduction

Cloud-native development builds applications designed specifically for cloud environments, not just deployed to them. Organizations adopting cloud-native technologies report 50-70% faster time-to-market and 44% better resource utilization.

Understanding Cloud-Native Principles

The Cloud Native Computing Foundation defines cloud-native as technologies that enable scalable applications in modern, dynamic environments. Five core characteristics define cloud-native applications.

Containerization packages applications with dependencies in portable units that run consistently across environments. Dynamic Orchestration automates container lifecycle management through platforms like Kubernetes. Microservices Architecture breaks applications into loosely coupled, independently deployable services. Declarative APIs define infrastructure and applications as version-controlled code. Automation eliminates manual operations, enabling self-healing and continuous deployment.

Cloud-native applications embrace immutability and stateless design. Containers are replaced rather than modified. Services expect failures and implement circuit breakers, retries, and graceful degradation. This approach transforms infrastructure from fixed assets into programmable resources that scale automatically based on demand.
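As a rough illustration of the failure-handling ideas above, the following sketch shows one way a circuit breaker and a fallback value could fit together. The class and function names (CircuitBreaker, call_with_fallback) and the thresholds are assumptions made for this example, not part of any particular library.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and calls are rejected immediately."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                raise CircuitOpenError("circuit open; failing fast")
            # Timeout elapsed: allow one trial call (half-open state).
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result


def call_with_fallback(breaker, func, fallback):
    """Graceful degradation: return a fallback value instead of an error."""
    try:
        return breaker.call(func)
    except Exception:
        return fallback
```

While the circuit is open, callers get the fallback immediately instead of waiting on a dependency that is known to be failing, which is the point of the pattern.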

The shift from cloud-enabled (lift-and-shift) to cloud-native is fundamental. Cloud-enabled applications simply move to cloud VMs with minimal changes. Cloud-native applications leverage platform services, auto-scaling, and microservices to achieve a level of resilience that is hard to reach in traditional architectures.

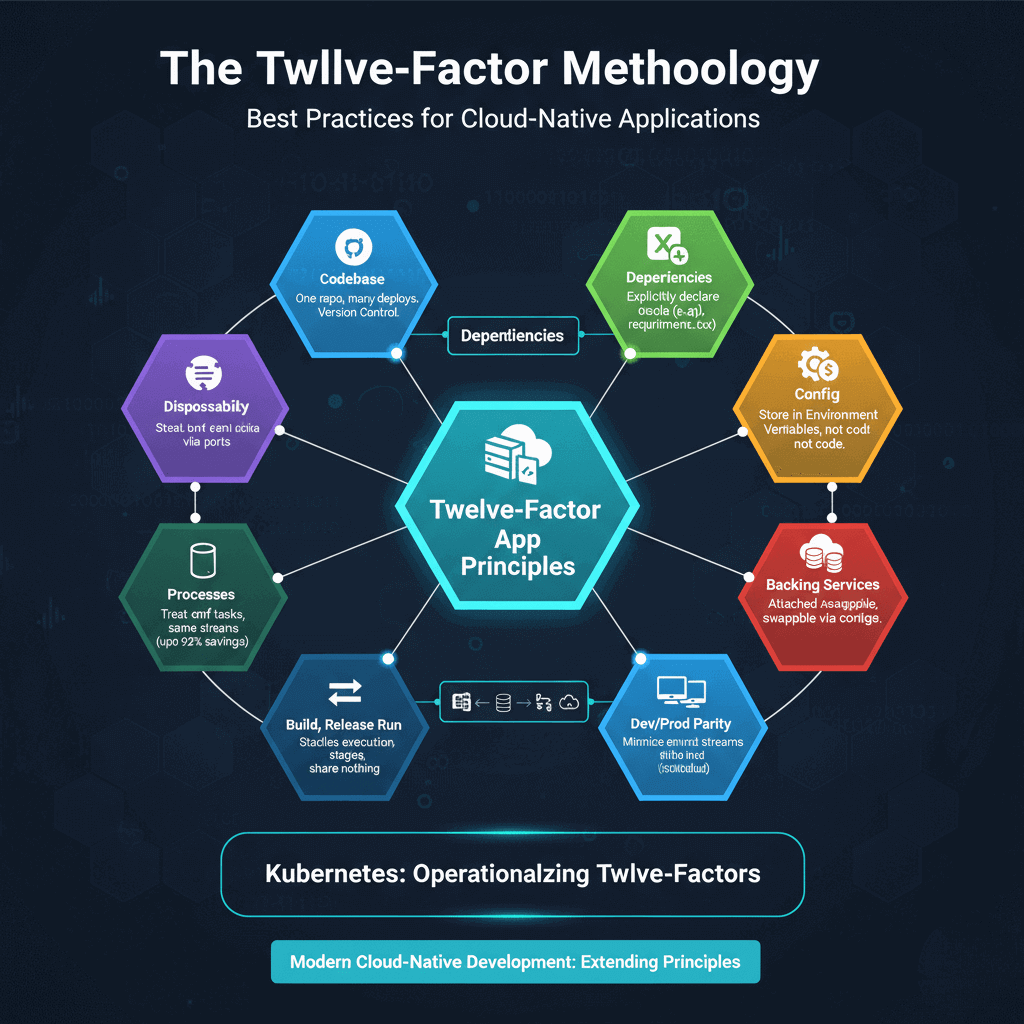

The Twelve-Factor Methodology

The Twelve-Factor App methodology defines best practices for cloud-native applications. Created by Heroku engineers, it addresses configuration management, dependency isolation, and operational scalability.

Critical factors include maintaining one codebase tracked in version control with many deploys, explicitly declaring dependencies in manifest files (requirements.txt, package.json), storing configuration in environment variables and never in code, and treating backing services as attached resources that can be swapped via configuration.
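The configuration factor can be sketched in a few lines. The variable names here (DATABASE_URL, CACHE_URL, DEBUG) and the default cache address are assumptions chosen for illustration; the idea is only that the same code reads different values in each deploy.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    database_url: str
    cache_url: str
    debug: bool


def load_settings(env=os.environ):
    """Read configuration from environment variables; fail fast if a required one is missing."""
    try:
        database_url = env["DATABASE_URL"]
    except KeyError:
        raise RuntimeError("DATABASE_URL must be set") from None
    return Settings(
        database_url=database_url,
        # Backing services are addressed by URL, so swapping one is a config change.
        cache_url=env.get("CACHE_URL", "redis://localhost:6379/0"),
        debug=env.get("DEBUG", "false").lower() in ("1", "true", "yes"),
    )
```

Passing the mapping as a parameter keeps the function easy to test, and failing at startup surfaces a missing setting before the process begins serving traffic.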

The build, release, run factor strictly separates three stages. The build stage converts code into an executable bundle. The release stage combines a build with environment-specific configuration. The run stage executes the release without any code changes.

Operational factors emphasize stateless processes that store nothing locally, port binding where applications export services via ports, and concurrency through horizontal scaling. Applications must start quickly and shut down gracefully. Dev/prod parity minimizes environment differences using the same databases and services everywhere.
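Graceful shutdown is the part of disposability that is easiest to get wrong. The sketch below assumes a simple polling worker and assumes the platform sends SIGTERM before stopping the process, as container orchestrators typically do; fetch_job and handle_job are hypothetical callables supplied by the application.

```python
import signal
import threading

shutdown_requested = threading.Event()


def request_shutdown(signum, frame):
    """Signal handler: record the request and let the main loop finish cleanly."""
    shutdown_requested.set()


def install_handlers():
    # Must be called from the main thread of the process.
    signal.signal(signal.SIGTERM, request_shutdown)
    signal.signal(signal.SIGINT, request_shutdown)


def run_worker(fetch_job, handle_job, poll_interval=1.0):
    """Process jobs until shutdown is requested; returns the number handled."""
    handled = 0
    while not shutdown_requested.is_set():
        job = fetch_job()
        if job is None:
            # Idle: wait, but wake immediately if shutdown is requested.
            shutdown_requested.wait(poll_interval)
            continue
        handle_job(job)  # a job that has started always completes before exit
        handled += 1
    return handled
```

The handler only sets a flag, so the job being processed finishes and no new job is picked up afterwards.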

The remaining factors cover logging and administration. Applications treat logs as event streams written to stdout and never manage log files themselves. Admin processes run as one-off tasks in the same environment using the same codebase.

Modern cloud-native development extends these principles with API-first design, comprehensive telemetry, and security by default. Kubernetes operationalizes Twelve-Factor principles through ConfigMaps, Secrets, horizontal pod autoscaling, and declarative deployments.

Container-First Development and Kubernetes

Containers solve environment consistency by bundling applications with dependencies into immutable images. Docker became the standard, with images built via Dockerfiles defining base images, dependency installation, and execution commands.

Best practices include multi-stage builds separating compilation from runtime, minimal base images using Alpine or distroless variants, and running as non-root users. Images should use specific version tags, not latest, and undergo vulnerability scanning before deployment.

Kubernetes has become the de facto orchestration platform, providing automated deployment, scaling, and self-healing. Core concepts include Pods (smallest deployable units), Deployments (managing replicas and updates), Services (network endpoints), and ConfigMaps/Secrets (configuration management).

Managed Kubernetes services simplify operations. Amazon EKS, Azure AKS, and Google GKE run the control plane for you while providing cloud-native integrations. These services reduce operational burden while maintaining Kubernetes portability.

Kubernetes benefits include declarative configuration where desired state is specified and controllers maintain it continuously, self-healing that restarts failed containers and reschedules pods from failed nodes, horizontal scaling based on metrics, and rolling updates enabling zero-downtime deployments. This orchestration transforms infrastructure management from manual processes into automated, self-maintaining systems.
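The declarative model is easier to picture as a loop that compares desired state with observed state and emits corrective actions. The following is a toy sketch of that idea in plain Python, not Kubernetes controller code; the reconcile function, the pod dictionary, and the action tuples are all assumptions made for illustration.

```python
def reconcile(desired_replicas, running_pods):
    """Return the actions needed to move the observed state toward the desired state.

    running_pods maps pod name -> True if healthy, False if failed.
    """
    actions = []
    healthy = sorted(name for name, ok in running_pods.items() if ok)
    failed = sorted(name for name, ok in running_pods.items() if not ok)

    # Self-healing: failed pods are replaced, never repaired in place.
    for name in failed:
        actions.append(("delete", name))

    diff = desired_replicas - len(healthy)
    if diff > 0:
        # Too few healthy pods: create the missing ones.
        actions.extend(("create", None) for _ in range(diff))
    elif diff < 0:
        # Too many: remove the surplus healthy pods.
        for name in healthy[diff:]:
            actions.append(("delete", name))
    return actions
```

For example, reconcile(3, {"a": True, "b": False}) asks to delete "b" and create two pods. Running the same function again once the state matches returns an empty list, which is what makes the loop safe to run continuously.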

Serverless and Event-Driven Architectures

Serverless computing removes infrastructure management, charging only for execution time. AWS Lambda, Azure Functions, and Google Cloud Functions auto-scale from zero to thousands of concurrent executions.

Ideal use cases include event-driven processing, API backends with variable traffic, scheduled tasks, and data transformation pipelines. Serverless excels for workloads with unpredictable patterns or long idle periods. Limitations include execution time limits (15 minutes for AWS Lambda), cold start latency, and stateless execution requiring external state management.

Event-driven architecture decouples producers from consumers through message queues and event streams. Services emit events about state changes without knowing which consumers process them. This pattern enables multiple consumers reacting independently to events, supporting complex workflows without tight coupling.
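A minimal in-memory sketch can make the decoupling concrete. EventBus here is a hypothetical stand-in for a real broker or queue, and the event name and handlers are invented for the example.

```python
from collections import defaultdict


class EventBus:
    """In-memory stand-in for a message broker (hypothetical, for illustration)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, event_type, handler):
        self._subscribers[event_type].append(handler)

    def publish(self, event_type, payload):
        # The producer does not know who, if anyone, is listening.
        for handler in self._subscribers[event_type]:
            handler(payload)


bus = EventBus()
invoices, shipments = [], []

# Two independent consumers react to the same event.
bus.subscribe("order.placed", lambda order: invoices.append(order["id"]))
bus.subscribe("order.placed", lambda order: shipments.append(order["id"]))

bus.publish("order.placed", {"id": 42})
```

Adding a third consumer requires no change to the code that publishes the event. A real broker would also add durability and asynchronous delivery, which this sketch leaves out.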

Modern event streaming platforms like Apache Kafka and Amazon Kinesis provide durable, ordered event logs that enable real-time processing and historical replay. Event-driven patterns include Event Notification for state changes, Event-Carried State Transfer where events contain complete state, and Event Sourcing, which stores all state changes as an immutable sequence of events.
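Event Sourcing is the least intuitive of these patterns, so a small sketch may help. It assumes a simplified account with two invented event kinds; current state is never stored directly and is instead derived by replaying the log.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    kind: str    # "deposited" or "withdrawn" (hypothetical event kinds)
    amount: int


def apply(balance, event):
    """Pure function: previous state + one event -> next state."""
    if event.kind == "deposited":
        return balance + event.amount
    if event.kind == "withdrawn":
        return balance - event.amount
    raise ValueError(f"unknown event kind: {event.kind}")


def replay(events, initial=0):
    """Rebuild state by folding the event log from the beginning."""
    balance = initial
    for event in events:
        balance = apply(balance, event)
    return balance


log = [Event("deposited", 100), Event("withdrawn", 30), Event("deposited", 5)]
current = replay(log)          # state as of now
earlier = replay(log[:2])      # state as of an earlier point in history
```

Because events are immutable and ordered, replaying a prefix of the log reconstructs any past state, which is the historical replay described above.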

Combining serverless with event-driven architecture creates highly scalable, cost-effective systems. Lambda functions triggered by queue messages or event streams process workloads elastically, paying only for actual consumption while automatically handling traffic spikes.
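As a sketch of that combination, the handler below processes a batch of queue messages in the shape AWS Lambda receives from Amazon SQS. It assumes the event source mapping has partial batch responses enabled, so that only the failed messages are retried; process_order and the order_id field are hypothetical.

```python
import json


def process_order(order):
    """Hypothetical business logic; raises on invalid input."""
    if "order_id" not in order:
        raise ValueError("missing order_id")


def handler(event, context):
    """Process a batch of queue messages and report which ones failed."""
    failures = []
    for record in event.get("Records", []):
        try:
            process_order(json.loads(record["body"]))
        except Exception:
            # Report only this message as failed so the rest of the batch is not retried.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

The function holds no state between invocations, so the platform can run as many copies in parallel as the queue depth requires.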

Cloud-Native Observability

Cloud-native applications require comprehensive observability across distributed services. The three pillars—metrics, logs, and traces—provide complementary visibility.

Metrics quantify system behavior through time-series data. Prometheus has become the standard for cloud-native metrics, using a pull model to scrape endpoints exposed by applications. Kubernetes service discovery automatically finds targets. Key metrics include request rates, error rates, and latency distributions following the RED method.
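To show what the RED method measures, here is a plain-Python sketch that summarizes raw request samples. It is not how Prometheus computes these values (Prometheus works from counters and histograms at query time); the red_summary function and the sample format are assumptions for illustration.

```python
def red_summary(requests, window_seconds):
    """Summarize (status_code, duration_seconds) samples using the RED method."""
    if not requests:
        return {"rate": 0.0, "error_ratio": 0.0, "p95_duration": None}
    durations = sorted(duration for _, duration in requests)
    errors = sum(1 for status, _ in requests if status >= 500)
    # Nearest-rank 95th percentile, using integer math for ceil(0.95 * n).
    rank = (95 * len(durations) + 99) // 100
    return {
        "rate": len(requests) / window_seconds,       # Rate: requests per second
        "error_ratio": errors / len(requests),        # Errors: share of failed requests
        "p95_duration": durations[rank - 1],          # Duration: tail latency
    }
```

Reporting a percentile rather than an average matters because a small number of slow requests can hide behind a healthy-looking mean.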

Logs provide detailed event records. Structured logging using JSON enables efficient querying and correlation. Centralized aggregation via Fluentd or Loki collects logs from distributed containers, enriching them with Kubernetes metadata. Including trace IDs in log entries enables correlating logs with specific distributed transactions.
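A structured log line with a trace ID might be produced as in the sketch below, which uses only Python's standard logging module. The field names (timestamp, level, logger, message, trace_id) are assumptions; real deployments usually follow whatever schema their log backend expects.

```python
import json
import logging
import sys
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record):
        entry = {
            "timestamp": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
            # trace_id is supplied by the caller through the `extra` argument.
            "trace_id": getattr(record, "trace_id", None),
        }
        return json.dumps(entry)


def build_logger(name="app", stream=sys.stdout):
    """Create a logger that writes JSON lines to stdout (or another stream)."""
    logger = logging.getLogger(name)
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.handlers = [handler]
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger
```

A call such as build_logger().info("order placed", extra={"trace_id": "abc123"}) then emits a single JSON line that an aggregator can index and join against traces by trace_id.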

Distributed tracing tracks requests as they flow through microservices. Each trace receives a unique ID that is propagated across service calls. OpenTelemetry provides vendor-neutral instrumentation, and Jaeger or Tempo visualize complete request paths. Tracing reveals performance bottlenecks, service dependencies, and error propagation that are hard to understand from metrics alone.
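Trace ID propagation can be sketched with the W3C Trace Context traceparent header, which has the form version-traceid-parentid-flags. In practice an OpenTelemetry SDK handles this; the hand-rolled outgoing_headers function below is an illustration only, and it assumes header names arrive in lowercase.

```python
import re
import secrets

# version "00", 32-hex trace ID, 16-hex parent span ID, 2-hex flags
TRACEPARENT = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def new_span_id():
    return secrets.token_hex(8)  # 16 hex characters


def outgoing_headers(incoming_headers):
    """Continue the caller's trace if one exists, otherwise start a new one."""
    match = TRACEPARENT.match(incoming_headers.get("traceparent", ""))
    if match:
        trace_id, flags = match.group(1), match.group(3)
    else:
        trace_id, flags = secrets.token_hex(16), "01"  # 01 = sampled
    # The trace ID is preserved; each hop gets a fresh span ID.
    return {"traceparent": f"00-{trace_id}-{new_span_id()}-{flags}"}
```

Every service that forwards these headers on its own outbound calls contributes spans to the same trace, which is what lets a backend reassemble the full request path.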

OpenTelemetry has emerged as the standard for instrumentation, providing consistent APIs across languages and enabling sending data to any compatible backend. This vendor neutrality prevents lock-in while future-proofing observability implementations.

Deployment Strategies and GitOps

Progressive delivery minimizes deployment risk through gradual rollouts. Canary deployments route small traffic percentages to new versions, monitoring metrics before full rollout. Blue-green deployments maintain two complete environments, switching traffic atomically and enabling instant rollback.
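The canary idea reduces to two decisions: which requests go to the new version, and whether the new version is healthy enough to promote. The sketch below is a simplified illustration; real rollouts usually delegate traffic splitting to an ingress controller or service mesh, and the function names, hashing scheme, and tolerance value are assumptions.

```python
import hashlib


def choose_version(user_id, canary_percent):
    """Deterministically route a fixed share of users to the canary."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # stable bucket in 0..99
    return "canary" if bucket < canary_percent else "stable"


def should_promote(canary_error_ratio, stable_error_ratio, tolerance=0.01):
    """Promote only if the canary is not meaningfully worse than stable."""
    return canary_error_ratio <= stable_error_ratio + tolerance
```

Hashing the user ID keeps each user on the same version for the whole rollout, so raising canary_percent only moves additional users over and never flips existing ones back and forth.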

GitOps treats Git repositories as the single source of truth for both application and infrastructure configuration. Tools like Argo CD and Flux continuously monitor Git repos and automatically synchronize cluster state. Changes go through pull requests with reviews and automated testing before merging.

GitOps benefits include complete audit trails through Git history, easy rollback by reverting commits, and configuration drift detection with automatic remediation. Deployment agents run inside clusters pulling approved changes, improving security by eliminating external access to production environments.

Infrastructure as Code using Terraform, Pulumi, or Crossplane provisions cloud resources through declarative configurations. IaC pipelines preview changes before applying them, enabling teams to understand infrastructure impacts before deployment. Combining IaC with GitOps creates end-to-end automation from cloud resources to application deployment.

Conclusion

Cloud-native development transforms how organizations build and operate software. The Twelve-Factor methodology, containerization, Kubernetes orchestration, serverless patterns, and comprehensive observability create systems that are scalable, resilient, and cost-effective.

Success requires embracing automation, treating infrastructure as code, and designing for failure from the start. Organizations implementing cloud-native practices achieve faster delivery, better resource efficiency, and elastic scale. Start with containerization, adopt Twelve-Factor principles, implement observability, and progressively add capabilities as expertise grows.

The future of software is cloud-native. Companies adopting these practices gain competitive advantages through operational excellence that directly impacts business outcomes.