Reduce AWS Machine Learning Costs by 70%

Optimize AWS AI/ML costs with proven strategies for training and inference. Reduce machine learning expenses by 40-70% while maintaining performance and scalability.

Introduction

AWS machine learning costs can spiral quickly without strategic management. Organizations waste 30-40% of their ML spending on overprovisioned instances, idle endpoints, and inefficient workflows. Unlike traditional applications, machine learning workloads present unique cost challenges with compute-intensive training jobs and unpredictable inference demand.

This guide reveals proven strategies to reduce AWS machine learning costs by 40-70% through right-sizing instances, leveraging Spot pricing, optimizing storage, and implementing FinOps practices. Whether you're running large-scale model training or deploying production inference endpoints, these strategies will transform your ML economics.

Understanding AWS ML Cost Drivers

Training costs represent the most significant expense in AI workloads. GPU-accelerated instances like P4, P5, and Trainium Trn1n require substantial investment. A single large language model training run can consume thousands of GPU-hours, translating to tens of thousands of dollars. The iterative nature of ML development compounds this expense as data scientists run hundreds of experiments.

Inference costs often catch organizations off guard. Production ML systems serve predictions continuously, and poorly optimized inference infrastructure can exceed training costs over time. SageMaker endpoints running 24/7 accumulate charges whether they're serving predictions or sitting idle. An ml.m5.xlarge endpoint costs $0.269 per hour or about $193 monthly—multiply this across dozens of endpoints and costs balloon quickly.

Data storage and transfer costs accumulate through S3 storage for training datasets, retrieval fees, and transfer charges. Training datasets for computer vision or NLP can consume terabytes of S3 storage. While S3 storage seems inexpensive at $0.023 per GB monthly, 100 TB costs $2,300 monthly. Cross-region data transfer costs $0.02 per GB, and egress to the internet reaches $0.09 per GB.

AWS managed services like SageMaker add approximately 20-30% to underlying EC2 instance costs for convenience and productivity benefits. While worthwhile for small teams, at scale organizations can save significantly by migrating to self-managed solutions.

Optimizing Training Costs

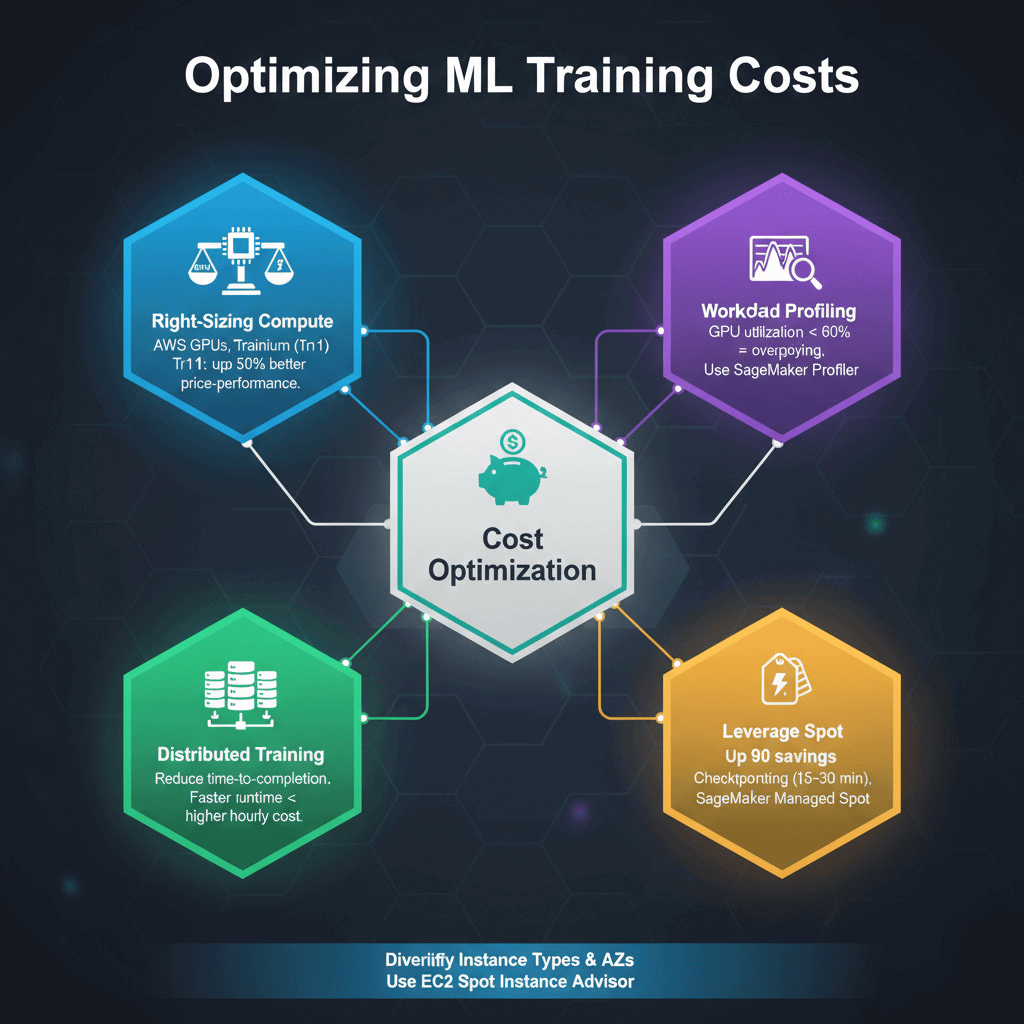

Right-sizing compute resources forms the foundation of training optimization. AWS offers GPU instances (P4, P5, G5), Trainium chips (Trn1), and general-purpose instances. The price-performance ratio varies dramatically. Trn1 instances offer up to 50% better price-performance than comparable GPU instances for certain workloads.

Profiling training workloads reveals whether you're compute-bound, memory-bound, or I/O-bound. Many models don't require the most expensive instances. Use SageMaker Profiler to identify bottlenecks. If GPU utilization hovers below 60%, you're overpaying for unused capacity.

Distributed training can paradoxically reduce costs by decreasing time-to-completion. While using four instances quadruples hourly costs, if distributed training cuts runtime by 75%, you achieve the same results at lower total cost.

Spot Instances offer the most dramatic savings for ML training—up to 90% discounts compared to on-demand pricing. SageMaker Managed Spot Training automates checkpointing so interrupted jobs resume rather than restart. Most training jobs complete successfully even with interruptions, achieving 60-90% cost savings.

Diversify across multiple instance types and availability zones to minimize interruption impact. Use EC2 Spot Instance Advisor to identify instance types with low interruption rates. Implement robust checkpointing every 15-30 minutes to minimize progress loss.

Reducing Inference Costs

Inference workloads have different requirements than training, enabling use of specialized, cost-optimized instances. AWS Inferentia-based Inf1 and Inf2 instances deliver up to 70% lower cost per inference compared to GPU instances. Use SageMaker Neo to compile models for Inferentia.

For GPU-based inference, G5 instances provide better price-performance than training-optimized P4/P5 instances. Profiling reveals that inference typically requires lower compute than training. An ml.g4dn.xlarge at $0.736/hour often suffices where teams deployed ml.p3.2xlarge at $3.06/hour—a 75% cost reduction.

SageMaker Serverless Inference transforms cost structure for sporadic traffic. You're charged per millisecond of compute time used instead of paying for always-on capacity. For applications with irregular traffic, serverless inference can reduce costs by 90% compared to dedicated endpoints.

Auto-scaling prevents paying for idle capacity during traffic troughs. Configure target tracking policies based on invocations per instance or model latency. Set aggressive scale-in cooldown periods to quickly reduce capacity when traffic drops.

Multi-model endpoints (MMEs) serve multiple models on shared infrastructure. Instead of separate endpoints for each model, MMEs load models dynamically from S3 as needed. For 100 models needing ml.m5.large endpoints at $98.67/month each, traditional deployment costs $9,867 monthly. With MMEs sharing 2-3 endpoints, costs drop to $200-300 monthly—a 97% savings.

Storage and Data Optimization

S3 offers multiple storage tiers with dramatically different pricing. S3 Intelligent-Tiering automatically moves objects between access tiers based on usage patterns, ideal for ML datasets with changing access frequency.

For archival data, S3 Glacier Instant Retrieval offers 68% cost savings ($0.004/GB/month) with millisecond retrieval. Implement lifecycle policies to automatically transition data through storage tiers as it ages.

Convert datasets to compressed columnar formats like Apache Parquet or ORC, reducing storage costs by 50-80% and accelerating data loading. For image datasets, consider WebP or JPEG with appropriate quality settings.

EBS volumes used for training jobs continue accumulating charges after jobs complete if not cleaned up. Implement automation to delete EBS volumes when training jobs finish. Use lifecycle tags to identify orphaned volumes.

Minimize data transfer between regions and availability zones. Ensure training instances, S3 buckets, and data sources reside in the same region to eliminate cross-region transfer charges.

Model Optimization Techniques

Model size directly correlates with inference costs through memory requirements and compute intensity. Quantization reduces model precision from FP32 to FP16 or INT8, cutting model size by 50-75% with minimal accuracy loss. SageMaker Neo automates quantization and optimization.

Model pruning removes redundant parameters, creating smaller models that maintain accuracy while reducing computational requirements. Distillation transfers knowledge from large teacher models to smaller student models, achieving similar performance with 5-10x fewer parameters.

Implement prediction caching for use cases with repeat queries. Using ElastiCache to store recent predictions eliminates redundant inference calls, reducing costs by 30-70% for applications with high query repetition.

Leveraging AWS Pricing Models

AWS Savings Plans offer significant discounts (up to 72% for 3-year commitments) in exchange for consistent usage. SageMaker Savings Plans specifically target SageMaker instance usage. Analyze 3-6 months of historical usage to identify baseline capacity suitable for commitments.

Start with conservative commitments covering 40-60% of average usage to capture savings while maintaining flexibility. The flexibility of Compute Savings Plans makes them attractive—they apply across instance families, sizes, regions, and operating systems.

Reserved Instances provide deeper discounts (up to 75% for 3-year standard RIs) but with less flexibility. RIs are suitable for highly stable, long-running workloads like production inference endpoints that won't change instance types.

Monitoring and Governance

AWS Cost Explorer provides detailed visibility into spending patterns. Implement tagging strategies across ML resources with project, team, environment, and model tags. Cost allocation tags enable filtering and grouping expenses.

AWS Budgets enables proactive cost management through customizable alerts. Create budgets for overall ML spending and configure alerts at 50%, 75%, and 100% thresholds. Budget actions can automatically stop non-critical endpoints when spending thresholds are exceeded.

AWS Cost Anomaly Detection uses machine learning to identify unusual spending patterns. It learns normal spending behaviors and alerts you to anomalies like sudden cost increases.

Regular resource audits identify waste. AWS Trusted Advisor automatically scans for idle resources, including underutilized SageMaker endpoints and unattached EBS volumes. Many organizations discover 15-30% cost reduction potential from eliminating forgotten resources.

Implementing FinOps for ML

FinOps brings engineering, finance, and operations teams together for data-driven spending decisions. Establish cost ownership by assigning monthly budgets to engineering teams. When data scientists see costs of their experiments, behavior changes naturally.

Track KPIs that balance model performance and cost: cost per training job, cost per 1000 inferences, and accuracy per dollar spent. A model with 98% accuracy at $10,000/month may be less valuable than a 96% accurate model at $2,000/month.

Implement cost estimation and approval workflows for expensive experiments. Before launching large-scale training jobs, require cost estimates for jobs exceeding thresholds. Use AWS Pricing Calculator or historical data.

Develop cost-efficient experiment strategies through staged model development. Start with small dataset samples and cheap instances for rapid prototyping. Only scale to full datasets and expensive instances after validating approaches.

Advanced Optimization Strategies

Lambda offers compelling alternatives for lightweight inference workloads with pay-per-request pricing. For models under deployment package size limits, Lambda costs pennies per million requests. A function with 1GB memory executing for 200ms costs $0.0000033 per invocation.

Edge inference using AWS IoT Greengrass or SageMaker Edge deploys models to edge devices, reducing cloud inference costs to zero for processed requests. Hybrid architectures use local inference for simple predictions and cloud inference for complex scenarios.

AutoML and model compression through SageMaker Autopilot discover efficient models that balance accuracy and computational efficiency. Autopilot explores architectures, finding solutions that require 30-60% less compute than manually designed alternatives.

Conclusion

Optimizing AWS machine learning costs requires systematic attention to training optimization, inference right-sizing, storage management, and FinOps practices. Organizations typically achieve 40-70% cost reductions through the strategies outlined in this guide. Start with high-impact changes like using Spot Instances for training, right-sizing inference endpoints, and implementing S3 lifecycle policies. These quick wins demonstrate value and build momentum for advanced optimizations like multi-model endpoints and serverless inference. Remember that cost optimization is not about minimizing spending at all costs—it's about maximizing business value per dollar invested. By implementing monitoring, governance, and continuous improvement practices, you'll build ML systems that scale economically with your business, transforming cost management from a reactive fire drill into a proactive competitive advantage.

Frequently Asked Questions

How much can I realistically save on AWS machine learning costs?

Organizations typically achieve 40-70% cost reductions through systematic optimization. Quick wins like eliminating idle endpoints and using Spot Instances for training often yield 30-40% savings within the first month. Comprehensive optimization including inference optimization, storage lifecycle management, and pricing model selection can reach 70-80% total cost reduction. The key is addressing multiple cost drivers—training, inference, storage, and data transfer optimization compound for maximum impact.

Should I use SageMaker or self-managed EC2 instances for cost efficiency?

For small-to-medium workloads under $10,000-20,000 monthly, SageMaker's productivity benefits typically justify the 20-30% managed service premium. The integrated tooling and reduced operational burden save engineering time. At larger scale over $50,000 monthly, self-managed solutions on EC2 or EKS can justify the operational complexity through significant savings. However, factor in engineering time required to build and maintain custom ML infrastructure—these opportunity costs often exceed the savings.

What are the best AWS instances for cost-effective machine learning inference?

For deep learning inference, AWS Inferentia-based Inf1 and Inf2 instances offer the best price-performance, delivering up to 70% lower cost per inference than GPU alternatives. Use SageMaker Neo to compile models for Inferentia. For GPU-based inference, G5 instances balance cost and performance. For simpler models, CPU-based instances like M6g (Graviton) or M5 often suffice at a fraction of GPU costs. The best choice depends on model architecture, latency requirements, and throughput needs.

How do Spot Instances work for ML training and what are the risks?

Spot Instances provide spare AWS capacity at 60-90% discounts with the trade-off that they can be interrupted with two-minute warning. For ML training, this is excellent when using proper checkpointing. SageMaker Managed Spot Training automates checkpoint management—interrupted jobs resume from the last checkpoint. The main risk is job delays when Spot capacity is limited, but most training jobs complete successfully. For time-sensitive training, combine Spot with on-demand fallback.

What cost optimization should I prioritize first for AWS machine learning?

Start with identifying and eliminating waste: idle SageMaker endpoints, orphaned EBS volumes, and unnecessary S3 storage. Use AWS Trusted Advisor to find these opportunities. Next, implement Spot Instances for training workloads—this often represents 40-60% of ML compute costs and is straightforward to optimize. Third, optimize inference endpoints through right-sizing, auto-scaling, or serverless alternatives. These three areas typically represent 70-80% of optimization potential and can be addressed in the first 30-60 days.