OCI vs AWS vs Azure: Real Cost Comparison

Compare Oracle Cloud, AWS, and Azure costs for LLM deployments. Detailed analysis shows OCI saving 20-58% on A100 and H100 GPUs, plus scenarios where AWS delivers better value.

TL;DR

- OCI H100 instances cost 58% less than AWS and 57% less than Azure per GPU-hour

- 10TB free monthly egress versus $0.09/GB on AWS saves $900/month for high-traffic APIs

- AWS wins for A10-class GPUs at 33% lower cost than OCI

- Large 8-GPU deployments on OCI run $18,337/month versus $29,533 on AWS

Compare Oracle Cloud Infrastructure costs against AWS and Azure for LLM deployments. This analysis shows where OCI delivers savings on GPUs (20-58% on A100 and H100), block storage (68-79%), and egress (10TB free per month), and identifies workload patterns where AWS or Azure provide better value.

This comparison uses apples-to-apples configurations wherever the providers offer them: identical GPU types, US East regions, and January 2025 on-demand pricing. Learn which provider offers the best value for different model sizes, when to choose each platform, and which hidden costs affect total cost of ownership beyond headline instance pricing.

Why Cloud Cost Matters for LLM Deployments

LLM inference costs accumulate fast at production scale. A single A100 GPU runs 24/7 for $2,000-3,000 monthly. Scale to 10 GPUs and you're spending $20,000-30,000 per month. Over 12 months, that's $240,000-360,000 just for compute.

Small pricing differences compound quickly. A 20% cost advantage saves $48,000 annually on a 10-GPU deployment. A 50% advantage saves $120,000. These savings fund additional infrastructure, engineering talent, or model improvements.

Cloud providers use different pricing strategies. AWS optimizes for flexibility with per-second billing. Azure bundles services with enterprise agreements. Oracle focuses on price-performance leadership for compute-intensive workloads.

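This analysis compares apples-to-apples configurations wherever possible: same GPU type, same region (US East), same pricing model. Prices are January 2025 on-demand rates without enterprise discounts. Reserved and commit rates are listed alongside for reference, but the cost-of-ownership examples use on-demand pricing.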

GPU Instance Pricing Comparison

Direct GPU cost comparison across clouds.

A10 GPU Instances (24GB VRAM)

Oracle Cloud (VM.GPU.A10.1):

- Configuration: 1x A10, 15 vCPU, 240GB RAM

- On-demand: $1.50/hour ($1,095/month)

- Monthly commit: $1.35/hour ($986/month)

AWS (g5.xlarge):

- Configuration: 1x A10G 24GB, 4 vCPU, 16GB RAM

- On-demand: $1.006/hour ($734/month)

- 1-year reserved: $0.605/hour ($442/month)

Azure (NC6s_v3):

- Configuration: 1x V100 16GB (a previous-generation GPU, not an A10), 6 vCPU, 112GB RAM

- On-demand: $3.06/hour ($2,234/month)

- 1-year reserved: $1.938/hour ($1,415/month)

Winner: AWS - 33% cheaper than OCI, 67% cheaper than Azure for A10-class GPUs.

Note: g5.xlarge includes far less CPU and RAM (4 vCPU and 16GB versus 15 vCPU and 240GB on OCI). OCI bundles more host resources, but at a higher total cost.
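The monthly figures in these listings match the hourly rate multiplied by 730 hours (8,760 hours per year divided by 12), the usual convention for 24/7 usage. A minimal sketch, assuming that convention, reproduces the A10 numbers and the percentage comparison:

```python
HOURS_PER_MONTH = 730  # 24/7 usage: 8,760 hours per year / 12


def monthly_cost(hourly_rate: float) -> float:
    """Convert an hourly on-demand rate to a 24/7 monthly cost."""
    return hourly_rate * HOURS_PER_MONTH


def percent_cheaper(cheaper: float, pricier: float) -> float:
    """How much lower `cheaper` is than `pricier`, as a percentage of `pricier`."""
    return (pricier - cheaper) / pricier * 100


# A10-class hourly on-demand rates from the listings above
rates = {"OCI VM.GPU.A10.1": 1.50, "AWS g5.xlarge": 1.006, "Azure NC6s_v3": 3.06}

for name, rate in rates.items():
    print(f"{name}: ${monthly_cost(rate):,.0f}/month")

aws = rates["AWS g5.xlarge"]
print(f"AWS vs OCI: {percent_cheaper(aws, rates['OCI VM.GPU.A10.1']):.0f}% cheaper")
print(f"AWS vs Azure: {percent_cheaper(aws, rates['Azure NC6s_v3']):.0f}% cheaper")
```

`percent_cheaper` measures the gap relative to the more expensive option, which is how the percentages in this article are stated.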

A100 GPU Instances (40GB VRAM)

Oracle Cloud (VM.GPU.A100.1):

- Configuration: 1x A100 40GB, 15 vCPU, 240GB RAM

- On-demand: $2.95/hour ($2,154/month)

- Monthly commit: $2.655/hour ($1,938/month)

AWS (p4d.24xlarge):

- Configuration: 8x A100 40GB, 96 vCPU, 1,152GB RAM

- On-demand: $32.77/hour ($23,923/month)

- Per GPU: $4.10/hour ($2,990/month)

- 1-year reserved: $19.68/hour ($14,365/month total)

Azure (NC24ads_A100_v4):

- Configuration: 1x A100 80GB, 24 vCPU, 220GB RAM

- On-demand: $3.673/hour ($2,681/month)

- 1-year reserved: $2.394/hour ($1,748/month)

Winner: OCI - 20% cheaper than Azure and 28% cheaper per GPU than AWS for single A100 instances, although the Azure price includes an 80GB A100 rather than a 40GB one.

Important: AWS doesn't offer single-A100 instances. You must rent 8x A100 minimum, making it expensive for small deployments but cost-effective at scale with reserved instances.
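Because AWS sells A100s only in 8-GPU p4d.24xlarge instances, per-GPU rates are the fair price comparison, but the minimum monthly bill differs sharply. A short sketch using the on-demand rates above (the `Instance` class is a hypothetical helper, not a provider API):

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # 24/7 usage: 8,760 hours / 12


@dataclass
class Instance:
    name: str
    hourly_rate: float  # on-demand price for the whole instance, USD
    gpus: int

    @property
    def per_gpu_hour(self) -> float:
        return self.hourly_rate / self.gpus

    @property
    def min_monthly_bill(self) -> float:
        # Every GPU in the instance is billed, whether or not your workload uses it
        return self.hourly_rate * HOURS_PER_MONTH


a100_options = [
    Instance("OCI VM.GPU.A100.1", 2.95, 1),
    Instance("AWS p4d.24xlarge", 32.77, 8),
    Instance("Azure NC24ads_A100_v4", 3.673, 1),
]

for inst in sorted(a100_options, key=lambda i: i.per_gpu_hour):
    print(f"{inst.name}: ${inst.per_gpu_hour:.2f}/GPU-hour, "
          f"minimum ${inst.min_monthly_bill:,.0f}/month")
```

Sorting by per-GPU rate puts OCI first, while the minimum-bill column shows why a small AWS deployment still pays for all eight GPUs.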

H100 GPU Instances (80GB VRAM)

Oracle Cloud (BM.GPU.H100.8):

- Configuration: 8x H100 80GB, 112 vCPU, 2TB RAM

- On-demand: $40.96/hour ($29,901/month)

- Per GPU: $5.12/hour ($3,738/month)

AWS (p5.48xlarge):

- Configuration: 8x H100 80GB, 192 vCPU, 2TB RAM

- On-demand: $98.32/hour ($71,774/month)

- Per GPU: $12.29/hour ($8,972/month)

- 1-year reserved: $57.08/hour ($41,668/month)

Azure (ND96isr_H100_v5):

- Configuration: 8x H100 80GB, 96 vCPU, 1.9TB RAM

- On-demand: $95.40/hour ($69,642/month)

- Per GPU: $11.93/hour ($8,705/month)

Winner: OCI - 58% cheaper than AWS, 57% cheaper than Azure for H100 instances.

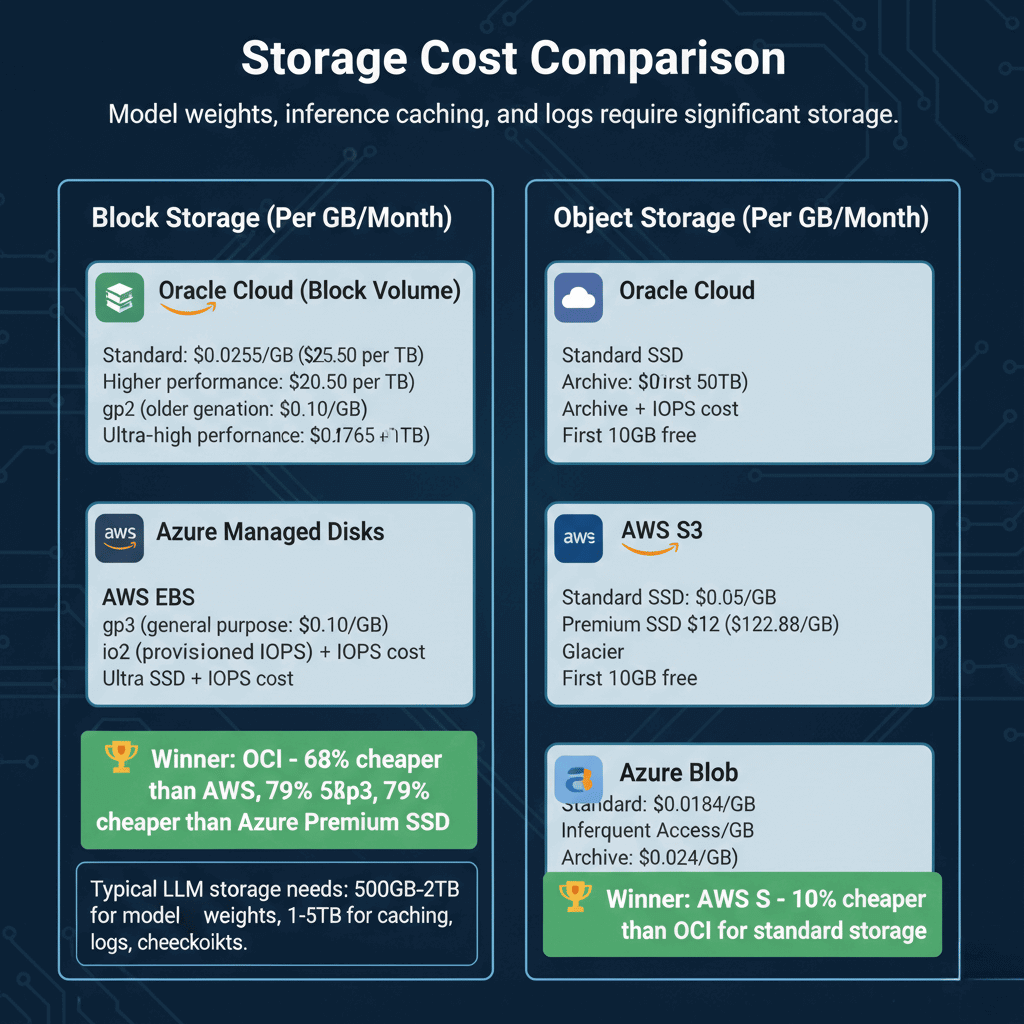

Storage Cost Comparison

Model weights, inference caching, and logs require significant storage.

Block Storage (Per GB/Month)

Oracle Cloud (Block Volume):

- Standard: $0.0255/GB ($25.50 per TB)

- Higher performance: $0.0425/GB

- Ultra-high performance: $0.0765/GB

AWS EBS:

- gp3 (general purpose): $0.08/GB ($80 per TB)

- gp2 (older generation): $0.10/GB

- io2 (provisioned IOPS): $0.125/GB + IOPS cost

Azure Managed Disks:

- Standard SSD: $0.05/GB

- Premium SSD: $0.12/GB (about $122.88 for a 1,024GB disk)

- Ultra SSD: $0.125/GB + IOPS cost

Winner: OCI - 68% cheaper than AWS gp3, 79% cheaper than Azure Premium SSD.

Typical LLM storage needs: 500GB-2TB for model weights, 1-5TB for caching, logs, checkpoints.
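To turn those ranges into dollars, multiply the provisioned size by each provider's per-GB rate. The sketch below assumes decimal units (1TB = 1,000GB) and the headline block storage rates above; Azure Premium SSD is billed in fixed disk-size tiers, so its per-GB figure is an approximation.

```python
# Headline block storage rates from the list above, USD per GB-month
BLOCK_RATES_PER_GB = {
    "OCI Block Volume (standard)": 0.0255,
    "AWS EBS gp3": 0.08,
    "Azure Premium SSD": 0.12,
}


def block_storage_cost(size_gb: float) -> dict:
    """Monthly cost of `size_gb` of block storage on each provider."""
    return {name: size_gb * rate for name, rate in BLOCK_RATES_PER_GB.items()}


# Upper end of the typical range: 2TB of weights plus 5TB of cache, logs, and checkpoints
for name, cost in block_storage_cost(7_000).items():
    print(f"{name}: ${cost:,.2f}/month")
```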

Object Storage (Per GB/Month)

Oracle Cloud:

- Standard: $0.0255/GB

- Archive: $0.0010/GB

- First 10GB free

AWS S3:

- Standard: $0.023/GB (first 50TB)

- Infrequent Access: $0.0125/GB

- Glacier: $0.004/GB

Azure Blob:

- Hot tier: $0.0184/GB

- Cool tier: $0.01/GB

- Archive: $0.002/GB

Winner: Azure Blob Hot tier - 28% cheaper than OCI and 20% cheaper than AWS S3 Standard, which in turn is 10% cheaper than OCI.

Network and Data Transfer Costs

LLM inference generates significant network traffic from API requests and responses.

Data Egress Pricing (Per GB)

Oracle Cloud:

- First 10TB/month: FREE

- 10TB-50TB: $0.0085/GB

- 50TB+: Variable by region

AWS:

- First 100GB/month: Free

- Next 10TB: $0.09/GB

- 10TB-50TB: $0.085/GB

- 50TB-150TB: $0.07/GB

Azure:

- First 100GB/month: Free

- Next 10TB: $0.087/GB

- 10TB-50TB: $0.083/GB

- 50TB-150TB: $0.07/GB

Winner: OCI - 10TB free per month versus 100GB on AWS and Azure, and $0.0085/GB beyond that, roughly one-tenth of the AWS and Azure rates.

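Example: 3TB/month of egress (about 100GB/day). Serving 100M tokens/day produces this much traffic only if each token carries roughly 1KB of response data once JSON and streaming overhead are included; at 1 byte per token it would be about 3GB/month.

- OCI: $0 (under 10TB free)

- AWS: $261 (2,900GB billable after the free 100GB, at $0.09/GB)

- Azure: $252 (2,900GB billable after the free 100GB, at $0.087/GB)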
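Tiered egress pricing is easiest to reason about as a cumulative calculation. The sketch below encodes the tiers listed above, assuming decimal units (1TB = 1,000GB) and treating the AWS and Azure 100GB free allowance as part of the first 10TB. It raises an error beyond the highest modeled tier, since OCI lists 50TB+ as region-dependent. Real invoices can differ, for example in how free allowances are aggregated across services.

```python
# Cumulative tier ceilings in GB (1TB = 1,000GB) paired with the $/GB rate that
# applies up to each ceiling, taken from the egress rates listed above.
EGRESS_TIERS = {
    "OCI": [(10_000, 0.0), (50_000, 0.0085)],
    "AWS": [(100, 0.0), (10_000, 0.09), (50_000, 0.085), (150_000, 0.07)],
    "Azure": [(100, 0.0), (10_000, 0.087), (50_000, 0.083), (150_000, 0.07)],
}


def egress_cost(provider: str, gb_per_month: float) -> float:
    """Monthly internet egress cost under the simplified tier table above."""
    cost, lower = 0.0, 0.0
    for ceiling, rate in EGRESS_TIERS[provider]:
        if gb_per_month <= lower:
            break  # all traffic already billed in lower tiers
        billable = min(gb_per_month, ceiling) - lower
        cost += billable * rate
        lower = ceiling
    if gb_per_month > lower:
        raise ValueError(f"{gb_per_month} GB exceeds the modeled tiers for {provider}")
    return cost


for provider in EGRESS_TIERS:
    print(f"{provider}: ${egress_cost(provider, 3_000):,.2f}/month for 3TB")
```

For 3TB this gives $0 on OCI, $261.00 on AWS, and $252.30 on Azure; for 5TB it gives $441.00 on AWS, matching the small deployment example.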

Ingress Pricing

All three clouds offer free data ingress. No charges for uploading models or sending requests.

Total Cost of Ownership Examples

Real-world deployment cost comparison.

Small Production Deployment (Llama 2 7B)

Configuration:

- 2x A10 GPU instances

- 500GB block storage for models

- 2TB object storage for logs

- 5TB monthly egress

Monthly Costs:

Oracle Cloud:

- 2x VM.GPU.A10.1: $2,190

- Block storage: $13

- Object storage: $51

- Egress: $0 (free tier)

- Total: $2,254/month

AWS:

- 2x g5.xlarge: $1,468

- EBS gp3: $40

- S3 standard: $46

- Egress: $441

- Total: $1,995/month

Azure:

- 2x NC6s_v3: $4,468

- Premium SSD: $61

- Blob hot: $37

- Egress: $426

- Total: $4,992/month

Winner: AWS - 11% cheaper than OCI, 60% cheaper than Azure.
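Each total is the sum of its line items, and each percentage is measured against the more expensive bill. A minimal sketch using the figures above (`MonthlyBill` is a hypothetical helper):

```python
from dataclasses import dataclass


@dataclass
class MonthlyBill:
    provider: str
    compute: float
    block_storage: float
    object_storage: float
    egress: float

    @property
    def total(self) -> float:
        return self.compute + self.block_storage + self.object_storage + self.egress


# Line items from the small Llama 2 7B deployment above, USD/month
bills = [
    MonthlyBill("OCI", 2_190, 13, 51, 0),
    MonthlyBill("AWS", 1_468, 40, 46, 441),
    MonthlyBill("Azure", 4_468, 61, 37, 426),
]

cheapest = min(bills, key=lambda b: b.total)
for bill in bills:
    if bill is cheapest:
        print(f"{bill.provider}: ${bill.total:,.0f}/month (cheapest)")
    else:
        # Savings are expressed relative to the more expensive bill
        saving = (bill.total - cheapest.total) / bill.total * 100
        print(f"{bill.provider}: ${bill.total:,.0f}/month, "
              f"{cheapest.provider} is {saving:.0f}% cheaper")
```

Run against the line items above, it reports AWS as cheapest, 11% below OCI and 60% below Azure.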

Medium Production Deployment (Llama 2 70B)

Configuration:

- 4x A100 40GB instances

- 2TB block storage

- 5TB object storage

- 15TB monthly egress

Monthly Costs:

Oracle Cloud:

- 4x VM.GPU.A100.1: $8,616

- Block storage: $51

- Object storage: $128

- Egress: $43 (5TB over free tier)

- Total: $8,838/month

AWS:

- p4d.24xlarge prorated to 4 of 8 GPUs: $11,962 (the full instance bills $23,923 unless the other 4 GPUs serve other workloads)

- EBS: $160

- S3: $115

- Egress: $1,333

- Total: $13,570/month

Azure:

- 4x NC24ads_A100_v4: $10,724

- Premium SSD: $245

- Blob: $92

- Egress: $1,306

- Total: $12,367/month

Winner: OCI - 35% cheaper than AWS, 29% cheaper than Azure.

Note: AWS costs drop significantly with reserved instances (1-3 year commitments); the 1-year p4d.24xlarge rate is about 40% below on-demand.
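The prorated AWS compute line assumes the other four GPUs on the p4d.24xlarge are paid for by other workloads. If they sit idle, the full instance price applies. A sketch using the medium deployment line items above:

```python
# Medium deployment line items from above, USD/month
OCI_TOTAL = 8_616 + 51 + 128 + 43        # compute + block + object + egress
P4D_MONTHLY = 23_923                     # full p4d.24xlarge (8x A100 40GB), on-demand
AWS_NON_COMPUTE = 160 + 115 + 1_333      # EBS + S3 + egress


def aws_total(gpus_billed: int) -> float:
    """AWS monthly total when `gpus_billed` of the p4d's 8 GPUs are charged to this workload."""
    return P4D_MONTHLY * gpus_billed / 8 + AWS_NON_COMPUTE


for label, gpus in [("prorated, 4 of 8 GPUs", 4), ("full instance, 4 GPUs idle", 8)]:
    total = aws_total(gpus)
    oci_saving = (total - OCI_TOTAL) / total * 100
    print(f"AWS {label}: ${total:,.0f}/month, OCI {oci_saving:.0f}% cheaper")
```

In the idle-GPU case OCI comes out about 65% cheaper rather than 35%.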

Large Production Deployment (Multiple 70B Models)

Configuration:

- 8x A100 GPUs (one full bare metal server)

- 10TB block storage

- 20TB object storage

- 50TB monthly egress

Monthly Costs:

Oracle Cloud (BM.GPU.A100-v2.8):

- 1x bare metal: $17,232

- Block storage: $255

- Object storage: $510

- Egress: $340 (40TB over free tier)

- Total: $18,337/month

AWS (p4d.24xlarge):

- 1x instance: $23,923

- EBS: $800

- S3: $460

- Egress: $4,350

- Total: $29,533/month

Azure (2x NC96ads_A100_v4):

- 2x instances (4x A100 80GB each, 8 GPUs total): ~$21,450

- Premium SSD: $1,229

- Blob: $368

- Egress: $4,183

- Total: ~$27,230/month

Winner: OCI - 38% cheaper than AWS, 33% cheaper than Azure.

When to Choose Each Cloud Provider

Select cloud based on your specific requirements.

Choose Oracle Cloud When:

- Running large GPU deployments (4+ A100 or H100 GPUs)

- High data egress requirements (>10TB/month)

- Need maximum price-performance for compute

- Using Oracle Database or other OCI services

- Willing to use monthly commit pricing (about 10% below on-demand in the rates above)

Best for: Cost-sensitive large deployments, high-traffic API services.

Choose AWS When:

- Need single A10 GPU instances

- Want per-second billing flexibility

- Using AWS ecosystem (SageMaker, Bedrock, S3, Lambda)

- Require global presence (25+ regions)

- Can commit to reserved instances (40-60% savings)

Best for: Flexible small deployments, AWS-native architectures, global reach.

Choose Azure When:

- Integrated with Microsoft enterprise agreements

- Using Azure AI services, Azure ML

- Need hybrid cloud with on-premise integration

- Existing Microsoft partnerships and credits

Best for: Microsoft-centric organizations, hybrid deployments.

Hidden Costs to Consider

Beyond headline prices, factor in these costs.

Support Plans

- OCI: Free basic support, $500+/month for production

- AWS: Free basic, Business support billed as a tiered share of monthly spend starting at 10%

- Azure: Free basic, 10%+ for professional direct

Data Transfer Between Regions

All clouds charge for cross-region transfer:

- OCI: $0.01-0.02/GB

- AWS: $0.02/GB

- Azure: $0.02/GB

Load Balancers

- OCI: $0.01/hour + bandwidth

- AWS ALB: $0.0225/hour + LCU charges

- Azure: $0.025/hour + data processed

Reserved Instance Flexibility

- AWS: Most flexible, can sell on marketplace

- Azure: Can exchange, limited refunds

- OCI: No marketplace, exchange possible

Cost Optimization Strategies

Reduce cloud costs regardless of provider.

Use Reserved Instances

Commit 1-3 years for 40-60% savings:

- AWS: 40% (1-year), 60% (3-year)

- Azure: 35% (1-year), 55% (3-year)

- OCI: 10% (monthly commit), 40% (annual)
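Computing the discount implied by each committed rate quoted in the instance listings is a quick way to check these percentages against actual prices. A sketch using only rates from this article:

```python
# (on-demand, committed) hourly rates quoted in the instance listings above
COMMITTED_RATES = {
    "OCI VM.GPU.A10.1 (monthly commit)": (1.50, 1.35),
    "OCI VM.GPU.A100.1 (monthly commit)": (2.95, 2.655),
    "AWS g5.xlarge (1-year reserved)": (1.006, 0.605),
    "AWS p4d.24xlarge (1-year reserved)": (32.77, 19.68),
    "AWS p5.48xlarge (1-year reserved)": (98.32, 57.08),
    "Azure NC6s_v3 (1-year reserved)": (3.06, 1.938),
    "Azure NC24ads_A100_v4 (1-year reserved)": (3.673, 2.394),
}


def discount_pct(on_demand: float, committed: float) -> float:
    """Percentage saved by the committed rate relative to on-demand."""
    return (1 - committed / on_demand) * 100


for name, (on_demand, committed) in COMMITTED_RATES.items():
    print(f"{name}: {discount_pct(on_demand, committed):.0f}% off on-demand")
```

On those rates, the AWS and Azure 1-year discounts land between 35% and 42%, and the OCI monthly commit rates sit 10% below on-demand.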

Leverage Free Tiers

- OCI: 10TB of free egress per month, worth about $900/month at AWS rates

- AWS: 100GB egress, free CloudFront (in some cases)

- Azure: 100GB egress, free services up to limits

Right-Size Instances

Don't overprovision:

- Monitor GPU utilization (target 70-85%)

- Use smaller instances during off-peak

- Auto-scale based on demand
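The utilization target translates directly into an instance count. A minimal sketch, assuming load spreads evenly across GPUs and scales linearly with GPU count (a simplification; real throughput depends on batching and model parallelism), and using 80%, roughly the middle of the 70-85% band, as a hypothetical default:

```python
import math


def gpus_for_target(current_gpus: int, avg_utilization: float,
                    target_utilization: float = 0.80) -> int:
    """Smallest GPU count that carries the current load at or below the target utilization."""
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    busy_gpus = current_gpus * avg_utilization  # load expressed in fully busy GPUs
    needed = busy_gpus / target_utilization
    # Small tolerance so float noise (e.g. 8.000000000000002) doesn't add a GPU
    return max(1, math.ceil(needed - 1e-9))


print(gpus_for_target(10, 0.45))  # over-provisioned fleet
print(gpus_for_target(4, 0.95))   # running hot
```

Ten GPUs averaging 45% utilization could run on six; four GPUs at 95% need a fifth to get back under the target.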

Optimize Storage

- Archive old models to cheap tiers

- Use lifecycle policies for automatic tiering

- Clean up unused snapshots and logs
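As one concrete example of a lifecycle policy, the sketch below builds an AWS S3 lifecycle configuration that moves retired model weights and old inference logs to the Glacier storage class after 30 days and deletes the logs after a year. The prefixes, day counts, and bucket name are hypothetical; OCI Object Storage and Azure Blob Storage offer equivalent lifecycle rules with their own schemas.

```python
import json
from typing import Optional


def archive_rule(prefix: str, days_to_archive: int,
                 days_to_expire: Optional[int] = None) -> dict:
    """One S3 lifecycle rule: transition objects under `prefix` to Glacier, optionally expire them."""
    rule = {
        "ID": "archive-" + prefix.strip("/").replace("/", "-"),
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Transitions": [{"Days": days_to_archive, "StorageClass": "GLACIER"}],
    }
    if days_to_expire is not None:
        rule["Expiration"] = {"Days": days_to_expire}
    return rule


lifecycle = {
    "Rules": [
        archive_rule("models/retired/", days_to_archive=30),
        archive_rule("logs/inference/", days_to_archive=30, days_to_expire=365),
    ]
}
print(json.dumps(lifecycle, indent=2))

# Applying it requires boto3 and AWS credentials (bucket name is hypothetical):
# import boto3
# boto3.client("s3").put_bucket_lifecycle_configuration(
#     Bucket="my-llm-artifacts", LifecycleConfiguration=lifecycle)
```

Glacier objects carry a minimum storage duration and retrieval charges, so this suits artifacts you rarely need back.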

Conclusion

Cloud provider selection significantly impacts LLM deployment costs and operational efficiency. Oracle Cloud Infrastructure delivers 20-58% cost savings on A100 and H100 GPU instances compared to AWS and Azure, making it optimal for large production deployments with 4+ GPUs. OCI's 10TB free monthly egress eliminates data transfer costs that reach $900 monthly on competing clouds. AWS provides best value for A10-based small deployments and offers unmatched ecosystem integration with SageMaker and Bedrock. Azure fits Microsoft-centric organizations with enterprise agreements and hybrid cloud requirements. Beyond headline instance pricing, account for support costs, data transfer fees, load balancers, and reserved instance flexibility. Optimize spending through reserved instances offering 35-60% savings, aggressive use of free tiers, right-sized instances matching actual utilization, and storage lifecycle policies. Choose your platform based on specific workload characteristics and ecosystem requirements rather than instance prices alone.

Frequently Asked Questions

Should I use spot instances or preemptible VMs for LLM inference?

Avoid spot/preemptible instances for production LLM inference. While they offer 60-90% discounts, they can be terminated with little notice (30-120 seconds), disrupting active inference requests and causing poor user experience. Spot instances work for batch processing, model training, or development environments where interruptions are acceptable. For production inference, use on-demand or reserved instances to guarantee availability. If cost is critical, consider reserved instances (40-60% savings) or OCI's monthly flex pricing instead of risking service disruptions with spot instances.

How do cloud costs compare to on-premise deployment?

On-premise becomes cost-effective at scale with stable workloads. An A100 GPU costs $10,000-15,000, while renting one in the cloud costs $2,000-3,000/month, so on hardware cost alone breakeven occurs at roughly 3-8 months. However, on-premise requires upfront capital, data center space, power, cooling, and IT staff, all of which extend that payback period. Cloud offers flexibility, global reach, and no maintenance burden. Choose on-premise if you operate 10+ GPUs 24/7 and have existing data center infrastructure and in-house expertise. Choose cloud for variable workloads, global deployment, or if you lack capital for upfront hardware investment. Hybrid approaches work well: use on-premise for baseline load and cloud for burst capacity.
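The breakeven estimate is hardware cost divided by the monthly cloud bill it replaces. The sketch below adds an optional per-GPU on-premise operating cost (an assumption standing in for power, cooling, space, and staff) to show how quickly those overheads stretch the payback period:

```python
def breakeven_months(hardware_cost: float, cloud_monthly: float,
                     onprem_monthly_opex: float = 0.0) -> float:
    """Months until buying a GPU costs less than renting one.

    onprem_monthly_opex covers power, cooling, space, and staff per GPU; the default
    of 0 gives the hardware-only estimate.
    """
    monthly_saving = cloud_monthly - onprem_monthly_opex
    if monthly_saving <= 0:
        return float("inf")  # operating costs eat the savings: on-prem never pays off
    return hardware_cost / monthly_saving


print(f"{breakeven_months(10_000, 3_000):.1f} months")  # cheapest card, priciest cloud
print(f"{breakeven_months(15_000, 2_000):.1f} months")  # priciest card, cheapest cloud
print(f"{breakeven_months(15_000, 2_000, onprem_monthly_opex=500):.1f} months")
```

With a hypothetical $500/month of operating cost per GPU, the $15,000 card against a $2,000/month cloud rate stretches from 7.5 to 10 months.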

Can I mix cloud providers for better pricing?

Yes, multi-cloud strategies can optimize costs. Deploy latency-sensitive inference on OCI for price-performance, use AWS S3 for cost-effective model storage, and leverage Azure for Microsoft enterprise integration. However, multi-cloud adds complexity: data moving between clouds is billed as internet egress by the sending provider (up to $0.09/GB from AWS at the rates above), you need multiple management tools, and staff must learn multiple platforms. The operational overhead typically justifies multi-cloud only for large organizations (100+ GPUs) or when specific services require different clouds. For most teams, optimize within a single cloud through reserved instances, auto-scaling, and storage tiering before adding multi-cloud complexity.