CI/CD for Performance Optimization

Integrate performance into CI/CD pipelines. Learn automated performance testing, build optimization, deployment strategies, and performance budgets to catch regressions before production.

TL;DR

- Shift performance left into CI/CD: Automate benchmark tests, load tests, and response time assertions in every pipeline run. Fail builds when critical paths degrade or SLO thresholds are breached.

- Optimize builds for speed: Use tree shaking, code splitting, image optimization, and multi-stage container builds to minimize artifact sizes. Smaller builds mean faster deployments and better cold start performance.

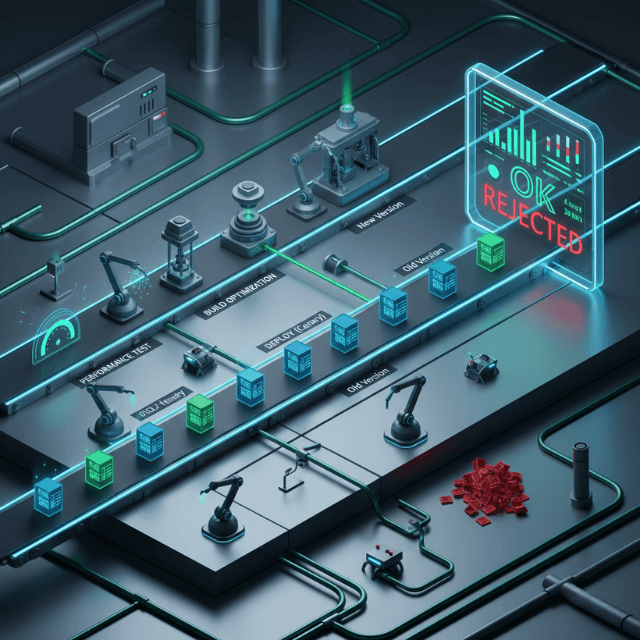

- Deploy with performance in mind: Implement canary releases, blue-green deployments, and feature flags to limit blast radius. Monitor metrics during rollout and auto-rollback if error rates or latencies exceed thresholds.

- Enforce performance budgets: Set limits on bundle size, API response size, and third-party script impact. CI gates automatically enforce budgets, preventing performance debt from accumulating.

- Don't forget pipeline performance: Cache dependencies, run tests in parallel, and use incremental builds. A fast pipeline keeps developer velocity high while maintaining performance checks.

Continuous Integration and Continuous Deployment pipelines do more than ship code faster. When designed intentionally, CI/CD becomes a performance optimization tool. Automated performance tests catch regressions before production.

Build optimizations reduce artifact sizes. Deployment strategies minimize user-facing impact. Integrating performance into every stage of delivery creates faster, more reliable applications.

Performance Testing in CI Pipelines

Automated performance tests catch regressions before they reach production. Unit tests verify correctness. Performance tests verify speed.

Benchmark tests measure specific function performance. Run benchmarks on every commit to detect slowdowns. Fail builds when critical paths degrade.

# GitHub Actions performance testing
performance-test:
  runs-on: ubuntu-latest
  steps:
    - uses: actions/checkout@v4
    - name: Run benchmarks
      run: |
        npm run benchmark -- --json > results.json
    - name: Compare with baseline
      run: |
        node scripts/compare-benchmarks.js results.json baseline.json
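The comparison script called in the last step is not shown here. As a rough sketch of what such a check might do, the Python version below compares each benchmark's mean time against a stored baseline and exits non-zero when anything is slower than a tolerance. The JSON shape (benchmark name mapped to mean milliseconds) and the 10% tolerance are assumptions for illustration, not the output format of any particular benchmark tool.

```python
import json
import sys


def find_regressions(results, baseline, tolerance=0.10):
    """Return (name, baseline_ms, current_ms) for benchmarks slower than
    the baseline by more than `tolerance` (0.10 means 10%)."""
    regressions = []
    for name, base_ms in sorted(baseline.items()):
        current_ms = results.get(name)
        if current_ms is None:
            continue  # benchmark removed or renamed; not treated as a regression here
        if current_ms > base_ms * (1 + tolerance):
            regressions.append((name, base_ms, current_ms))
    return regressions


def main(results_path, baseline_path):
    # Assumed file format: {"benchmark name": mean_milliseconds, ...}
    with open(results_path) as f:
        results = json.load(f)
    with open(baseline_path) as f:
        baseline = json.load(f)
    regressions = find_regressions(results, baseline)
    for name, base_ms, current_ms in regressions:
        print(f"REGRESSION {name}: {base_ms:.2f} ms -> {current_ms:.2f} ms")
    return 1 if regressions else 0  # non-zero exit code fails the CI step


if __name__ == "__main__" and len(sys.argv) == 3:
    sys.exit(main(sys.argv[1], sys.argv[2]))
```

A tolerance is worth keeping because shared CI runners are noisy; a zero-tolerance comparison would fail builds on measurement jitter alone.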

Load tests verify system behavior under stress. Run scaled-down load tests in CI for critical paths. Full load tests can run nightly or before releases.

Response time assertions fail builds when endpoints slow down. Set acceptable thresholds based on SLOs. Alert teams before slowdowns reach users.

// k6 load test with thresholds
import http from 'k6/http';
import { check } from 'k6';

export const options = {
  thresholds: {
    http_req_duration: ['p(95)<200'], // 95th percentile under 200ms
    http_req_failed: ['rate<0.01'], // Less than 1% failures
  },
};

export default function () {
  const res = http.get('http://localhost:3000/api/orders');
  check(res, { 'status is 200': (r) => r.status === 200 });
}

Database migration performance testing prevents slow deployments. Test migrations against production-sized datasets. Catch migrations that would lock tables for extended periods.

Memory and CPU profiling in tests identifies resource issues. Profile critical paths during test runs. Flag tests that consume excessive resources.
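One way to flag excessive memory use in a test is to measure peak allocation around a critical path and compare it with a budget. The sketch below uses Python's standard `tracemalloc` module; `build_report` and the budget values are hypothetical stand-ins for a real code path and limit.

```python
import tracemalloc


def peak_memory_bytes(func, *args, **kwargs):
    """Run func and return (result, peak bytes allocated by Python during the call)."""
    tracemalloc.start()
    try:
        result = func(*args, **kwargs)
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return result, peak


def build_report(n):
    # Hypothetical critical path: materialises n rows in memory.
    return [{"id": i, "label": f"row-{i}"} for i in range(n)]


def check_memory_budget(func, budget_bytes, *args):
    """Return (within_budget, peak_bytes) for one call of func(*args)."""
    _, peak = peak_memory_bytes(func, *args)
    return peak <= budget_bytes, peak
```

Note that `tracemalloc` only sees allocations made through Python's allocator, so memory used inside native extensions may need a different tool.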

Build Optimization Techniques

Smaller builds deploy faster and use less bandwidth. Every megabyte matters for cold starts and container pulls.

Tree shaking removes unused code. Modern bundlers eliminate dead code automatically. Verify tree shaking works by checking bundle sizes.

// webpack-bundle-analyzer reveals what's included
const BundleAnalyzerPlugin = require('webpack-bundle-analyzer').BundleAnalyzerPlugin;

module.exports = {
  plugins: [
    new BundleAnalyzerPlugin({
      analyzerMode: 'static', // write an HTML report instead of starting a server
      reportFilename: 'bundle-report.html',
      openAnalyzer: false, // don't try to open a browser on a CI runner
    }),
  ],
};

A dependency audit identifies bloated packages. Some packages pull in massive transitive dependencies, and alternatives may provide the same functionality with a smaller footprint.

Code splitting separates rarely used code. Load code on demand rather than upfront. This reduces the initial bundle size and time-to-interactive.

Image optimization happens at build time. Compress images, generate responsive variants, and convert to modern formats (WebP, AVIF).

# Optimize images during build
- name: Optimize images
  run: |
    npx sharp-cli --input "src/images/*.{jpg,png}" \
      --output "dist/images" \
      --format webp \
      --quality 80

Container image optimization reduces deployment time. Multi-stage builds minimize final image size. Base image selection affects pull times.

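# Multi-stage build for minimal image
FROM node:20-alpine AS build
WORKDIR /app
COPY package*.json ./
# Install production dependencies only (--omit=dev replaces the deprecated --only=production)
RUN npm ci --omit=dev

FROM node:20-alpine
WORKDIR /app
COPY --from=build /app/node_modules ./node_modules
# dist/ is expected to be built before `docker build` runs
COPY dist ./dist
CMD ["node", "dist/server.js"]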

Deployment Strategies for Performance

Blue-green deployments enable instant rollback. Two identical environments alternate between production and staging. Switch traffic instantly if problems emerge.

Canary deployments limit blast radius. Route small traffic percentages to new versions. Monitor performance before expanding rollout.

# Kubernetes canary deployment
apiVersion: argoproj.io/v1alpha1
kind: Rollout
spec:
  strategy:
    canary:
      steps:
        - setWeight: 5
        - pause: {duration: 10m}
        - setWeight: 20
        - pause: {duration: 10m}
        - setWeight: 50
        - pause: {duration: 10m}

Rolling updates minimize resource spikes. Update instances gradually rather than simultaneously. This keeps a deployment from overwhelming databases with a burst of new instances.

Warm-up periods prepare new instances. Send requests to health endpoints, and ideally to representative application endpoints, before routing traffic, so that caches are warm and connections are established.
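A warm-up gate can be sketched as a loop that only declares an instance ready after several consecutive successful probes. In the sketch below, `probe` is a hypothetical zero-argument callable (for example, a function that requests an endpoint and checks status and latency); the streak length and attempt limit are illustrative defaults.

```python
import time


def wait_until_warm(probe, required_successes=3, max_attempts=20,
                    delay_seconds=0.0, sleep=time.sleep):
    """Call probe() until it succeeds `required_successes` times in a row.

    Returns True if the instance reached the required streak within
    `max_attempts` probes, otherwise False.
    """
    streak = 0
    for _ in range(max_attempts):
        try:
            ok = bool(probe())
        except Exception:
            ok = False  # connection refused, timeout, and similar failures
        streak = streak + 1 if ok else 0  # any failure resets the streak
        if streak >= required_successes:
            return True
        sleep(delay_seconds)
    return False
```

Requiring a streak rather than a single success avoids routing traffic to an instance that answered once while its caches and connection pools were still cold.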

Feature flags separate deployment from release. Deploy code without activating features. Enable gradually and monitor performance impact.

from feature_flags import is_enabled

def process_order(order):
    if is_enabled('new_order_processing', user=order.user):
        return new_process_order(order)  # Monitor performance
    return legacy_process_order(order)
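Enabling a flag gradually usually means assigning each user to a stable bucket and raising a percentage over time. The sketch below shows one common way to do that with a hash; it is not the implementation of the `feature_flags` module used above, and the function names are hypothetical.

```python
import hashlib


def rollout_bucket(flag_name, user_id):
    """Map (flag, user) to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled_for(flag_name, user_id, rollout_percent):
    """True if the user falls inside the current rollout percentage."""
    return rollout_bucket(flag_name, user_id) < rollout_percent
```

Because the bucket depends only on the flag name and user ID, a user who sees the new path at 10% keeps seeing it at 50%, which keeps before-and-after performance comparisons consistent.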

Zero-downtime database migrations require careful planning. Use the expand-contract pattern: add new columns, migrate data, then remove old columns, each in a separate deployment.
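The expand and migrate steps can be sketched against an in-memory SQLite database. The `users` table and its columns are hypothetical, and a production database would need its own batching and locking considerations; the point here is only the ordering of the steps.

```python
import sqlite3


def expand(conn):
    # Step 1 (first deployment): add a new nullable column; old code keeps working.
    conn.execute("ALTER TABLE users ADD COLUMN full_name TEXT")


def backfill(conn, batch_size=2):
    # Step 2: copy data in small batches so no single statement holds locks for long.
    total = 0
    while True:
        cur = conn.execute(
            "UPDATE users SET full_name = "
            "COALESCE(first_name, '') || ' ' || COALESCE(last_name, '') "
            "WHERE id IN (SELECT id FROM users WHERE full_name IS NULL LIMIT ?)",
            (batch_size,),
        )
        conn.commit()
        if cur.rowcount == 0:
            return total
        total += cur.rowcount


def demo():
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE users (id INTEGER PRIMARY KEY, first_name TEXT, last_name TEXT)"
    )
    conn.executemany(
        "INSERT INTO users (first_name, last_name) VALUES (?, ?)",
        [("Ada", "Lovelace"), ("Alan", "Turing"), ("Grace", "Hopper")],
    )
    expand(conn)
    migrated = backfill(conn)
    names = [row[0] for row in conn.execute("SELECT full_name FROM users ORDER BY id")]
    # Step 3 (a later deployment, once nothing reads the old columns):
    # drop first_name and last_name.
    return migrated, names
```

Keeping the contract step in a later deployment means a rollback of the application code never finds a column missing.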

Monitoring Production Deployments

Deployment monitoring detects problems immediately. Compare metrics before and after deployment. Roll back automatically if thresholds are breached.

Real User Monitoring (RUM) shows actual user experience. Lab tests don't capture real-world conditions. Monitor Core Web Vitals during rollouts.

Error rate tracking catches deployment issues. Sudden error spikes indicate problems. Automated alerting notifies teams instantly.

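# Datadog deployment tracking
from datadog import statsd

def track_deployment(version):
    # Title and body are passed positionally because the keyword name for
    # the body has differed between versions of the datadog client.
    statsd.event(
        f'Deployment: {version}',
        'New version deployed',
        alert_type='info',
        tags=[f'version:{version}'],
    )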

Latency percentile comparison reveals degradation. Compare p50, p95, and p99 latencies before and after. Small average changes can hide significant tail latency increases.
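To make the point about averages concrete, the sketch below computes nearest-rank percentiles for two synthetic latency samples. The numbers are invented for illustration and are not measurements from a real system.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_summary(samples):
    return {
        "mean": sum(samples) / len(samples),
        "p50": percentile(samples, 50),
        "p95": percentile(samples, 95),
        "p99": percentile(samples, 99),
    }


# Illustrative data in milliseconds.
before = [100] * 100
after = [95] * 97 + [600] * 3  # most requests got faster, 3% got much slower
```

With this data the mean moves from 100 ms to about 110 ms and both p50 and p95 improve to 95 ms, while p99 jumps from 100 ms to 600 ms. A check on the mean or even p95 alone would miss the regression.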

Resource utilization tracking identifies efficiency changes. Memory usage, CPU utilization, and database connections should remain stable or improve after deployments.

Automated rollback triggers on metric thresholds. Configure automatic rollback when error rates or latencies exceed limits. Fast rollback minimizes user impact.
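A rollback trigger of this kind can be reduced to a small decision function over recent monitoring samples. The sketch below is one possible shape, assuming a hypothetical `Sample` record per monitoring interval; the 1% error rate and 500 ms p95 defaults are illustrative and should come from your own SLOs.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    """One monitoring interval after a deploy (field names are hypothetical)."""
    requests: int
    errors: int
    p95_ms: float


def should_roll_back(samples, max_error_rate=0.01, max_p95_ms=500.0, sustained=2):
    """Return True once either threshold is exceeded for `sustained` consecutive samples."""
    error_streak = 0
    latency_streak = 0
    for s in samples:
        error_rate = s.errors / s.requests if s.requests else 0.0
        error_streak = error_streak + 1 if error_rate > max_error_rate else 0
        latency_streak = latency_streak + 1 if s.p95_ms > max_p95_ms else 0
        if error_streak >= sustained or latency_streak >= sustained:
            return True
    return False
```

Requiring consecutive breaches keeps a single noisy interval from triggering a rollback, at the cost of reacting one interval later.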

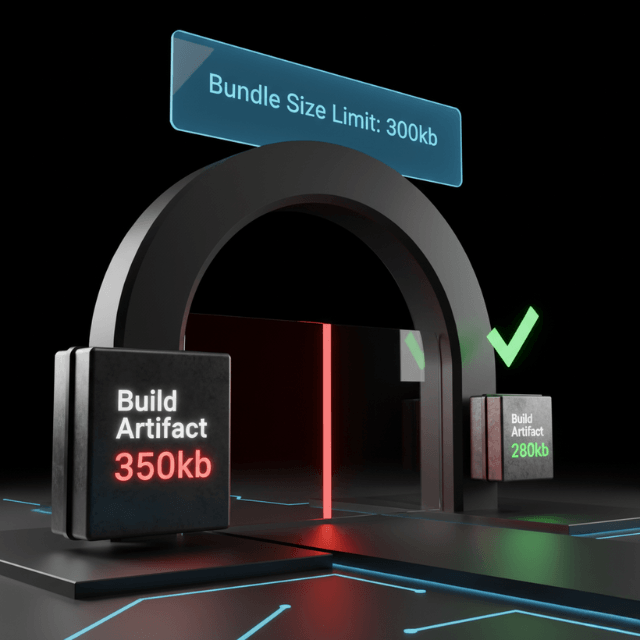

Performance Budgets and Gates

Performance budgets define acceptable limits. Bundle size budgets prevent creeping bloat. Response time budgets maintain user experience.

CI gates enforce budgets automatically. Fail builds that exceed bundle size limits. Block deployments that fail performance tests.

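# Lighthouse CI performance budget (lighthouserc.yml)
ci:
  collect:
    url:
      - http://localhost:3000/
  assert:
    assertions:
      # Category scores range from 0 to 1, so 0.9 corresponds to a score of 90
      "categories:performance":
        - warn
        - minScore: 0.9
      # Size limits are in bytes (the 300/150/30 budget read as kilobytes);
      # change "warn" to "error" to fail the build on a breach
      "resource-summary:total:size":
        - warn
        - maxNumericValue: 300000
      "resource-summary:script:size":
        - warn
        - maxNumericValue: 150000
      "resource-summary:stylesheet:size":
        - warn
        - maxNumericValue: 30000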

Bundle size tracking over time reveals trends. Small increases compound. Track sizes in CI and alert on growth.
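Tracking growth between builds can be as simple as comparing two size manifests. The sketch below assumes each build produces a mapping of bundle name to size in bytes; the 5% growth limit is an illustrative default.

```python
def size_growth_report(previous, current, max_growth=0.05):
    """Compare bundle sizes (bytes) between two builds.

    Returns (bundle, old_bytes, new_bytes, growth_fraction) for bundles that
    grew by more than `max_growth`. Bundles with no previous size are
    reported with a growth of None so they can be reviewed manually.
    """
    flagged = []
    for name in sorted(current):
        new_size = current[name]
        old_size = previous.get(name)
        if not old_size:
            flagged.append((name, 0, new_size, None))
            continue
        growth = (new_size - old_size) / old_size
        if growth > max_growth:
            flagged.append((name, old_size, new_size, growth))
    return flagged
```

Per-build limits matter because growth compounds: a 2% increase per release adds up to roughly 49% after 20 releases.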

Third-party script budgets limit external impact. Third-party scripts often cause performance problems. Budget their size and execution time.

API response size budgets prevent payload bloat. Large responses slow mobile users. Monitor and limit response sizes.

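// Express middleware to track response size
app.use((req, res, next) => {
  const originalSend = res.send;

  res.send = function (body) {
    // res.send() also accepts objects (forwarded to res.json) and may be
    // called with no body, so only measure strings and Buffers
    if (typeof body === 'string' || Buffer.isBuffer(body)) {
      const size = Buffer.byteLength(body);
      if (size > 100000) {
        console.warn(`Large response: ${req.path} - ${size} bytes`);
      }
    }
    return originalSend.call(this, body);
  };

  next();
});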

Infrastructure as Code Performance

Terraform and similar tools manage infrastructure as code. Performance considerations apply to infrastructure changes too.

Resource sizing automation prevents over-provisioning. Right-size instances based on metrics. Automate scaling policies in code.

# Terraform auto-scaling configuration
resource "aws_autoscaling_policy" "scale_up" {
  name                   = "scale-up"
  scaling_adjustment     = 2
  adjustment_type        = "ChangeInCapacity"
  cooldown               = 300
  autoscaling_group_name = aws_autoscaling_group.app.name
}

resource "aws_cloudwatch_metric_alarm" "high_cpu" {
  alarm_name          = "high-cpu"
  comparison_operator = "GreaterThanThreshold"
  evaluation_periods  = 2
  metric_name         = "CPUUtilization"
  namespace           = "AWS/EC2"
  statistic           = "Average"
  period              = 120 # seconds; assumed value, tune to your workload
  threshold           = 70
  alarm_actions       = [aws_autoscaling_policy.scale_up.arn]

  # Scope the alarm to the instances in the scaling group
  dimensions = {
    AutoScalingGroupName = aws_autoscaling_group.app.name
  }
}

Network configuration affects latency. Availability zone selection, VPC peering, and subnet configuration impact performance.

Database configuration in code ensures consistency. Connection limits, cache sizes, and parameter tuning are version-controlled and applied automatically.

CDN and caching configuration belongs in code as well. Cache policies, TTLs, and invalidation rules are managed alongside application code.

Pipeline Performance Itself

Slow pipelines slow development. Pipeline optimization directly affects team productivity.

Caching dependencies reduces build time. Cache npm, pip, or maven dependencies between builds. Avoid downloading the same packages repeatedly.

# GitHub Actions dependency caching
- name: Cache npm downloads
  uses: actions/cache@v4
  with:
    path: ~/.npm
    key: ${{ runner.os }}-node-${{ hashFiles('**/package-lock.json') }}

Parallel test execution reduces total time. Split tests across multiple runners. Run independent test suites simultaneously.
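Splitting tests evenly works best when it accounts for how long each test file takes. The sketch below uses a greedy longest-first assignment, assuming you have recorded per-file durations from a previous run; the function name and data shape are hypothetical.

```python
import heapq


def shard_tests(durations, shard_count):
    """Greedy longest-first assignment of test files to shards.

    `durations` maps a test file name to its last recorded runtime in seconds.
    Returns a list with one (total_seconds, [files]) entry per shard.
    """
    # Heap entries are (total so far, shard index, files); the unique index
    # breaks ties so the lists are never compared.
    heap = [(0.0, index, []) for index in range(shard_count)]
    heapq.heapify(heap)
    for name, seconds in sorted(durations.items(), key=lambda kv: (-kv[1], kv[0])):
        total, index, files = heapq.heappop(heap)  # least-loaded shard
        files.append(name)
        heapq.heappush(heap, (total + seconds, index, files))
    return [(total, files) for total, _, files in sorted(heap, key=lambda s: s[1])]
```

The wall-clock time of the test stage is set by the slowest shard, so balancing by duration usually helps more than balancing by file count.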

Incremental builds skip unchanged components. Build systems should detect what changed. Rebuild only affected modules.
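Change detection is often done by fingerprinting each module's sources and comparing against the fingerprints recorded by the last successful build. The sketch below shows that idea with in-memory data; the manifest format is an assumption, and a real build system would also rebuild modules that depend on a changed one.

```python
import hashlib


def fingerprint(files):
    """Hash a module's sources. `files` maps relative path -> bytes content."""
    digest = hashlib.sha256()
    for path in sorted(files):
        digest.update(path.encode("utf-8"))
        digest.update(b"\0")
        digest.update(files[path])
        digest.update(b"\0")
    return digest.hexdigest()


def modules_to_rebuild(modules, previous_manifest):
    """Return (changed module names, new manifest).

    `modules` maps module name -> {path: content}; `previous_manifest` maps
    module name -> fingerprint recorded by the last successful build.
    """
    manifest = {name: fingerprint(files) for name, files in modules.items()}
    changed = sorted(
        name for name, value in manifest.items()
        if previous_manifest.get(name) != value
    )
    return changed, manifest
```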

Pipeline stages run in parallel where possible. Lint, test, and build can often run simultaneously. Sequential stages add unnecessary delay.

Self-hosted runners provide consistent performance. Cloud runner availability and performance vary. Self-hosted runners offer predictable, often faster, execution.

Pipeline metrics identify bottlenecks. Track stage durations over time. Optimize the slowest stages first.
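Finding the slowest stages can be sketched as ranking stages by their median duration across recent runs. The data shape below (one dict of stage durations per run) is an assumption; most CI providers expose equivalent timings through their APIs.

```python
from statistics import median


def slowest_stages(runs, top=3):
    """Rank stages by median duration across runs.

    `runs` is a list of dicts mapping stage name -> duration in seconds.
    Returns up to `top` (stage, median_seconds) pairs, slowest first.
    """
    by_stage = {}
    for run in runs:
        for stage, seconds in run.items():
            by_stage.setdefault(stage, []).append(seconds)
    ranked = sorted(
        ((median(values), stage) for stage, values in by_stage.items()),
        reverse=True,
    )
    return [(stage, med) for med, stage in ranked[:top]]
```

The median is used instead of the mean so that one unusually slow run, such as a cold cache, does not distort the ranking.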

Conclusion

CI/CD pipelines are no longer just about shipping features—they're your first line of defense against performance regression. By embedding performance testing, build optimization, and deployment safeguards directly into your delivery workflow, you transform performance from a reactive firefight into a proactive, automated practice.

The key is making performance checks as non-negotiable as unit tests: fail builds that breach budgets, block deployments that degrade metrics, and monitor every rollout for anomalies. When performance becomes part of your definition of done—enforced automatically, not manually reviewed—you ship faster and more reliably. The result? Users experience consistent speed, and teams spend less time firefighting production slowdowns.

FAQs

How do I performance test in CI without slowing down the pipeline too much?

Use a tiered approach: run lightweight benchmark tests on every commit (seconds), scaled-down load tests on pull requests (minutes), and full-scale load tests nightly or pre-release (longer).

Cache dependencies and test results where possible. Parallelize test execution across multiple runners. The goal is to catch regressions early without blocking developer velocity—slow full tests can run out-of-band.

What metrics should trigger an automatic rollback during deployment?

Focus on user-impacting metrics: error rate (HTTP 5xx), p95/p99 latency, and business-critical success rates (e.g., checkout completion).

Set thresholds based on your SLOs—for example, "roll back if error rate > 1% for 2 minutes" or "if p95 latency exceeds 500ms for 5 minutes". Combine with canary analysis tools (Argo Rollouts, Flagger) that compare canary vs. baseline metrics statistically before deciding.

How do I set realistic performance budgets for a new project?

Start with industry benchmarks (Core Web Vitals: LCP < 2.5s, INP < 200ms, CLS < 0.1; INP replaced FID as a Core Web Vital in March 2024) and adjust based on your actual user base and device mix. For bundle budgets, analyze competitors or similar apps.

Use real user monitoring (RUM) data once available to refine budgets. The key is to set some budget initially (even if generous) to establish a baseline, then tighten over time. Budgets without enforcement are just wishes—make CI fail on breach.
