Optimizing Cloud-Native Infrastructure Costs

This guide covers the FinOps framework, Kubernetes cost strategies, and practical optimization techniques that reduce spending by 35-60% while maintaining performance.

Introduction

Organizations waste an average of 27% of cloud spending on idle resources, overprovisioned instances, and inefficient configurations. Cloud-native FinOps brings financial accountability to container environments, Kubernetes clusters, and microservices architectures.

The FinOps Framework

FinOps defines three iterative phases creating a continuous improvement cycle: Inform, Optimize, and Operate.

The Inform phase establishes cost visibility through data collection, allocation, and reporting. Implement tagging strategies that categorize resources by application, environment, and cost center. Deploy cost monitoring tools that collect data from all cloud providers into unified dashboards. Establish showback or chargeback mechanisms that attribute costs to the responsible teams and create accountability.
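As a rough illustration of tag-based showback, the sketch below sums spending per cost center and puts untagged spend in its own bucket. The record layout and the `cost_center` tag key are assumptions made for this example, not the export schema of any particular cloud provider.

```python
from collections import defaultdict


def allocate_costs(records, tag_key="cost_center"):
    """Sum cost per value of tag_key; untagged spend goes to 'unallocated'."""
    totals = defaultdict(float)
    for record in records:
        owner = record.get("tags", {}).get(tag_key, "unallocated")
        totals[owner] += record["cost"]
    return dict(totals)


# Hypothetical billing records for illustration only.
records = [
    {"resource": "vm-1", "cost": 120.0,
     "tags": {"cost_center": "payments", "environment": "prod"}},
    {"resource": "vm-2", "cost": 80.0,
     "tags": {"cost_center": "search", "environment": "prod"}},
    {"resource": "disk-7", "cost": 15.0, "tags": {}},
]

showback = allocate_costs(records)
```

The size of the `unallocated` bucket is a quick indicator of how well the tagging strategy is being followed.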

Develop forecasting capabilities that analyze historical trends to predict future spending. Establish KPIs such as cost per customer, cost per transaction, or cost per deployment to give raw spending business context. Anomaly detection systems alert teams to unexpected cost spikes so they can respond before the charges accumulate.
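One simple way to detect cost spikes is to compare today's spend against the recent mean and standard deviation. The sketch below assumes a short history of daily costs and a threshold of three standard deviations; both are illustrative choices, and commercial tools typically use more sophisticated models.

```python
from statistics import mean, stdev


def is_cost_anomaly(history, today, threshold=3.0):
    """Return True if today's cost is more than `threshold` sample
    standard deviations above the mean of the historical costs."""
    if len(history) < 2:
        return False  # not enough data to estimate variability
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return today > mu  # perfectly flat history: any increase stands out
    return (today - mu) / sigma > threshold


# Hypothetical daily costs in dollars.
daily_costs = [100.0, 104.0, 98.0, 101.0, 97.0, 103.0, 99.0]
spike = is_cost_anomaly(daily_costs, 150.0)
normal = is_cost_anomaly(daily_costs, 102.0)
```

Only upward deviations are flagged here, since an unexpected drop in spending rarely needs an urgent response.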

The Optimize phase uses these insights to identify and implement cost reductions. The goal is to balance competing priorities: reduce costs while maintaining performance, security, and reliability. Common strategies include rightsizing resources to match actual utilization, eliminating idle resources that incur costs without delivering value, and using commitment-based discounts such as reserved instances.

Implement autoscaling policies adjusting capacity based on demand, preventing overprovisioning. Storage optimization tiers data based on access patterns, deletes obsolete snapshots, and consolidates redundant volumes. Application optimization might include refactoring inefficient code, implementing connection pooling, or adopting serverless architectures.

The Operate phase turns FinOps from one-off projects into ongoing practices embedded in daily operations. Implement cloud governance policies that prevent costly mistakes before they occur: requiring tags on all resources, blocking deployment of oversized instances, and enforcing budget limits.
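A governance check of this kind can be sketched as a function that runs before a resource is provisioned. The required tag names and the 16-vCPU ceiling below are hypothetical values chosen for illustration; in practice such rules usually live in a policy engine or in the provisioning pipeline.

```python
REQUIRED_TAGS = {"application", "environment", "cost_center"}  # assumed tag set
MAX_VCPUS = 16  # hypothetical size ceiling


def policy_violations(resource):
    """Return a list of human-readable policy violations for a resource."""
    violations = []
    missing = REQUIRED_TAGS - set(resource.get("tags", {}))
    if missing:
        violations.append("missing tags: " + ", ".join(sorted(missing)))
    vcpus = resource.get("vcpus", 0)
    if vcpus > MAX_VCPUS:
        violations.append(f"instance has {vcpus} vCPUs, limit is {MAX_VCPUS}")
    return violations


compliant = {
    "vcpus": 8,
    "tags": {"application": "api", "environment": "prod", "cost_center": "payments"},
}
oversized = {"vcpus": 32, "tags": {"application": "api"}}
```

An empty list means the request can proceed; anything else can be returned to the requester as the reason for the block.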

Automation plays a critical role. Deploy Infrastructure as Code templates with cost-optimized defaults, scheduled shutdowns for non-production environments, and automated rightsizing recommendations. Integrate cost feedback into CI/CD pipelines, enabling developers to see cost implications before deploying changes.

Kubernetes Cost Optimization

Kubernetes complexity creates significant cost challenges. Organizations waste 35-40% of Kubernetes spending on idle resources, oversized clusters, and inefficient configurations.

Resource requests and limits require careful configuration. Requests reserve capacity for pod scheduling; limits cap resource consumption. Setting requests too high wastes capacity, while setting them too low lets the scheduler overpack nodes, which leads to resource contention and performance issues. Analyze actual utilization patterns using tools like Vertical Pod Autoscaler to set appropriate values based on historical usage.
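To make the idea of deriving requests from historical usage concrete, here is a minimal sketch that takes a high percentile of observed CPU usage and adds headroom. This is not the algorithm Vertical Pod Autoscaler uses; the 90th percentile and 15% headroom are assumptions for illustration.

```python
import math


def recommend_cpu_request(samples_millicores, percentile=90, headroom_percent=15):
    """Recommend a CPU request (in millicores) from usage samples.

    Uses the nearest-rank percentile of the samples, then adds headroom.
    Integer percentages keep the arithmetic exact.
    """
    if not samples_millicores:
        raise ValueError("need at least one usage sample")
    ordered = sorted(samples_millicores)
    rank = max(1, math.ceil(percentile * len(ordered) / 100))
    base = ordered[rank - 1]
    return math.ceil(base * (100 + headroom_percent) / 100)


# Hypothetical usage samples; the 400m value is a one-off spike.
usage = [120, 135, 110, 140, 150, 145, 130, 125, 160, 400]
request = recommend_cpu_request(usage)
```

Using a percentile rather than the maximum keeps a single spike (400m here) from inflating the request; whether short spikes should instead be absorbed by a higher limit depends on the workload.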

Cluster autoscaling automatically adjusts node pool sizes based on pod scheduling requirements. When pending pods cannot be scheduled due to insufficient capacity, the cluster autoscaler provisions additional nodes. When nodes run with low utilization, the autoscaler drains and removes them, reducing costs.

Combine cluster autoscaling with Horizontal Pod Autoscaler for optimal results. HPA adjusts pod replica counts based on metrics like CPU utilization or custom business metrics. As HPA creates more pods, cluster autoscaling provisions nodes. As HPA scales down, cluster autoscaling removes unnecessary nodes.
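The core scaling rule documented for the Horizontal Pod Autoscaler is desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), with no change when the ratio is within a small tolerance of 1. The sketch below is a simplified version of that rule; it ignores readiness handling, stabilization windows, and multiple metrics, and the default bounds are placeholders.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Simplified HPA-style replica calculation."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: do not scale
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# 4 pods averaging 90% CPU against a 60% target scale out to 6 pods.
scaled_out = desired_replicas(4, 90, 60)
# 4 pods averaging 20% CPU against a 60% target scale in to 2 pods.
scaled_in = desired_replicas(4, 20, 60, min_replicas=2)
```

When the added pods no longer fit on existing nodes they stay pending, which is the signal the cluster autoscaler uses to add capacity.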

Node pool optimization impacts costs significantly. Create multiple node pools optimized for different workload types: compute-optimized instances for CPU-intensive workloads, memory-optimized for data processing, and spot instances for batch processing. Mixing spot and on-demand instances reduces costs while maintaining reliability. Many organizations achieve 50-70% cost reduction on fault-tolerant workloads using spot instances.
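The effect of mixing spot and on-demand capacity can be estimated with simple arithmetic. The prices and discount in this sketch are hypothetical; actual spot discounts vary by provider, instance type, region, and time.

```python
def blended_hourly_cost(node_count, on_demand_price, spot_discount, spot_fraction):
    """Hourly cost of a node pool where `spot_fraction` of nodes run on spot.

    spot_discount is the fractional discount of spot relative to on-demand.
    """
    spot_nodes = node_count * spot_fraction
    on_demand_nodes = node_count - spot_nodes
    spot_price = on_demand_price * (1 - spot_discount)
    return on_demand_nodes * on_demand_price + spot_nodes * spot_price


# Hypothetical pool: 20 nodes at $0.40/hour on-demand, 60% spot discount.
all_on_demand = blended_hourly_cost(20, 0.40, 0.60, spot_fraction=0.0)
half_spot = blended_hourly_cost(20, 0.40, 0.60, spot_fraction=0.5)
savings = 1 - half_spot / all_on_demand
```

Under these assumed numbers, moving half the pool to spot reduces the pool's cost by about 30%. The per-instance discount applies only to the share of capacity that can tolerate interruption.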

Storage and network optimization addresses significant cost components. Match storage tiers to performance requirements: premium SSD for databases requiring low latency, standard SSD for most applications, HDD-based storage for archival data. Implement lifecycle policies automatically tiering or deleting old snapshots. Audit and clean up orphaned persistent volumes continuing to accrue costs after pods are deleted.

Network costs primarily stem from data transfer charges. Traffic between pods in the same availability zone typically incurs no charges, but cross-zone or cross-region traffic is expensive. Place frequently communicating services in the same zone when possible. Use service meshes to optimize routing and reduce unnecessary hops.

Practical Cost Optimization Techniques

Rightsizing matches allocated resources to actual utilization, eliminating overprovisioned infrastructure waste. Effective rightsizing requires analyzing resource utilization over representative periods. Peak utilization during normal operations should consume 60-80% of allocated capacity, providing headroom for spikes while avoiding waste. Resources consistently running below 40% utilization are candidates for downsizing.
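These thresholds translate directly into a classification rule. In the sketch below, the "downsize" and "keep" bands come from the figures above; treating the 40-60% band as "review" and anything above 80% as "upsize" are assumptions added to make the function total.

```python
def rightsizing_action(peak_utilization):
    """Classify a resource by its peak utilization (fraction of allocated capacity)."""
    if peak_utilization < 0.40:
        return "downsize"   # consistently underused
    if peak_utilization < 0.60:
        return "review"     # assumed gray zone: may be slightly oversized
    if peak_utilization <= 0.80:
        return "keep"       # target band with headroom for spikes
    return "upsize"         # assumed: too little headroom


# Hypothetical fleet with observed peak utilization per resource.
fleet = {"api": 0.72, "batch": 0.25, "cache": 0.52, "db": 0.91}
plan = {name: rightsizing_action(peak) for name, peak in fleet.items()}
```

The input should be peak utilization measured over a representative period, not a single day's average.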

Rightsizing is an ongoing process. Application usage patterns change, new features alter requirements, and traffic grows over time. Review resource utilization quarterly and act on the automated recommendations from cloud provider tools. Start with non-production environments where risks are lower, validate that the changes do not degrade performance, then apply what you learn to production.

Eliminating idle resources prevents waste from unused infrastructure. Common culprits include orphaned load balancers from deleted applications, test databases forgotten after projects complete, stopped instances continuing to incur storage charges, and unattached volumes persisting indefinitely. Organizations routinely discover idle resources consuming 15-30% of their cloud budget.

Implement aggressive resource lifecycle policies. Require expiration tags on all non-production resources. Deploy automated systems deleting resources exceeding expiration dates. Tag production resources with ownership information enabling teams to identify obsolete infrastructure. Conduct monthly audits identifying resources with no recent activity.
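An automated expiration sweep might look like the following sketch. The `expires-on` tag name and its ISO date format are assumptions for illustration; the function only reports candidates and leaves deletion to a separate, reviewed step.

```python
from datetime import date


def expired_resources(resources, today):
    """Return IDs of resources whose 'expires-on' tag is before `today`.

    Resources without the tag are skipped here; a tagging policy should
    catch them separately.
    """
    expired = []
    for resource in resources:
        expires = resource.get("tags", {}).get("expires-on")
        if expires is None:
            continue
        if date.fromisoformat(expires) < today:
            expired.append(resource["id"])
    return expired


# Hypothetical non-production inventory.
inventory = [
    {"id": "test-db-1", "tags": {"expires-on": "2024-03-01"}},
    {"id": "demo-vm-4", "tags": {"expires-on": "2024-09-30"}},
    {"id": "scratch-bucket", "tags": {}},
]
to_delete = expired_resources(inventory, today=date(2024, 6, 1))
```

Passing `today` as a parameter keeps the function deterministic and easy to test.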

Commitment-based discounts offer substantial savings of 30-70% in exchange for committing to specific resources or spend levels over one- or three-year terms. Reserved Instances, Savings Plans, and Committed Use Discounts reduce costs for steady-state workloads with predictable requirements.

Commitment planning requires analyzing usage patterns to identify stable baseline capacity. Start conservatively, committing only to capacity you're confident will be utilized continuously. Layer commitments strategically: use long-term agreements for predictable baseline, medium-term commitments for growing workloads, and on-demand or spot instances for variable demand.
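Two small calculations help with conservative commitment sizing. A commitment is paid for whether or not it is used, so at a discount d it beats on-demand pricing only when the committed capacity is used more than a fraction 1 - d of the time. The sketch below also picks a baseline from a low percentile of observed usage; the 10th percentile is an assumed, deliberately cautious choice.

```python
import math


def break_even_utilization(discount):
    """Fraction of time committed capacity must be used to beat on-demand."""
    return 1.0 - discount


def baseline_commitment(hourly_usage, percentile=10):
    """Capacity level that usage meets or exceeds most of the time
    (nearest-rank percentile of the samples)."""
    if not hourly_usage:
        raise ValueError("need at least one usage sample")
    ordered = sorted(hourly_usage)
    rank = max(1, math.ceil(percentile * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical instance counts sampled across a billing period.
usage = [40, 42, 45, 50, 55, 60, 70, 80, 90, 100]
commit_to = baseline_commitment(usage)
threshold = break_even_utilization(0.30)
```

With these sample figures, committing to 40 instances covers capacity that is always in use, and a 30% discount would still pay off as long as the committed capacity is busy at least 70% of the time.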

Autoscaling policies adjust resource allocation dynamically based on demand, eliminating overprovisioning during low-traffic periods while maintaining performance during peaks. Effective autoscaling reduces costs by 40-60% for workloads with variable traffic patterns.

Horizontal autoscaling adds or removes instances based on metrics like CPU utilization, request count, or custom business metrics. Configure scaling policies conservatively to prevent thrashing. Implement warm-up periods allowing new instances to initialize fully before receiving traffic. Set appropriate minimum and maximum limits preventing excessive scaling.

Building a Cost-Conscious Culture

Technology alone cannot optimize cloud costs sustainably. Cultural changes where teams understand costs, take ownership of spending, and continuously seek efficiency improvements are essential.

Cross-functional collaboration breaks down traditional silos between engineering, finance, and product teams. Establish a FinOps team or Cloud Center of Excellence coordinating optimization efforts. Include representatives from all stakeholder groups. This team develops cost optimization policies, provides training, shares best practices, and arbitrates tradeoff decisions.

Regular cost review meetings bring stakeholders together to analyze spending trends, identify anomalies, and prioritize optimization initiatives. Monthly reviews provide enough frequency to catch issues early while allowing time for implementing improvements. Share cost dashboards widely, ensuring every team can see their spending.

Engineering accountability matters because engineers make the architectural decisions that carry lasting cost implications. Provide engineers with real-time cost feedback integrated into their workflows. Display cost estimates in infrastructure-as-code pull requests. Include cost metrics in application monitoring dashboards. Implement cost budgets for teams or applications with alerts when spending exceeds thresholds.

Incentivize cost-conscious behavior without creating perverse incentives. Celebrate teams achieving cost reductions while maintaining quality. Include cost efficiency in architecture review criteria. Provide training on cloud cost models and optimization techniques.

Product and business alignment ensures optimization considers business impact. Calculate unit economics connecting cloud costs to business metrics like cost per active user, cost per transaction, or cost per feature. These unit economics enable informed decisions about whether costs are appropriate.
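A unit-economics calculation is simple arithmetic, but tracking it over time changes how spending is interpreted. The monthly figures in this sketch are hypothetical.

```python
def cost_per_unit(total_cost, units):
    """Cloud cost divided by a business metric such as active users."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units


# Hypothetical monthly figures.
months = [
    {"month": "2024-01", "cloud_cost": 40_000.0, "active_users": 100_000},
    {"month": "2024-02", "cloud_cost": 46_000.0, "active_users": 125_000},
]
trend = [round(cost_per_unit(m["cloud_cost"], m["active_users"]), 3) for m in months]
```

In this example total spending rises 15% while cost per active user falls from $0.40 to $0.368, which suggests the increase reflects growth rather than waste.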

Involve product managers in cost discussions. When evaluating new features, consider infrastructure costs alongside development effort. When making architectural decisions, include business stakeholders who can weigh cost-performance tradeoffs against customer value.

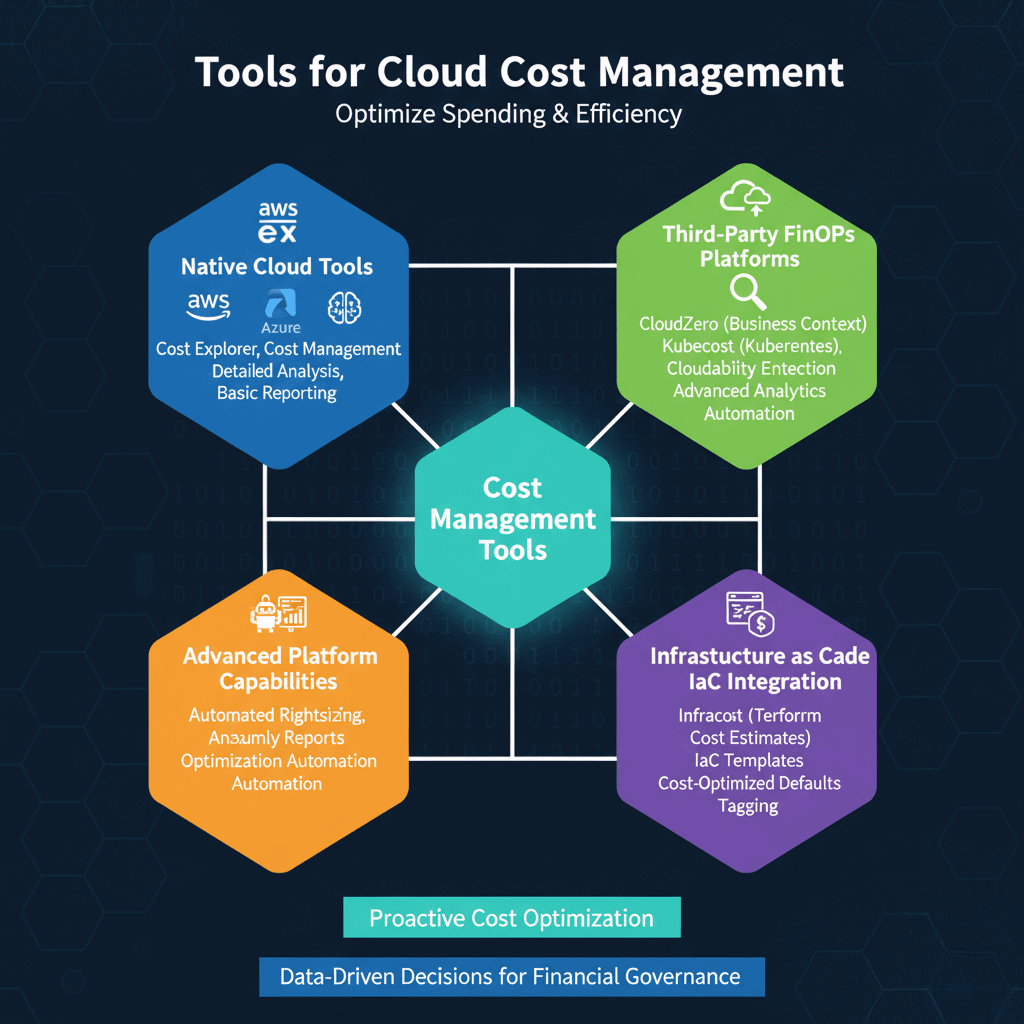

Tools for Cost Management

Cloud provider native tools provide foundational cost management. AWS Cost Explorer, Azure Cost Management, and Google Cloud Cost Management offer detailed spending analysis with filtering by service, tag, or linked account. Native tools excel at raw data access and basic analysis, integrating seamlessly with cloud services without additional procurement.

Third-party FinOps platforms extend native capabilities with unified multi-cloud visibility, advanced analytics, and automation. CloudZero provides cost intelligence with business context. Kubecost specializes in Kubernetes cost visibility. Cloudability offers enterprise-scale cost management with sophisticated allocation and showback capabilities.

These platforms typically offer automated rightsizing recommendations, anomaly detection with machine learning, custom reporting and dashboards, and APIs for integration with existing tools. Advanced platforms can also apply approved optimizations automatically.

Infrastructure as Code integration enables incorporating cost optimization into infrastructure provisioning. Cost estimation tools like Infracost analyze Terraform plans, showing cost implications of proposed changes in pull requests. This shift-left approach prevents costly mistakes, making cost optimization proactive rather than reactive.

Establish IaC templates with cost-optimized defaults. Create instance type selections favoring cost-efficient options. Include autoscaling configurations by default. Implement tagging requirements ensuring all resources support cost allocation.

Measuring Success

Track both financial metrics showing cost efficiency and operational metrics indicating program maturity.

Financial metrics include unit economics connecting spending to business metrics like cost per active user or cost per transaction. Cost avoidance quantifies savings from optimization initiatives. Waste metrics identify inefficiency: track spending on idle resources, unattached volumes, and oversized instances. Leading organizations achieve waste percentages below 10%.

Operational metrics include cost allocation coverage measuring the percentage of spending attributed to specific teams or applications. Target 90%+ allocation coverage. Forecast accuracy compares predicted spending to actual costs. Organizations with mature FinOps achieve forecast accuracy within 5-10% variance.
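Both operational metrics reduce to simple ratios. The sketch below measures forecast error relative to the forecast; some teams divide by actual spend instead, so that choice is an assumption. The dollar figures are hypothetical.

```python
def allocation_coverage(allocated_spend, total_spend):
    """Share of total spend attributed to a team or application."""
    if total_spend <= 0:
        raise ValueError("total_spend must be positive")
    return allocated_spend / total_spend


def forecast_error(forecast, actual):
    """Absolute forecast error as a fraction of the forecast."""
    if forecast <= 0:
        raise ValueError("forecast must be positive")
    return abs(actual - forecast) / forecast


# Hypothetical monthly figures in dollars.
coverage = allocation_coverage(184_000, 200_000)
error = forecast_error(forecast=200_000, actual=214_000)
meets_targets = coverage >= 0.90 and error <= 0.10
```

Here 92% of spend is allocated and the forecast is off by 7%, so both figures fall within the targets described above.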

Coverage ratios for commitment-based discounts show the percentage of eligible spending covered by reserved instances or savings plans. Higher coverage indicates effective commitment planning, balanced against flexibility to avoid over-commitment.

Conclusion

Cloud-native cost optimization is critical for organizations that rely on modern cloud architectures. The FinOps framework provides a structured approach through its Inform, Optimize, and Operate phases. Organizations that build these capabilities systematically start with basic cost tracking and advance to sophisticated automation and predictive optimization.

Successful cost optimization extends beyond technology to culture and collaboration. Breaking down silos between engineering, finance, and product teams creates shared accountability. Empowering engineers with cost visibility while educating finance teams on technical drivers builds cross-functional understanding necessary for effective optimization.

The journey requires patience and persistence, but results justify investment. Organizations with mature FinOps practices make informed decisions about where to invest and how to balance competing priorities. They transform cloud costs from operational burdens into strategic levers for competitive advantage through reduced waste, optimized resource utilization, and alignment between technology spending and business value.