Horizontal vs Vertical Scaling for SaaS Applications

Learn when to scale vertically (up) vs horizontally (out) for SaaS applications. Compare costs, architecture requirements, and hybrid approaches for sustainable growth.

TL;DR

- Vertical scaling (scaling up) adds more power to existing servers: more CPU, memory, faster storage. It is simple to implement and requires minimal application changes, but it has hard limits (maximum instance size) and creates single points of failure. Best for databases, legacy apps, and development environments.

- Horizontal scaling (scaling out) adds more servers to share the load. Theoretically unlimited capacity, enables high availability, and supports elastic auto-scaling (pay only for what you use). Requires stateless application design, load balancers, and distributed storage. Higher operational complexity.

- Statelessness is the key enabler: To scale horizontally, your application must not store session data locally. Move state to shared storage (Redis, database) so any server can handle any request. Without this, horizontal scaling becomes impossible or unsafe.

- Cost dynamics differ: vertical scaling means paying for a peak-sized server around the clock, and the largest or specialized instances can cost more per unit of capacity. Horizontal scaling with auto-scaling matches costs to demand: you pay for peak capacity only during peak traffic. Reserved instances favor predictable baseline capacity.

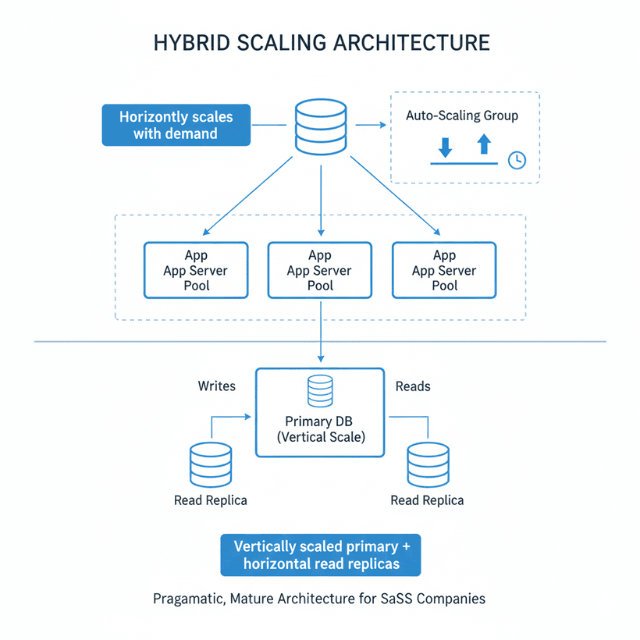

- Most production architectures are hybrid: Stateless application tiers (web servers, APIs) scale horizontally. Databases typically scale vertically first, then add read replicas, and finally shard for horizontal write scaling. Caches cluster horizontally; background workers scale horizontally.

- Build for horizontal scaling architecturally, deploy vertically initially. Over-engineering for scale you don't need wastes resources, but designing stateless services from the start costs little extra and preserves future optionality.

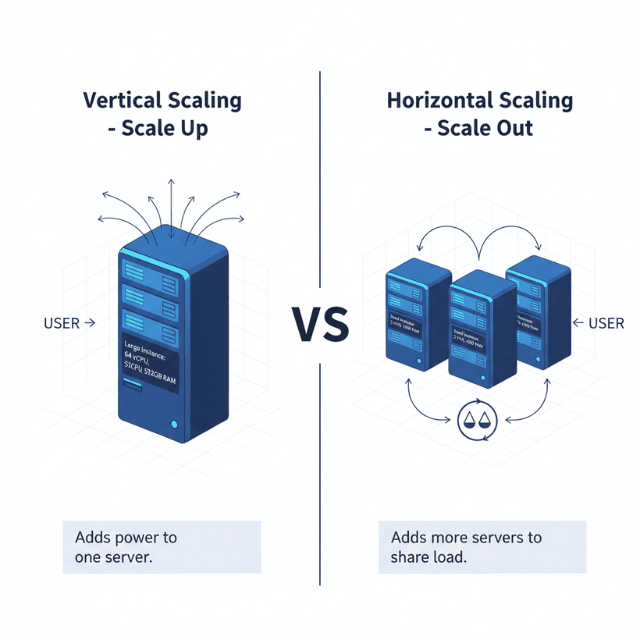

As your SaaS application grows, you face a fundamental question: scale up or scale out? Vertical scaling adds more power to existing servers. Horizontal scaling adds more servers to share the load. Each approach has distinct characteristics, costs, and trade-offs. Understanding these differences enables informed architecture decisions.

Understanding Scaling Approaches

Vertical scaling (scaling up) increases the resources of individual servers. Adding more CPU cores, memory, or faster storage makes each server more powerful. The application runs on fewer, larger machines.

Horizontal scaling (scaling out) distributes workload across multiple servers. Instead of one powerful server, many smaller servers share the work. Load balancers distribute traffic across the server pool.

Both approaches increase capacity, but they do so differently. Vertical scaling is often simpler to implement but has natural limits. Horizontal scaling can grow further but requires application architecture to support distribution.

The choice affects more than just infrastructure. It influences application design, operational practices, failure modes, and costs. Making the right choice early prevents expensive refactoring later.

Most modern SaaS applications benefit from horizontal scaling capability, even if initial deployments use few instances. Building for horizontal scaling from the start costs little extra but provides significant optionality.

Neither approach is universally superior. Your specific application, traffic patterns, and operational capabilities determine the best fit. Understanding both enables choosing appropriately.

When Vertical Scaling Makes Sense

Vertical scaling requires minimal application changes. Deploying on larger servers doesn't require code modifications. For applications not designed for distribution, vertical scaling provides immediate capacity.

Single-instance databases often scale vertically. Traditional relational databases like PostgreSQL and MySQL can use additional CPU, memory, and faster storage effectively, whereas scaling their writes out through sharding requires significant application changes.

Development and staging environments typically use vertical scaling. The operational simplicity of single servers suits non-production workloads. There's little benefit to complex distributed staging environments.

Applications with tight coupling between components may require vertical scaling. Processes that share significant state in memory can't easily distribute across servers. Refactoring for distribution takes time.

Legacy applications often scale vertically by necessity. Older architectures may assume single-server deployment. Modernizing for horizontal scaling requires investment that may not be justified.

Vertical scaling works until it doesn't. Eventually, you reach the largest available server size. At that point, horizontal scaling becomes necessary. Planning for this transition, even if distant, is prudent.

Vertical scaling has natural limits. The largest cloud instances provide impressive resources, but they're finite. No single server, however powerful, can match a properly distributed cluster's capacity.

When Horizontal Scaling Excels

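Horizontal scaling enables theoretically unlimited capacity. Need to handle 10x traffic? Add roughly 10x servers, provided the tier is stateless and shared dependencies such as the database can absorb the extra load. The ceiling is budget and architecture, not hardware availability.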
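Linear scaling is the ideal case. A rough capacity estimate, assuming you have measured the sustainable requests per second of one instance and want spare headroom for spikes and instance failures, might look like this (the numbers and the `headroom` and `min_instances` defaults are illustrative assumptions):

```python
import math


def instances_needed(peak_rps: float, rps_per_instance: float,
                     headroom: float = 0.3, min_instances: int = 2) -> int:
    """Estimate how many identical stateless instances a tier needs.

    Assumes near-linear scaling: each instance adds the same throughput,
    which only holds if shared dependencies (database, cache) keep up.
    `headroom` reserves spare capacity (0.3 = 30%) for spikes and failures;
    `min_instances` keeps at least two instances for redundancy.
    """
    if rps_per_instance <= 0:
        raise ValueError("rps_per_instance must be positive")
    raw = peak_rps * (1 + headroom) / rps_per_instance
    return max(min_instances, math.ceil(raw))


# 10x traffic needs 10x instances under these assumptions.
print(instances_needed(1000, 100))    # 13
print(instances_needed(10000, 100))   # 130
```

The floor of two instances is a design choice: even a tiny tier keeps one spare so a single failure doesn't take it offline.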

Stateless application tiers scale horizontally naturally. Web servers processing independent requests can distribute across many instances. Any server can handle any request.

High availability requires horizontal scaling. Redundancy across multiple instances protects against single-server failures. Auto-healing can replace failed instances automatically.

Cloud-native design assumes horizontal scaling. Kubernetes, auto-scaling groups, and similar technologies optimize for adding and removing instances dynamically.

Traffic variability favors horizontal scaling. Auto-scaling adjusts capacity to current demand. Peak traffic adds instances; quiet periods remove them. This elasticity reduces costs during low-demand periods.
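Target-tracking auto-scalers typically derive the desired instance count from how far a metric sits from its target. The sketch below follows the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler, `desired = ceil(current * currentMetric / targetMetric)`, with a tolerance band (Kubernetes defaults to 0.1) to avoid flapping. The min/max bounds are hypothetical, and real auto-scalers add stabilization windows and cooldowns on top.

```python
import math


def desired_replicas(current: int, current_metric: float, target_metric: float,
                     min_replicas: int = 2, max_replicas: int = 20,
                     tolerance: float = 0.1) -> int:
    """Proportional scaling rule in the style of Kubernetes' HPA.

    current_metric / target_metric is, for example, average CPU utilization
    divided by the target utilization. Within +/- tolerance of 1.0 the count
    is left unchanged to avoid flapping.
    """
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current
    else:
        desired = math.ceil(current * ratio)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


print(desired_replicas(4, 90, 60))   # 6: CPU at 90% vs a 60% target, scale out
print(desired_replicas(6, 15, 60))   # 2: quiet period, scale in to the floor
print(desired_replicas(4, 63, 60))   # 4: within tolerance, no change
```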

Geographic distribution requires horizontal scaling. Serving users globally from multiple regions isn't possible with single servers. Horizontal approaches enable regional deployment.

Rolling deployments become possible with horizontal scaling. Updating servers one at a time enables zero-downtime deployments. New code rolls out progressively.
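A rolling deployment updates the fleet in small batches so most instances keep serving traffic at every step. This sketch only plans the batches; `max_unavailable` mirrors the idea behind the Kubernetes rolling-update setting of the same name, and the instance names are hypothetical.

```python
from typing import Iterator, List


def rolling_batches(instances: List[str], max_unavailable: int) -> Iterator[List[str]]:
    """Yield batches of instances to take out of rotation and update.

    At most `max_unavailable` instances update at once, so at least
    len(instances) - max_unavailable keep serving throughout the rollout.
    In a real rollout each batch must pass health checks before the next starts.
    """
    if max_unavailable < 1:
        raise ValueError("max_unavailable must be at least 1")
    for start in range(0, len(instances), max_unavailable):
        yield instances[start:start + max_unavailable]


fleet = [f"web-{i}" for i in range(5)]
for batch in rolling_batches(fleet, max_unavailable=2):
    serving = len(fleet) - len(batch)
    print(f"updating {batch}, {serving} instances still serving")
```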

Architectural Requirements for Horizontal Scaling

Stateless design is the foundation. Servers should not store session data locally. Any request should be serviceable by any server. Move state to shared storage like databases or caches.

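```python
from flask import Flask, jsonify, request
import redis

app = Flask(__name__)
redis_client = redis.Redis(host="localhost", port=6379, decode_responses=True)

def current_user_id():
    # Hypothetical helper: in a real app this comes from your auth layer.
    return request.headers.get("X-User-Id", "anonymous")

# Both versions are shown in one app for comparison; a real service keeps only one.

# Stateful (prevents horizontal scaling): sessions live in this process's
# memory, so a request routed to another server won't find them.
user_sessions = {}

@app.route("/api/session-local")
def get_session_local():
    return jsonify(user_sessions.get(current_user_id()))

# Stateless (enables horizontal scaling): every instance reads the same
# Redis store, so any server can answer.
@app.route("/api/session")
def get_session():
    return jsonify(redis_client.get(f"session:{current_user_id()}"))
```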

Load balancers distribute traffic. Without proper load distribution, some servers overload while others idle. Load balancer configuration affects how evenly work distributes.
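Round robin is the simplest distribution strategy a load balancer can use: hand each new request to the next server in the pool. A minimal in-process sketch, with hypothetical backend addresses (production load balancers also commonly offer least-connections and weighted strategies):

```python
import itertools
from typing import List


class RoundRobinBalancer:
    """Cycle through a fixed pool of backends, one request at a time."""

    def __init__(self, backends: List[str]):
        if not backends:
            raise ValueError("need at least one backend")
        self._cycle = itertools.cycle(backends)

    def next_backend(self) -> str:
        return next(self._cycle)


lb = RoundRobinBalancer(["10.0.0.1:8080", "10.0.0.2:8080", "10.0.0.3:8080"])
print([lb.next_backend() for _ in range(4)])
# ['10.0.0.1:8080', '10.0.0.2:8080', '10.0.0.3:8080', '10.0.0.1:8080']
```

Round robin assumes requests cost roughly the same; when some requests are much heavier than others, least-connections tends to spread load more evenly.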

Health checks enable automatic failover. Load balancers and orchestrators must detect failed instances and route around them. Applications need health check endpoints.
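Health checks let the load balancer stop sending traffic to a failed instance. The sketch below assumes a hypothetical `probe` callable (in practice, an HTTP request to a health endpoint your application exposes) and marks an instance unhealthy after a configurable number of consecutive failures, similar in spirit to the unhealthy thresholds that cloud load balancers let you configure.

```python
from typing import Callable, Dict, List


class HealthCheckedPool:
    """Track backend health and route only to healthy instances."""

    def __init__(self, backends: List[str], probe: Callable[[str], bool],
                 unhealthy_threshold: int = 3):
        self.backends = backends
        self.probe = probe  # returns True if the instance answered OK
        self.unhealthy_threshold = unhealthy_threshold
        self.failures: Dict[str, int] = {b: 0 for b in backends}

    def run_checks(self) -> None:
        """One round of health checks; a success resets the failure count."""
        for backend in self.backends:
            if self.probe(backend):
                self.failures[backend] = 0
            else:
                self.failures[backend] += 1

    def healthy(self) -> List[str]:
        return [b for b in self.backends
                if self.failures[b] < self.unhealthy_threshold]


# Simulated probe: "app-2" has crashed and never answers.
pool = HealthCheckedPool(["app-1", "app-2", "app-3"], probe=lambda b: b != "app-2")
for _ in range(3):
    pool.run_checks()
print(pool.healthy())   # ['app-1', 'app-3']
```

Requiring several consecutive failures keeps one slow response from ejecting an otherwise healthy instance.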

Use shared storage for persistent data. Files uploaded to one server must be accessible from the others, so keep them off local disk. Object storage like S3 provides shared file access.

Configuration management becomes more complex. Changes must propagate to all instances. Environment variables, configuration services, or container orchestration manage this distribution.
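Environment variables are a common way to give every instance identical configuration without baking it into the image. A minimal sketch; the variable names (`DATABASE_URL`, `REDIS_URL`, `MAX_WORKERS`) and defaults are hypothetical:

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class Settings:
    database_url: str
    redis_url: str
    max_workers: int


def load_settings(env: Optional[Mapping[str, str]] = None) -> Settings:
    """Read configuration from the environment so all instances share one source of truth."""
    env = os.environ if env is None else env
    try:
        database_url = env["DATABASE_URL"]  # required: fail fast if missing
    except KeyError:
        raise RuntimeError("DATABASE_URL is not set") from None
    return Settings(
        database_url=database_url,
        redis_url=env.get("REDIS_URL", "redis://localhost:6379/0"),
        max_workers=int(env.get("MAX_WORKERS", "4")),
    )


settings = load_settings({"DATABASE_URL": "postgresql://db.internal/app", "MAX_WORKERS": "8"})
print(settings.max_workers)   # 8
```

Failing at startup on missing required settings means a misconfigured instance never passes its health check or joins the pool.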

Deployment automation is essential. Manually updating dozens of servers isn't practical. CI/CD pipelines and orchestration tools automate deployment across the fleet.

Cost Considerations

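Vertical scaling costs can escalate. A server sized for peak load bills at that size around the clock, and at the top of the range, larger or specialized instances can cost more than proportionally to their resources. Within a standard cloud instance family, on-demand pricing is often close to linear, so check real prices rather than assuming a fixed premium.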

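Horizontal scaling adds capacity in smaller increments. Many smaller instances may cost less than a few larger instances for equivalent total resources, and you can add or remove one instance at a time instead of jumping to the next instance size.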

Reserved instances favor predictable baseline capacity. For the portion of workload that's consistent, reserved pricing reduces costs significantly.

Auto-scaling matches costs to demand. Only pay for capacity when you need it. Traffic-based scaling reduces off-peak spending.

Operational costs differ. Horizontal scaling requires more operational sophistication. Load balancers, health checks, and distributed deployments add complexity.

Licensing may favor vertical scaling. Some software licenses are per-instance. Fewer larger instances may cost less in licensing than many smaller ones.

Monitoring and logging costs scale with instances. More servers mean more metrics and logs. Factor in observability costs when comparing approaches.

Hybrid Approaches

Most production architectures combine both approaches. Different components scale differently based on their characteristics.

Application tiers typically scale horizontally. Web servers and API services handle independent requests. Adding instances is straightforward.

Databases often start with vertical scaling. The primary database scales up initially. Read replicas add horizontal read capacity. Sharding adds horizontal write capacity later.
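Adding read replicas usually means the application (or a proxy) routes writes to the primary and spreads reads across replicas. A minimal routing sketch, assuming hypothetical connection names and a naive check on the leading SQL keyword. Real routers must also handle transactions, statements like `SELECT ... FOR UPDATE`, and replication lag (a read right after a write may not see it on a replica).

```python
import itertools
from typing import List


class ReadWriteRouter:
    """Send writes to the primary and round-robin reads across replicas."""

    READ_PREFIXES = ("SELECT", "SHOW", "EXPLAIN")

    def __init__(self, primary: str, replicas: List[str]):
        self.primary = primary
        # Fall back to the primary if no replicas exist yet.
        self._replicas = itertools.cycle(replicas or [primary])

    def route(self, sql: str) -> str:
        statement = sql.lstrip().upper()
        if statement.startswith(self.READ_PREFIXES):
            return next(self._replicas)
        return self.primary


router = ReadWriteRouter("db-primary", ["db-replica-1", "db-replica-2"])
print(router.route("SELECT * FROM invoices WHERE id = 7"))            # db-replica-1
print(router.route("UPDATE invoices SET paid = true WHERE id = 7"))   # db-primary
print(router.route("select count(*) from users"))                     # db-replica-2
```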

Cache layers scale horizontally with clustering. Redis Cluster and similar technologies distribute cache data across nodes.
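Redis Cluster distributes keys across 16,384 hash slots, computing each key's slot as the CRC16 of the key modulo 16384 and assigning slot ranges to nodes; a `{...}` hash tag makes related keys hash to the same slot. The sketch below reimplements that mapping for illustration only. The even, in-order slot split and the node names are assumptions, since a real cluster's slot assignment comes from the cluster itself.

```python
from typing import List


def crc16_xmodem(data: bytes) -> int:
    """CRC-16/XMODEM (polynomial 0x1021, initial value 0), the variant Redis Cluster uses."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def hash_slot(key: str) -> int:
    raw = key.encode()
    # Hash tag: if the key contains "{...}" with a non-empty body, hash only that part.
    start = raw.find(b"{")
    if start != -1:
        end = raw.find(b"}", start + 1)
        if end > start + 1:
            raw = raw[start + 1:end]
    return crc16_xmodem(raw) % 16384


def node_for(key: str, nodes: List[str]) -> str:
    """Map a key's slot to a node, assuming slots are split evenly in order."""
    slots_per_node = (16384 + len(nodes) - 1) // len(nodes)
    return nodes[hash_slot(key) // slots_per_node]


nodes = ["cache-a", "cache-b", "cache-c"]
print(hash_slot("123456789"))          # 12739
print(node_for("session:42", nodes))
# Hash tags keep a user's related keys on the same node:
print(hash_slot("{user:42}:cart") == hash_slot("{user:42}:profile"))   # True
```

Hash tags matter because multi-key operations in Redis Cluster only work when all keys live in the same slot.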

Background workers scale horizontally. Job processing distributes across worker pools. Adding workers increases processing capacity.
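Background processing scales out by adding consumers to a shared queue: each worker pulls the next job, so adding workers raises throughput as long as jobs are independent. The in-process sketch below uses the standard library's `queue` and `threading` modules as a stand-in for a real broker. In production the queue would typically live in a service such as Redis, SQS, or RabbitMQ, with workers on separate instances; `process_job` is hypothetical.

```python
import queue
import threading
from typing import List


def process_job(job: int) -> int:
    """Hypothetical job: stands in for sending an email, resizing an image, etc."""
    return job * job


def run_workers(jobs: List[int], num_workers: int) -> List[int]:
    tasks: "queue.Queue[int]" = queue.Queue()
    results: List[int] = []
    lock = threading.Lock()

    def worker() -> None:
        while True:
            try:
                job = tasks.get_nowait()
            except queue.Empty:
                return  # no more work: this worker exits
            result = process_job(job)
            with lock:
                results.append(result)
            tasks.task_done()

    for job in jobs:
        tasks.put(job)
    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sorted(results)


print(run_workers(list(range(10)), num_workers=4))
```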

Regional deployment adds geographic horizontal scaling. Deploying application stacks in multiple regions serves global users. This horizontal scaling operates at a different level than instance scaling.

Start with appropriate initial sizing, then grow as needed. Over-engineering for scale you don't need wastes resources. Build for horizontal scaling architecturally, but don't deploy excessive initial capacity.

Making the Right Choice

Assess your application's current architecture. Can it scale horizontally without major changes? If not, factor in refactoring costs when comparing approaches.

Consider your growth trajectory. Modest, predictable growth may suit vertical scaling. Rapid or uncertain growth favors horizontal scaling's flexibility.

Evaluate your operational maturity. Horizontal scaling requires more operational sophistication. Ensure your team can manage distributed deployments.

Examine your traffic patterns. Steady traffic reduces auto-scaling benefits. Highly variable traffic makes elastic horizontal scaling valuable.

Plan for failure scenarios. Single-server architectures have single points of failure. Determine acceptable risk levels.

Review cloud provider options. Instance sizes and pricing vary. Understanding available options informs realistic planning.

Start simple, grow as needed. Many successful SaaS applications started on single servers. Don't over-engineer initially, but architect for future horizontal scaling.

| Factor | Vertical Scaling | Horizontal Scaling |
|---|---|---|
| Implementation complexity | Low | Higher |
| Maximum capacity | Limited by hardware | Theoretically unlimited |
| High availability | Challenging | Natural fit |
| Cost at scale | Higher per unit | More efficient |
| Operational complexity | Lower | Higher |
| Application changes | Minimal | Often required |

Conclusion

The choice between vertical and horizontal scaling isn't binary; it's a spectrum that shifts as your SaaS matures. Early-stage applications rightly start with vertical scaling: it's simple, cost-effective at low volumes, and gets you to market faster. But building for horizontal scaling from day one (stateless services, shared storage, health checks) creates architectural runway for when growth accelerates.

As you scale, the hybrid model becomes inevitable: stateless application tiers auto-scale horizontally to meet variable demand; databases scale their primary instance vertically while adding read replicas horizontally; caching layers cluster; and background workers scale independently.

The cloud-native architecture that enables horizontal scaling also delivers high availability, zero-downtime deployments, and geographic distribution. Invest in the architectural foundations early, deploy with the simplest capacity model that meets current needs, and evolve your scaling strategy as growth demands. The cost of refactoring for horizontal scaling under pressure far exceeds the cost of designing for it from the start.

Frequently Asked Questions

At what point should I move from vertical to horizontal scaling?

When you hit a resource limit or cost inefficiency. Specific triggers:

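- You approach the largest instance size available. Cloud providers offer very large machines (AWS's x1.32xlarge, for example, has 128 vCPUs and about 2 TB of memory, and specialized High Memory instances go further), but each step up is a large jump in hourly cost and is still a single machine.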

- Single-server cost becomes disproportionate. At some point, two smaller instances cost less than one larger instance for equivalent capacity.

- Availability requirements demand redundancy. If downtime from a single server failure is unacceptable, you need horizontal scaling for failover.

- Traffic becomes highly variable. An auto-scaling horizontal cluster can cost substantially less than a large instance running 24/7; how much less depends on the gap between peak and off-peak load and on how long peaks last.

Start architecting for horizontal scaling before you hit the wall: refactoring under growth pressure is painful.

How do I make my application stateless for horizontal scaling?

Move session state out of local memory. Key patterns:

- Session data: Store in Redis or Memcached (shared cache).

- Uploaded files: Use object storage (S3, Cloud Storage) not local disk.

- In-memory caches: Use distributed cache (Redis Cluster), not local server memory.

- Configuration: Externalize to environment variables or config services.

- Logging: Send to centralized logging, don't write to local disk.

- Authentication tokens: Use self-contained signed tokens (such as JWTs) that any server can validate without server-side session storage.

If you can restart an instance without losing data or user sessions, you're stateless enough to scale horizontally.
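Signed tokens keep servers stateless because any instance holding the signing key can verify a token without a session lookup. The sketch below uses a simplified HMAC-signed token built from the standard library to show the idea. It is not a JWT implementation: in production you would use a maintained JWT library, add expiry checks, and load the key (`SECRET_KEY`, a hypothetical name here) from a secrets manager rather than hard-coding it.

```python
import base64
import hashlib
import hmac
import json
from typing import Optional

SECRET_KEY = b"replace-me"  # hypothetical: shared by every instance via config


def sign(payload: dict) -> str:
    body = base64.urlsafe_b64encode(json.dumps(payload, sort_keys=True).encode()).decode()
    sig = hmac.new(SECRET_KEY, body.encode(), hashlib.sha256).hexdigest()
    return f"{body}.{sig}"


def verify(token: str) -> Optional[dict]:
    """Return the payload if the signature is valid; no server-side lookup needed."""
    try:
        body, sig = token.rsplit(".", 1)
    except ValueError:
        return None
    expected = hmac.new(SECRET_KEY, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):  # constant-time comparison
        return None
    return json.loads(base64.urlsafe_b64decode(body.encode()))


token = sign({"user_id": 42})
print(verify(token))          # {'user_id': 42}
print(verify(token + "0"))    # None: tampered signature
```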

What's the cost trade-off between vertical and horizontal scaling?

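Vertical scaling costs are not always superlinear, so check real prices. Within a standard AWS instance family, on-demand pricing is proportional to size: m5.large ($0.096/hr) to m5.xlarge ($0.192/hr) is exactly 2x, and m5.24xlarge ($4.608/hr) costs 48x an m5.large for 48x the vCPUs and memory. The bigger cost issue is utilization: a large instance sized for peak runs, and bills, around the clock, and premium or specialized instance types can cost more per unit of capacity.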

Horizontal scaling with auto-scaling matches capacity to demand: you pay for 100% capacity only during peak and less during off-peak hours. Compared with running peak capacity 24/7, the saving is 1 minus the ratio of average capacity to peak capacity. If your peaks are 2x your valleys, that saving stays below 50% and shrinks the longer peaks last.
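The saving can be computed directly from an hourly load profile. A sketch, assuming capacity tracks load perfectly (an idealization: real auto-scaling lags demand and keeps headroom) and using a hypothetical profile:

```python
from typing import List


def autoscaling_savings(hourly_instances: List[int]) -> float:
    """Fraction saved vs. running peak capacity 24/7, assuming perfect tracking."""
    peak = max(hourly_instances)
    average = sum(hourly_instances) / len(hourly_instances)
    return 1 - average / peak


# 2x peak vs valley: 8 busy hours at 20 instances, 16 quiet hours at 10.
profile = [20] * 8 + [10] * 16
print(f"{autoscaling_savings(profile):.0%}")   # 33%
```

With a flat profile the saving drops to zero, which is why steady traffic gains little from auto-scaling.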

Break-even analysis: For consistent 24/7 load, vertical scaling with reserved instances is often cheaper. For variable or growing load, horizontal with auto-scaling wins. Use cloud provider cost calculators to model your specific workload.
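A simple break-even model compares three options over one day: reserving peak capacity 24/7, auto-scaling everything on demand, and reserving the baseline while auto-scaling the rest. The $0.20 hourly price and the 40% reservation discount below are hypothetical placeholders, not quoted rates; substitute figures from your provider's pricing calculator.

```python
from typing import Dict, List


def daily_costs(hourly_instances: List[int], on_demand_price: float,
                reserved_discount: float) -> Dict[str, float]:
    """Compare strategies over an hourly profile (prices per instance-hour)."""
    peak = max(hourly_instances)
    valley = min(hourly_instances)
    reserved_price = on_demand_price * (1 - reserved_discount)
    hours = len(hourly_instances)
    return {
        # Reserve enough for peak and run it around the clock.
        "reserved_peak": peak * hours * reserved_price,
        # Auto-scale everything on demand.
        "on_demand_autoscaled": sum(hourly_instances) * on_demand_price,
        # Reserve the baseline, auto-scale the rest on demand.
        "hybrid": valley * hours * reserved_price
        + sum(n - valley for n in hourly_instances) * on_demand_price,
    }


profile = [20] * 8 + [10] * 16  # hypothetical load profile
for name, cost in daily_costs(profile, on_demand_price=0.20, reserved_discount=0.40).items():
    print(f"{name}: ${cost:.2f}/day")
```

In this made-up example the hybrid option is cheapest, but the ranking depends on the load profile and the discount, which is why modeling your own numbers matters.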
