Comparing Optimization Techniques Across PHP, Node.js, and Python

Compare optimization techniques across PHP, Node.js, and Python. Learn runtime architectures, concurrency models, memory management, and caching strategies to maximize performance in your stack.

TL;DR

- PHP: Process-per-request model with shared-nothing architecture. Each request runs in isolation—memory leaks don't accumulate, but per-request overhead exists. Optimize with OPcache for bytecode caching, PHP-FPM process tuning, and external caches (Redis/Memcached). PHP 8.x JIT improves CPU-bound performance.

- Node.js: Event-driven, single-threaded with non-blocking I/O. Excels at I/O-bound and real-time apps but blocks on CPU-heavy tasks. Optimize with worker threads for CPU work, cluster mode for multi-core, and careful memory management (V8 heap limits, leak detection).

- Python: GIL-limited threading but multiple concurrency options. Use asyncio for I/O concurrency, multiprocessing for CPU parallelism. Memory management relies on reference counting + garbage collection. Optimize with connection pooling, lru_cache for functions, and async frameworks (FastAPI, Sanic) for I/O performance.

- Choose by workload: PHP for traditional web apps (mature ecosystem), Node.js for I/O-heavy/real-time (chat, streaming), Python for data/ML workloads (NumPy, Pandas) or rapid development with async capabilities.

PHP, Node.js, and Python power a large share of SaaS applications today. Each language has distinct runtime characteristics, memory models, and concurrency approaches that affect optimization strategies.

Understanding these differences helps teams choose the right language for their use case and optimize applications effectively regardless of the stack they've chosen.

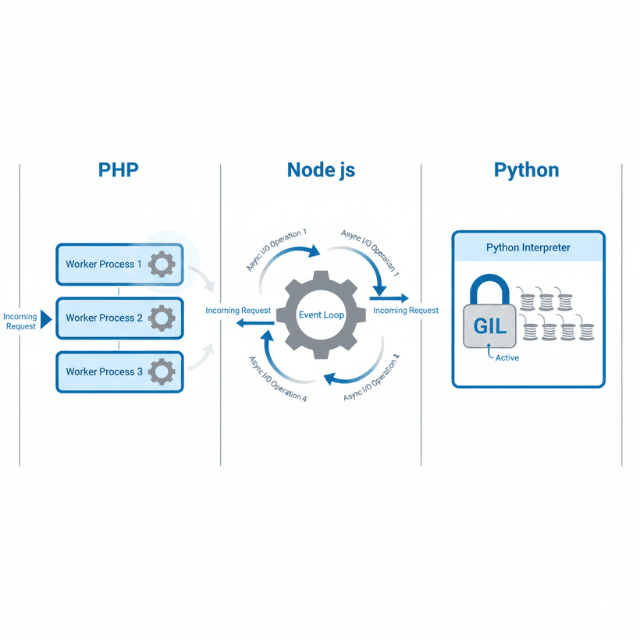

Runtime Architecture Differences

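PHP traditionally follows a shared-nothing architecture. Each request starts from a clean state: the runtime loads the application, handles the request, and discards all request data when it finishes. Under classic CGI this meant a fresh process for every request. This isolation prevents memory leaks from accumulating across requests but creates per-request overhead.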

PHP-FPM (FastCGI Process Manager) maintains a pool of worker processes. Workers handle multiple requests sequentially, reducing process spawning overhead. OPcache stores compiled bytecode, eliminating parsing and compilation on each request.

; PHP-FPM pool configuration
[www]
pm = dynamic
pm.max_children = 50
pm.start_servers = 5
pm.min_spare_servers = 5
pm.max_spare_servers = 35

Node.js uses an event-driven, single-threaded architecture. The event loop handles I/O asynchronously while JavaScript execution happens on one thread. This model excels at I/O-bound workloads but requires careful handling of CPU-intensive tasks.

// Node.js async I/O handling
const results = await Promise.all([
  fetchFromDatabase(query1),
  fetchFromAPI(endpoint),
  readFromCache(key)
]);

Python's default interpreter (CPython) uses the Global Interpreter Lock (GIL). Only one thread executes Python bytecode at a time. This simplifies memory management but limits true parallelism for CPU-bound work.

ASGI servers like Uvicorn enable async Python applications. Async/await syntax provides concurrency similar to Node.js for I/O-bound workloads.
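To make the ASGI model more concrete, here is a minimal sketch of a bare ASGI application with no framework. The name `app`, the response body, and the short sleep are illustrative assumptions; a real application would normally let a framework such as FastAPI produce this callable.

```python
import asyncio

async def app(scope, receive, send):
    """Bare ASGI callable: the server awaits it once per incoming request."""
    if scope["type"] != "http":
        return  # ignore lifespan and websocket events in this sketch
    # Awaiting yields control, so the server can make progress on other requests
    await asyncio.sleep(0.01)  # stand-in for a non-blocking database or API call
    await send({
        "type": "http.response.start",
        "status": 200,
        "headers": [(b"content-type", b"text/plain")],
    })
    await send({"type": "http.response.body", "body": b"hello"})
```

Saved in a hypothetical `main.py`, this could be served with `uvicorn main:app`.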

Concurrency Models Compared

PHP handles concurrency at the process level. Each PHP-FPM worker handles one request at a time. More workers enable more concurrent requests, limited by available memory.
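Because memory is the limiting factor, a common rule of thumb is to size pm.max_children from the memory left for PHP divided by the average worker footprint. The sketch below shows that arithmetic; the function name and all figures are hypothetical, and real worker sizes should be measured on your own servers.

```python
def max_fpm_children(available_mb: int, avg_worker_mb: int, reserve_mb: int = 0) -> int:
    """Estimate pm.max_children as (available - reserve) // per-worker memory."""
    if avg_worker_mb <= 0:
        raise ValueError("avg_worker_mb must be positive")
    usable = max(available_mb - reserve_mb, 0)
    return usable // avg_worker_mb

# Hypothetical host: 4096 MB of RAM, 1024 MB reserved for the OS and other
# services, and PHP-FPM workers that average 60 MB each.
print(max_fpm_children(4096, 60, reserve_mb=1024))  # 51
```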

PHP 8.1 introduced Fibers for cooperative multitasking. Libraries like ReactPHP and Amp provide async capabilities. These approaches remain less common than traditional synchronous PHP.

Node.js handles thousands of concurrent connections on a single process. The event loop multiplexes I/O without blocking. CPU-bound operations block the event loop and must be offloaded.

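// Worker threads for CPU-intensive tasks
const { Worker, isMainThread, parentPort } = require('worker_threads');

if (isMainThread) {
  // Main thread: start this same file as a worker and send it the input
  const worker = new Worker(__filename);
  worker.on('message', (result) => console.log(result));
  worker.on('error', (err) => console.error(err));
  worker.postMessage({ data: complexData }); // complexData: your input payload
} else {
  // Worker thread: run the heavy computation off the main event loop
  parentPort.on('message', (msg) => {
    const result = expensiveComputation(msg.data);
    parentPort.postMessage(result);
  });
}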

Cluster mode runs multiple Node.js processes. The primary process (called the master in older Node.js versions) distributes incoming connections across workers, which lets one application use multiple CPU cores.
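The same constraint applies to any single-threaded event loop, including Python's asyncio. The sketch below measures how late a 50 ms timer fires when other work either blocks the loop or is offloaded. The function name `measure_lag` is an assumption, and `time.sleep` only stands in for a blocking call; truly CPU-bound Python code would need a process pool rather than a thread because of the GIL.

```python
import asyncio
import time

async def measure_lag(blocking: bool) -> float:
    """Return how late a 50 ms timer fires while other work runs on the loop."""
    loop = asyncio.get_running_loop()

    async def work():
        if blocking:
            time.sleep(0.3)  # synchronous call: stalls the whole event loop
        else:
            # Offloaded to the default thread pool: the loop stays responsive
            await loop.run_in_executor(None, time.sleep, 0.3)

    start = time.perf_counter()
    task = asyncio.create_task(work())
    await asyncio.sleep(0.05)  # timer that should fire after about 50 ms
    lag = time.perf_counter() - start - 0.05
    await task
    return lag

blocked = asyncio.run(measure_lag(blocking=True))
offloaded = asyncio.run(measure_lag(blocking=False))
print(f"blocked lag: {blocked:.2f}s, offloaded lag: {offloaded:.2f}s")
```

In the blocking case the timer cannot fire until the synchronous call returns, which is the behavior that worker threads and cluster mode are meant to avoid in Node.js.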

Python offers multiple concurrency options. Threading provides concurrency for I/O-bound tasks despite the GIL. Multiprocessing enables true parallelism by spawning separate processes. Asyncio provides cooperative multitasking.

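# Python asyncio for I/O concurrency
import asyncio
import aiohttp

async def fetch_url(session, url):
    # Helper assumed by fetch_all: return the response body as text
    async with session.get(url) as response:
        return await response.text()

async def fetch_all(urls):
    async with aiohttp.ClientSession() as session:
        tasks = [fetch_url(session, url) for url in urls]
        return await asyncio.gather(*tasks)

# Multiprocessing for CPU parallelism
from multiprocessing import Pool

def process_items(items):
    # expensive_computation must be a module-level function so it can be pickled
    with Pool(processes=4) as pool:
        return pool.map(expensive_computation, items)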
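The threading option mentioned above can be sketched in the same way. This example assumes a blocking I/O call, simulated with `time.sleep`, which releases the GIL while it waits. The names `fake_io_call` and `run_threaded` are illustrative only.

```python
import time
from concurrent.futures import ThreadPoolExecutor

def fake_io_call(i: int) -> int:
    """Stand-in for a blocking network call; sleeping releases the GIL."""
    time.sleep(0.2)
    return i * 2

def run_threaded(n: int = 5):
    """Run n simulated I/O calls on n threads and time the whole batch."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=n) as pool:
        results = list(pool.map(fake_io_call, range(n)))
    return results, time.perf_counter() - start

results, elapsed = run_threaded()
print(results)  # [0, 2, 4, 6, 8]
print(f"{elapsed:.2f}s")  # close to one call's duration, not five
```

The waits overlap because each thread gives up the GIL while sleeping. Replacing the sleep with pure-Python computation would remove most of that benefit.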

Memory Management Strategies

PHP releases memory after each request in traditional deployments. This prevents memory leaks from accumulating but limits caching opportunities. Long-running PHP applications (Swoole, RoadRunner) require careful memory management.

OPcache caches compiled PHP bytecode in shared memory. This eliminates compilation overhead on subsequent requests. Preloading in PHP 7.4+ keeps specified classes in memory permanently.

; php.ini OPcache configuration

opcache.memory_consumption=256

opcache.interned_strings_buffer=16

opcache.max_accelerated_files=10000

opcache.validate_timestamps=0

opcache.preload=/app/preload.php

Node.js maintains memory across requests, enabling in-process caching. V8's garbage collector manages memory automatically. Memory leaks accumulate if references aren't released properly.

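Heap size limits affect Node.js applications. The default old-space limit depends on the Node.js version and available system memory (older 64-bit releases capped it at around 1.4GB) and can be raised with --max-old-space-size. Large heaps increase garbage collection pause times.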

# Increase Node.js heap size

node --max-old-space-size=4096 app.js

Python uses reference counting with cycle detection. Objects are freed when reference counts reach zero. The garbage collector handles reference cycles.
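A small sketch can show both mechanisms at work. It relies on CPython behavior, and the `Node` class is a made-up example type. Weak references are used to observe whether an object is still alive without keeping it alive.

```python
import gc
import weakref

class Node:
    def __init__(self):
        self.other = None

# Case 1: no cycle. Dropping the last reference frees the object immediately.
a = Node()
a_ref = weakref.ref(a)
del a
print(a_ref() is None)  # True on CPython

# Case 2: a reference cycle keeps both reference counts above zero.
gc.disable()  # keep the automatic collector from running mid-demo
x, y = Node(), Node()
x.other, y.other = y, x
x_ref = weakref.ref(x)
del x, y
print(x_ref() is None)  # False: the cycle is still alive
gc.collect()            # the cycle detector finds and frees it
print(x_ref() is None)  # True
gc.enable()
```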

Memory profiling identifies leaks and excessive allocation. Libraries like memory_profiler and tracemalloc help diagnose memory issues.

import tracemalloc

tracemalloc.start()

# ... run code ...

snapshot = tracemalloc.take_snapshot()
top_stats = snapshot.statistics('lineno')

# Print the ten source lines that allocated the most memory
for stat in top_stats[:10]:
    print(stat)
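A single snapshot shows where memory is allocated, but leaks are often easier to spot by comparing two snapshots. The following sketch assumes a deliberately leaky handler; `leaky_cache` and `handle_request` are hypothetical names.

```python
import tracemalloc

leaky_cache = []  # hypothetical module-level list that is never cleared

def handle_request(i: int) -> None:
    leaky_cache.append("x" * 10_000 + str(i))  # simulated leak

tracemalloc.start()
before = tracemalloc.take_snapshot()
for i in range(1_000):
    handle_request(i)
after = tracemalloc.take_snapshot()

# Rank source lines by how much their allocated memory grew between snapshots
growth = after.compare_to(before, "lineno")
for stat in growth[:3]:
    print(stat)
tracemalloc.stop()
```

The top entry should point at the `append` line, since that is where the retained strings are created.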

Caching Approaches

PHP applications typically cache in external stores. Redis and Memcached store session data, query results, and computed values. APCu provides local in-memory caching in shared memory, so the workers of one PHP-FPM pool share it, but it is not shared between servers.

// APCu for local caching
$key = 'expensive_result';
$result = apcu_fetch($key, $success);

if (!$success) {
    $result = computeExpensiveResult();
    apcu_store($key, $result, 3600); // cache for one hour
}

Node.js applications can cache in-memory across requests. Simple objects or libraries like node-cache store frequently accessed data. Memory limits constrain cache size.

const NodeCache = require('node-cache');

const cache = new NodeCache({ stdTTL: 3600, checkperiod: 600 });

async function getUserWithCache(userId) {
  const key = `user:${userId}`;
  const cached = cache.get(key);
  // node-cache returns undefined on a miss; compare explicitly so falsy values still count as hits
  if (cached !== undefined) return cached;

  const user = await db.users.findById(userId);
  cache.set(key, user);
  return user;
}

Python frameworks provide caching integration. Django's cache framework supports multiple backends. Flask-Caching adds similar capabilities. Local caching with lru_cache decorators optimizes function calls.

from functools import lru_cache

@lru_cache(maxsize=1000)
def compute_discount(customer_tier, order_total):
    # Cached per unique argument combination
    return calculate_complex_discount(customer_tier, order_total)
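To check whether such a cache is actually paying off, `lru_cache` exposes hit and miss counters. This sketch replaces the undefined `calculate_complex_discount` with made-up discount rates and counts real invocations in a hypothetical `calls` variable.

```python
from functools import lru_cache

calls = 0  # counts how often the underlying function really runs

@lru_cache(maxsize=1000)
def compute_discount(customer_tier: str, order_total: int) -> float:
    global calls
    calls += 1
    rate = {"gold": 0.15, "silver": 0.10}.get(customer_tier, 0.0)  # made-up rates
    return order_total * rate

compute_discount("gold", 200)
compute_discount("gold", 200)    # served from the cache
compute_discount("silver", 200)

info = compute_discount.cache_info()
print(info.hits, info.misses, calls)  # 1 2 2
compute_discount.cache_clear()        # call when the pricing rules change
```

Arguments must be hashable, and the cache lives inside one process, so each worker process keeps its own copy.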

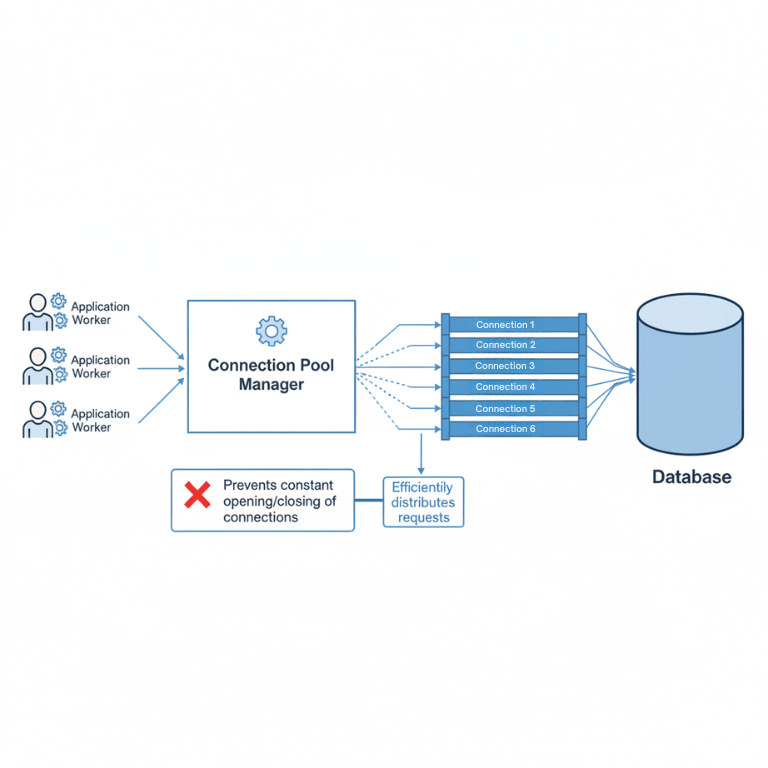

Database Optimization by Language

PHP database access commonly uses PDO or ORMs like Eloquent and Doctrine. PHP-FPM does not pool connections itself: persistent PDO connections let each worker reuse its own connection, and shared pooling is usually handled by an external pooler like PgBouncer.

Prepared statements prevent SQL injection and can reduce parsing overhead for queries that run repeatedly. Query builders generate parameterized SQL while keeping code readable.
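The injection point is easy to demonstrate with an in-memory SQLite database. This is a sketch using Python's built-in `sqlite3` module as a stand-in for a production database; the table and the malicious input are invented, and the `?` placeholder style is specific to this driver.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

malicious = "alice' OR '1'='1"

# Unsafe: string formatting lets the input rewrite the query
unsafe_sql = f"SELECT name FROM users WHERE name = '{malicious}'"
unsafe_rows = conn.execute(unsafe_sql).fetchall()

# Safe: the ? placeholder sends the value separately from the SQL text
safe_rows = conn.execute(
    "SELECT name FROM users WHERE name = ?", (malicious,)
).fetchall()

print(len(unsafe_rows))  # 2: the injected OR clause matched every row
print(len(safe_rows))    # 0: no user has that literal name
conn.close()
```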

// Eloquent eager loading prevents N+1 queries
$orders = Order::with(['customer', 'items.product'])
    ->where('status', 'pending')
    ->get();
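The N+1 problem itself is independent of the ORM. As a rough illustration, the sketch below counts the queries issued by a lazy loop versus a single JOIN, using an in-memory SQLite database. The schema, the sample rows, and the `run` helper are assumptions made for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, status TEXT);
    INSERT INTO customers (id, name) VALUES (1, 'Ada'), (2, 'Linus'), (3, 'Grace');
    INSERT INTO orders (customer_id, status) VALUES
        (1, 'pending'), (2, 'pending'), (3, 'pending');
""")

query_count = 0

def run(sql, params=()):
    """Execute a query and count it, so the two patterns can be compared."""
    global query_count
    query_count += 1
    return conn.execute(sql, params).fetchall()

# N+1 pattern: one query for the orders, then one more per order
orders = run("SELECT id, customer_id FROM orders WHERE status = ?", ("pending",))
for _, customer_id in orders:
    run("SELECT name FROM customers WHERE id = ?", (customer_id,))
n_plus_one = query_count

# Eager pattern: a single JOIN fetches orders together with their customers
query_count = 0
rows = run(
    "SELECT o.id, c.name FROM orders o "
    "JOIN customers c ON c.id = o.customer_id WHERE o.status = ?",
    ("pending",),
)
eager = query_count

print(n_plus_one, eager)  # 4 1
conn.close()
```

With three pending orders the lazy loop needs four round trips, and that number grows with the result set, while the eager version stays at one.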

Node.js database drivers support connection pooling natively. Libraries like pg-pool and mysql2 manage pools efficiently. ORMs like Prisma and Sequelize abstract database operations.

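// Connection pool configuration (node-postgres)
const { Pool } = require('pg');

const pool = new Pool({
  max: 20,                       // maximum clients in the pool
  idleTimeoutMillis: 30000,      // close clients idle for 30 seconds
  connectionTimeoutMillis: 2000, // fail if no connection within 2 seconds
});

// Prisma query optimization
const orders = await prisma.order.findMany({
  where: { status: 'pending' },
  include: {
    customer: true,
    items: { include: { product: true } }
  }
});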

Python database access through SQLAlchemy, Django ORM, or raw drivers requires similar pooling consideration. SQLAlchemy's connection pool handles this transparently.

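from sqlalchemy import create_engine

engine = create_engine(
    DATABASE_URL,
    pool_size=20,       # connections kept open in the pool
    max_overflow=10,    # extra connections allowed under load
    pool_timeout=30,    # seconds to wait for a free connection
    pool_recycle=1800,  # replace connections older than 30 minutes
)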
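Each process gets its own pool, so the worst-case connection count multiplies with the number of worker processes. The helper below sketches that arithmetic; the function name and the figure of four worker processes are hypothetical.

```python
def total_db_connections(processes: int, pool_size: int, max_overflow: int) -> int:
    """Worst-case connections one deployment can open (one pool per process)."""
    return processes * (pool_size + max_overflow)

# The settings above (pool_size=20, max_overflow=10) with 4 worker processes:
print(total_db_connections(4, pool_size=20, max_overflow=10))  # 120
```

That total already exceeds PostgreSQL's default max_connections of 100, which is one reason to lower per-process pool sizes or place a pooler such as PgBouncer in front of the database.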

Profiling and Debugging Tools

PHP profiling tools include Xdebug, Blackfire, and Tideways. Xdebug provides detailed execution traces. Blackfire offers production-safe profiling with visualization.

; Xdebug profiling configuration

xdebug.mode=profile

xdebug.output_dir=/tmp/profiles

xdebug.profiler_output_name=cachegrind.out.%p

Node.js includes built-in profiling capabilities. The --inspect flag enables Chrome DevTools debugging. The --prof flag generates V8 profiler output.

# Generate V8 profile

node --prof app.js

node --prof-process isolate-*.log > processed.txt

Clinic.js provides Node.js-specific diagnostics. Clinic Doctor identifies common issues. Clinic Flame generates flame graphs. Clinic Bubbleprof visualizes async operations.

Python profiling uses cProfile, py-spy, or Pyroscope. cProfile provides comprehensive profiling data. py-spy samples running processes without instrumentation.

import cProfile

import pstats

profiler = cProfile.Profile()

profiler.enable()

# ... code to profile ...

profiler.disable()

stats = pstats.Stats(profiler).sort_stats('cumulative')

stats.print_stats(20)

Choosing the Right Stack

PHP excels at traditional web applications with request-response patterns. The vast ecosystem includes mature frameworks like Laravel and Symfony. Hosting is widely available and inexpensive.

PHP 8.x performance rivals other languages for typical web workloads. JIT compilation improves CPU-intensive operations. The language continues evolving with modern features.

Node.js suits I/O-heavy applications and real-time features. WebSocket handling and streaming feel natural. JavaScript skills transfer between frontend and backend.

Microservice architectures benefit from Node.js's lightweight startup. Container deployments start quickly. However, CPU-intensive tasks require careful architecture.

Python excels at data processing, machine learning integration, and scientific computing. Libraries like NumPy, Pandas, and scikit-learn have few equally mature counterparts in PHP or Node.js.

Web frameworks like Django and FastAPI provide solid foundations. Django's batteries-included approach accelerates development. With FastAPI, async I/O performance comes close to Node.js.

| Aspect | PHP | Node.js | Python |
|---|---|---|---|
| Concurrency | Process-based | Event loop | GIL + async |
| Memory model | Per-request | Persistent | Persistent |
| CPU-intensive | JIT helps | Use workers | Multiprocessing |
| Real-time | Limited | Excellent | Good with async |
| Data science | Limited | Limited | Excellent |

Conclusion

No single language wins across all optimization dimensions—each has architectural trade-offs that make it ideal for specific workloads.

PHP's process isolation and OPcache deliver predictable performance for traditional request-response apps. Node.js's event loop and non-blocking I/O excel in real-time and I/O-bound scenarios but require offloading CPU work.

Python's asyncio and multiprocessing provide flexibility for both I/O and CPU tasks, with unmatched data science integration.

The key is matching your application's dominant workload pattern to the language's inherent strengths, then applying the specific optimization techniques detailed above.

With modern versions—PHP 8.x JIT, Node.js worker threads, Python asyncio—all three stacks can deliver excellent performance when optimized appropriately.

FAQs

1. Which language has the best performance for API servers?

Node.js typically leads for I/O-heavy APIs due to its non-blocking event loop handling thousands of concurrent connections with low overhead.

Python (with FastAPI/ASGI) approaches Node.js performance for async I/O but has higher baseline latency.

PHP (with Laravel/Symfony) performs well for standard CRUD APIs but requires more workers for high concurrency.

Modern PHP 8.x and Python 3.12+ have narrowed the gap significantly.

2. How do I handle CPU-intensive tasks in each language?

- PHP: Use JIT compilation (PHP 8.0+) or offload to queues (Laravel Horizon) with dedicated workers.

- Node.js: Offload to worker threads (which run in parallel with the main thread and can use other CPU cores) or spin up microservices in other languages for heavy computation.

- Python: Use multiprocessing (separate processes bypassing GIL) or leverage C-extensions like NumPy that release the GIL during computation.

3. What's the most common performance mistake in each stack?

- PHP: Not enabling OPcache, causing repeated compilation overhead on every request.

- Node.js: Blocking the event loop with synchronous CPU-heavy code (e.g., JSON parsing of huge objects) without using worker threads.

- Python: Using threads for CPU-bound work (GIL limits parallelism) instead of multiprocessing, or not using connection pooling with databases.
