Building Applications for Kubernetes

Learn how to build Kubernetes native applications using operators, CRDs, service meshes, and cloud-native workflows for scalable, resilient systems.

Introduction

Kubernetes-native development builds applications specifically for Kubernetes, embedding container orchestration into development workflows from the start. Rather than porting existing applications to Kubernetes, this approach architects cloud-native systems around Kubernetes operators, custom resources, and other platform capabilities. Teams that adopt Kubernetes-native patterns can iterate faster and run more resilient production systems.

Understanding Kubernetes-Native Concepts

Kubernetes-native applications embrace the platform's declarative API model, controller pattern, and distributed architecture. Unlike applications that are simply containerized and deployed to Kubernetes, truly native applications define CustomResourceDefinitions, implement operators that manage their lifecycle, and rely on platform features for resilience.

The controller pattern forms the foundation. Controllers watch resources, compare desired state against actual state, and act to reconcile the differences. Because this loop runs continuously, the system heals itself and operations can be automated. Applications can implement custom controllers that extend Kubernetes to manage domain-specific resources.
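A minimal, framework-free sketch of one reconcile pass, assuming a toy in-memory "cluster" instead of the Kubernetes API. `ClusterState` and `reconcile` are hypothetical names. Real controllers receive watch events and re-run reconciliation, but the compare-and-converge shape is the same.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ClusterState:
    """Hypothetical in-memory stand-in for cluster state: replica name -> healthy?"""
    replicas: dict[str, bool] = field(default_factory=dict)


def reconcile(desired_count: int, prefix: str, state: ClusterState) -> list[str]:
    """Run one reconcile pass and return the actions it took.

    Idempotent: on an already converged state it returns an empty list,
    which is what lets a controller call it repeatedly and safely.
    """
    actions: list[str] = []

    # 1. Remove surplus replicas (reverse lexicographic name order).
    surplus = max(0, len(state.replicas) - desired_count)
    for name in sorted(state.replicas, reverse=True)[:surplus]:
        del state.replicas[name]
        actions.append(f"delete {name}")

    # 2. Self-heal: replace replicas that exist but are unhealthy.
    for name, healthy in list(state.replicas.items()):
        if not healthy:
            state.replicas[name] = True
            actions.append(f"replace {name}")

    # 3. Create missing replicas, filling the lowest free index first.
    index = 0
    while len(state.replicas) < desired_count:
        name = f"{prefix}-{index}"
        if name not in state.replicas:
            state.replicas[name] = True
            actions.append(f"create {name}")
        index += 1

    return actions
```

Calling `reconcile` a second time on the same state returns no actions, which is the property that makes a continuously running loop safe.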

CustomResourceDefinitions (CRDs) extend the Kubernetes API with new resource types that represent application concepts. A CRD might define a Database resource type, enabling declarative database management. Combined with custom controllers, CRDs provide abstraction layers that hide infrastructure complexity.
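The Database example can be sketched as a CRD manifest built in Python. The group `example.com`, the `v1alpha1` version, and the `engine`/`version`/`replicas` fields are illustrative assumptions. The overall structure follows `apiextensions.k8s.io/v1`, which requires a schema for every served version.

```python
import json


def database_crd(group: str = "example.com") -> dict:
    """CustomResourceDefinition for a hypothetical Database kind."""
    plural = "databases"
    return {
        "apiVersion": "apiextensions.k8s.io/v1",
        "kind": "CustomResourceDefinition",
        # A CRD's name must be <plural>.<group>.
        "metadata": {"name": f"{plural}.{group}"},
        "spec": {
            "group": group,
            "scope": "Namespaced",
            "names": {
                "kind": "Database",
                "listKind": "DatabaseList",
                "plural": plural,
                "singular": "database",
                "shortNames": ["db"],
            },
            "versions": [
                {
                    "name": "v1alpha1",
                    "served": True,
                    "storage": True,  # exactly one version is the storage version
                    "schema": {
                        "openAPIV3Schema": {
                            "type": "object",
                            "properties": {
                                "spec": {
                                    "type": "object",
                                    "required": ["engine"],
                                    "properties": {
                                        "engine": {"type": "string", "enum": ["postgres", "mysql"]},
                                        "version": {"type": "string"},
                                        "replicas": {"type": "integer", "minimum": 1},
                                    },
                                }
                            },
                        }
                    },
                }
            ],
        },
    }


if __name__ == "__main__":
    # kubectl accepts JSON as well as YAML, e.g. `python crd.py | kubectl apply -f -`.
    print(json.dumps(database_crd(), indent=2))
```

Once the CRD is installed, users create objects with `apiVersion: example.com/v1alpha1` and `kind: Database`, and the API server validates their `spec` against the schema.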

Immutable infrastructure treats pods as ephemeral units that are replaced rather than updated in place. Applications must therefore externalize state, handle termination gracefully, and start quickly. This stateless design enables rolling updates, horizontal scaling, and rapid recovery from failures.
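Graceful termination mostly means reacting to SIGTERM. Kubernetes sends it when a pod is being stopped and waits for the pod's `terminationGracePeriodSeconds` (30 seconds by default) before sending SIGKILL. A minimal sketch follows. `GracefulShutdown` and `serve` are hypothetical names, and the loop body is a placeholder for real work.

```python
import signal
import threading
import time
from typing import Optional


class GracefulShutdown:
    """Hypothetical helper that turns SIGTERM/SIGINT into a flag the work loop polls."""

    def __init__(self) -> None:
        self.stop = threading.Event()

    def handle(self, signum, frame) -> None:
        # Keep the handler tiny: only record that shutdown was requested.
        self.stop.set()

    def install(self) -> None:
        # Must be called from the main thread.
        signal.signal(signal.SIGTERM, self.handle)
        signal.signal(signal.SIGINT, self.handle)


def serve(shutdown: GracefulShutdown, max_iterations: Optional[int] = None) -> int:
    """Hypothetical work loop: process units of work until shutdown is requested."""
    processed = 0
    while not shutdown.stop.is_set():
        processed += 1  # placeholder for handling one request or job
        if max_iterations is not None and processed >= max_iterations:
            break
        time.sleep(0.01)
    # Drain here: finish in-flight work, close connections, flush buffers.
    return processed
```

In a container entrypoint you would call `shutdown = GracefulShutdown()`, `shutdown.install()`, then `serve(shutdown)`. The handler only sets an event, so cleanup runs in normal code rather than inside the signal handler.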

Developing Kubernetes-native applications requires a shift in mindset: from long-lived servers to ephemeral containers, from imperative operations to declarative configuration, from manual intervention to automated controllers, and from avoiding failures to treating failure as normal. Architecture decisions should also align with Kubernetes cost optimization and resource efficiency best practices.

Kubernetes Operators

Kubernetes operators encode operational knowledge into software that manages complex applications. An operator combines CRDs that define application resources with controllers that implement operational logic. Operators automate deployment, scaling, backup, upgrade, and failure recovery tasks that previously required human expertise.

The Operator Framework's capability model describes five maturity levels. Basic Install operators deploy applications. Seamless Upgrades operators handle version upgrades automatically. Full Lifecycle operators add backups and failure recovery. Deep Insights operators expose metrics and support tuning. Auto Pilot operators scale, tune, and heal the application without human intervention.

The Operator Framework provides tools for building and distributing operators. Operator SDK scaffolds project boilerplate and provides tooling for testing and packaging operators. OperatorHub catalogs hundreds of prebuilt operators for databases, message queues, monitoring tools, and infrastructure components.

Writing custom operators enables teams to codify operational procedures specific to their applications. An operator might automatically scale based on business metrics, execute complex upgrade sequences, or implement disaster recovery procedures. This transforms institutional knowledge from documentation into executable code that consistently applies best practices.
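As an illustration of scaling on a business metric, here is a sketch of the decision a custom operator might compute from queue depth. The function name, the queue-depth metric, and the default bounds are hypothetical. The ceiling-of-a-ratio idea mirrors the HorizontalPodAutoscaler's documented formula, `desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue)`.

```python
import math


def desired_replicas(queue_depth: int, target_per_replica: int,
                     min_replicas: int = 1, max_replicas: int = 20) -> int:
    """Hypothetical scaling rule: enough replicas that each handles at most
    target_per_replica queued items, clamped to [min_replicas, max_replicas]."""
    if target_per_replica <= 0:
        raise ValueError("target_per_replica must be positive")
    needed = math.ceil(queue_depth / target_per_replica)
    # Clamp so an empty queue keeps a floor and a spike cannot exhaust the cluster.
    return max(min_replicas, min(max_replicas, needed))
```

The operator would read the queue depth from the message broker on each reconcile and patch the workload's replica count only when the result differs. That check keeps the loop idempotent.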

Operators are particularly valuable for stateful applications. Database operators handle replication, backup schedules, failover procedures, and version upgrades, turning these complex operations into declarative resource updates instead of manual procedures.
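To make "declarative resource update" concrete: a user edits one field, such as a Database resource's `spec.version`, and the operator expands that change into ordered steps. The sketch below shows one plausible rolling-upgrade plan. The function and step strings are hypothetical, and real database operators differ in their details.

```python
from __future__ import annotations


def plan_rolling_upgrade(primary: str, replicas: list[str], target_version: str) -> list[str]:
    """Hypothetical upgrade plan a database operator might derive from a spec.version change.

    Replicas are upgraded first so the primary keeps serving writes; the old
    primary is upgraded last, after switching over to an upgraded replica.
    """
    if not replicas:
        raise ValueError("a rolling upgrade needs at least one replica")
    steps = ["take backup"]
    for replica in replicas:
        steps.append(f"upgrade {replica} to {target_version}")
        steps.append(f"wait for {replica} to catch up")
    new_primary = replicas[0]
    steps.append(f"switchover primary {primary} -> {new_primary}")
    steps.append(f"upgrade {primary} to {target_version}")
    steps.append(f"wait for {primary} to catch up")
    return steps
```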

Modern Development Workflows for Kubernetes

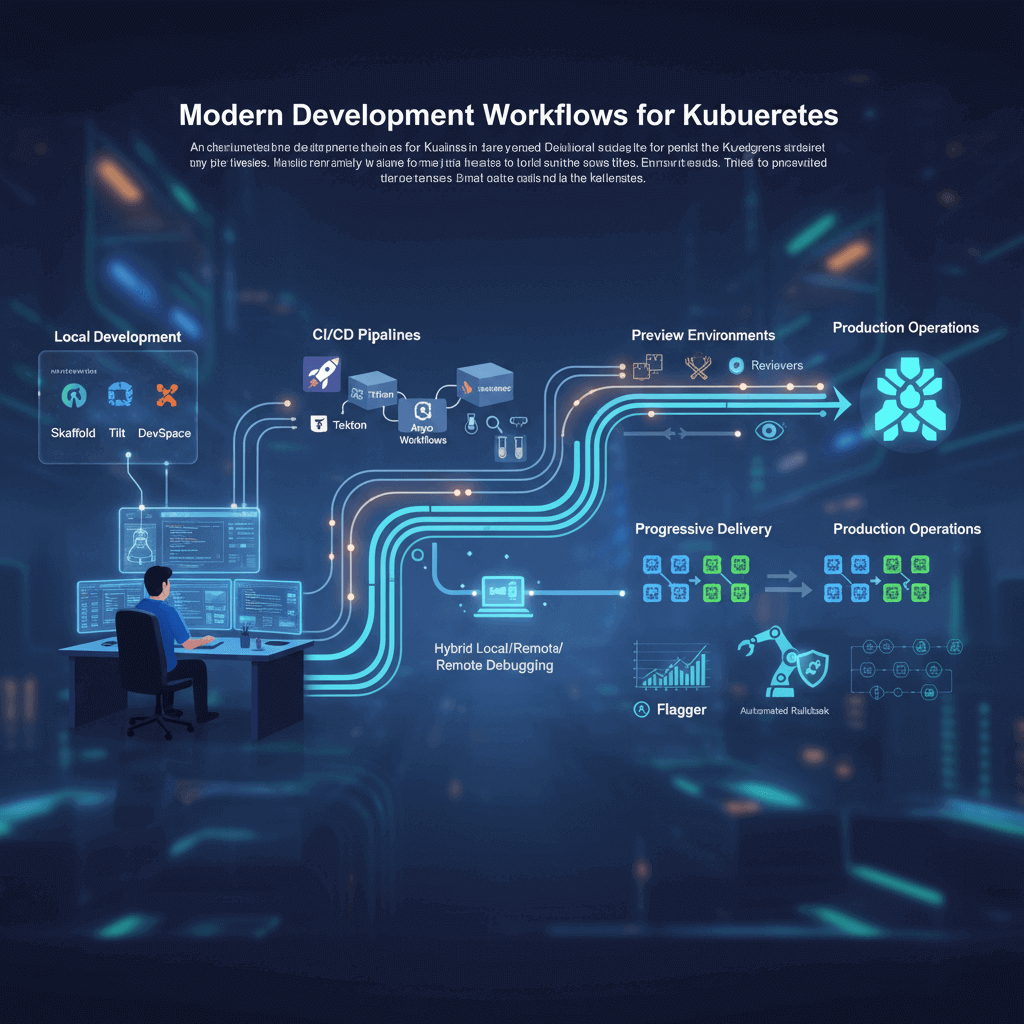

Cloud native development workflows integrate Kubernetes into every development phase, from local development through CI/CD to production operations. Modern workflows emphasize fast feedback loops, environment parity, and automated quality gates.

Local development tools such as Skaffold, Tilt, and DevSpace automatically rebuild, redeploy, or sync local code changes into a Kubernetes cluster. Developers edit code and see the changes reflected in running containers without manual rebuild-and-deploy cycles. Iteration speed approaches that of traditional local development while the code still runs in a real Kubernetes environment.

Telepresence enables hybrid local/remote development. Services run locally with full debugger support while connecting to remote Kubernetes clusters. This provides production-like environments for services under development while maintaining local development ergonomics.

CI/CD pipelines tailored for Kubernetes automate building container images, scanning them for vulnerabilities, running tests in Kubernetes environments, and deploying through progressive delivery. Tools like Tekton, Argo Workflows, and Jenkins X run pipelines natively on Kubernetes.

Progressive delivery patterns, including canary releases and blue-green deployments, reduce deployment risk. Flagger automates progressive delivery by gradually shifting traffic to a new version while monitoring metrics, and it rolls back automatically when those metrics breach their thresholds. Deployments become routine operations rather than risky events.
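The canary loop can be simulated in a few lines. This is a simplified model that takes its knobs from settings Flagger's Canary analysis exposes (`stepWeight`, `maxWeight`, `threshold`, and a minimum request success rate). It is not Flagger's actual code, and the default values are illustrative.

```python
from __future__ import annotations


def run_canary(success_rates: list[float], step_weight: int = 10, max_weight: int = 50,
               threshold: int = 2, min_success_rate: float = 99.0) -> tuple[str, list[int]]:
    """Simplified canary analysis, loosely modeled on Flagger's settings.

    Each entry of success_rates is the canary's request success rate (%) in one
    analysis interval. Returns the outcome and the traffic weights applied.
    """
    weight = 0
    failed_checks = 0
    weights: list[int] = []
    for rate in success_rates:
        if rate < min_success_rate:
            failed_checks += 1
            if failed_checks >= threshold:
                return "rolled back", weights  # route all traffic back to primary
            continue  # hold the current weight and re-check next interval
        if weight >= max_weight:
            return "promoted", weights  # canary becomes the new primary
        weight = min(weight + step_weight, max_weight)
        weights.append(weight)
    return "in progress", weights
```

With healthy metrics the weight climbs 10, 20, 30, 40, 50 and the next passing check promotes the release. Two failing checks in a row trigger a rollback before more traffic is exposed.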

Preview environments automatically create isolated Kubernetes environments for each pull request. Developers and reviewers test changes in environments matching production before merging. This enables earlier feedback and catches integration issues before they reach shared environments.

Advanced Networking with Service Meshes

Service meshes provide sophisticated networking capabilities for microservices without requiring application code changes. In the common sidecar model, the mesh attaches a proxy to each pod; the proxy intercepts network traffic and implements traffic management, security, and observability features.

Istio provides a broad set of service mesh capabilities (see the official Istio documentation). Traffic management features include configurable load balancing, circuit breaking, retries, timeouts, and fault injection for chaos engineering. Security features enable mutual TLS between workloads, enforce fine-grained access control policies, and rotate certificates automatically. Observability features collect metrics, emit distributed trace spans from the proxies, and support service graphs.
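Retries, timeouts, and circuit breaking are configured with Istio's VirtualService and DestinationRule resources. The sketch below builds both for a hypothetical `reviews` service. The numeric values are illustrative assumptions, not recommendations. It uses `networking.istio.io/v1beta1`, which is widely supported; recent Istio releases also serve `v1`.

```python
import json


def reviews_traffic_policy(host: str = "reviews") -> list[dict]:
    """Retry, timeout, and circuit-breaking config for a hypothetical service."""
    virtual_service = {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": host},
        "spec": {
            "hosts": [host],
            "http": [
                {
                    "route": [{"destination": {"host": host}}],
                    "timeout": "10s",  # overall deadline, including retries
                    "retries": {
                        "attempts": 3,
                        "perTryTimeout": "2s",
                        "retryOn": "5xx,connect-failure,reset",
                    },
                }
            ],
        },
    }
    destination_rule = {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "DestinationRule",
        "metadata": {"name": host},
        "spec": {
            "host": host,
            "trafficPolicy": {
                # Cap concurrency so a slow backend cannot exhaust its callers.
                "connectionPool": {
                    "tcp": {"maxConnections": 100},
                    "http": {"http1MaxPendingRequests": 50},
                },
                # Circuit breaking: temporarily eject endpoints that keep failing.
                "outlierDetection": {
                    "consecutive5xxErrors": 5,
                    "interval": "30s",
                    "baseEjectionTime": "60s",
                    "maxEjectionPercent": 50,
                },
            },
        },
    }
    return [virtual_service, destination_rule]


if __name__ == "__main__":
    # kubectl apply accepts a v1 List wrapping several objects.
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": reviews_traffic_policy()}, indent=2))
```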

Linkerd emphasizes simplicity and performance, using a lightweight Rust-based proxy instead of Istio's Envoy. It provides core mesh functionality with minimal configuration and lower resource overhead, which appeals to teams that prioritize operational simplicity and performance over Istio's extensive feature set.

Service mesh adoption often follows a maturity curve. Teams typically start with basic service-to-service traffic management, then add mutual TLS for security, introduce weighted routing for canary deployments, and finally adopt advanced features like circuit breaking and fault injection.

The service mesh control plane configures data plane proxies, provides management APIs, and collects telemetry. The data plane comprises lightweight proxies handling actual traffic. This separation enables mesh-wide policy enforcement and observability without application awareness.

Observability for Kubernetes Applications

Kubernetes-native applications require observability strategies designed for dynamic, distributed environments. Traditional monitoring that assumes static hosts breaks down when pods are constantly created, destroyed, and rescheduled.

Prometheus integrates natively with Kubernetes, using its service discovery to find and scrape metrics endpoints automatically. The Prometheus Operator simplifies deployment and configuration by managing Prometheus instances, alerting rules, and scrape targets through custom resources such as Prometheus, PrometheusRule, and ServiceMonitor.
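With the Prometheus Operator, a ServiceMonitor declares what to scrape. Below is a minimal sketch for a hypothetical app whose Service exposes a port named `metrics`. The `release: prometheus` label is an assumption. It must match whatever `serviceMonitorSelector` your Prometheus resource uses.

```python
import json


def service_monitor(app: str, namespace: str, port_name: str = "metrics") -> dict:
    """ServiceMonitor for a hypothetical app, scraped by an Operator-managed Prometheus."""
    return {
        "apiVersion": "monitoring.coreos.com/v1",
        "kind": "ServiceMonitor",
        "metadata": {
            "name": app,
            "namespace": namespace,
            # Assumption: the Prometheus resource selects ServiceMonitors by this label.
            "labels": {"release": "prometheus"},
        },
        "spec": {
            # Selects Services (not pods) carrying this label.
            "selector": {"matchLabels": {"app.kubernetes.io/name": app}},
            "namespaceSelector": {"matchNames": [namespace]},
            # "port" is the *name* of a port on the selected Service.
            "endpoints": [{"port": port_name, "path": "/metrics", "interval": "30s"}],
        },
    }


if __name__ == "__main__":
    print(json.dumps(service_monitor("checkout", "shop"), indent=2))
```

Because the target is selected by label rather than by address, new pods behind the Service are scraped automatically as they come and go.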

Distributed tracing reveals request paths through microservices deployed across Kubernetes clusters. OpenTelemetry provides standardized instrumentation, while Jaeger and Tempo provide backend storage and visualization. A service mesh can generate spans at each proxy without code changes, but applications must still forward trace context headers from incoming to outgoing requests so that those spans join into a single trace.
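Header propagation is small but easy to forget. The sketch below assumes the W3C Trace Context `traceparent` header (`00-<32 hex trace-id>-<16 hex parent-id>-<2 hex flags>`). Which headers a mesh expects depends on its tracing configuration, and in practice OpenTelemetry's propagators handle this. `child_traceparent` is a hypothetical helper.

```python
import re
import secrets
from typing import Optional

TRACEPARENT = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def child_traceparent(incoming: Optional[str]) -> str:
    """Hypothetical helper: build the traceparent header for an outgoing call.

    Keeps the incoming trace ID so downstream spans join the same trace, and
    generates a fresh span ID for this hop. Starts a new sampled trace if the
    incoming header is missing or malformed.
    """
    match = TRACEPARENT.match(incoming or "")
    if match and match.group(1) != "0" * 32 and match.group(2) != "0" * 16:
        trace_id, flags = match.group(1), match.group(3)
    else:
        trace_id, flags = secrets.token_hex(16), "01"
    span_id = secrets.token_hex(8)  # 16 hex characters
    return f"00-{trace_id}-{span_id}-{flags}"
```

A handler would read `traceparent` from the incoming request and attach `child_traceparent(...)` to every request it makes downstream.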

Logging strategies must ship logs off ephemeral containers, because a container's logs on the node are deleted along with its pod. Fluentd or Fluent Bit, deployed as a DaemonSet, collects container logs from every node and enriches them with Kubernetes metadata such as namespace, pod name, and labels. This context enables efficient filtering and correlation.
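On the application side, the main job is to write structured lines to stdout and leave collection and Kubernetes metadata to the DaemonSet. A minimal stdlib sketch follows. `JsonFormatter`, `configure_logging`, and the chosen field names are illustrative assumptions.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object per line for log collectors to parse."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "time": self.formatTime(record, "%Y-%m-%dT%H:%M:%S"),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        if record.exc_info:
            entry["exception"] = self.formatException(record.exc_info)
        return json.dumps(entry)


def configure_logging(level: int = logging.INFO) -> None:
    # Log to stdout: the container runtime writes it to a node file the DaemonSet tails.
    handler = logging.StreamHandler(sys.stdout)
    handler.setFormatter(JsonFormatter())
    root = logging.getLogger()
    root.handlers[:] = [handler]
    root.setLevel(level)
```

One JSON object per line keeps multi-line messages and stack traces intact, so the collector does not need fragile multi-line parsing rules.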

Kubernetes-native observability uses resource labels and annotations as organizing principles. Consistent labeling enables aggregating metrics across services and filtering logs by deployment version; consistent span attributes likewise allow grouping traces by customer or feature flag. Establishing labeling conventions early is foundational to effective observability.
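A convenient starting convention is Kubernetes' recommended `app.kubernetes.io/*` label set. The helper below builds it. The equality check mimics `matchLabels` semantics. Both function names and the example values are hypothetical.

```python
from __future__ import annotations


def recommended_labels(name: str, instance: str, version: str,
                       component: str, part_of: str, managed_by: str) -> dict[str, str]:
    """Kubernetes' recommended common labels for a workload (hypothetical helper)."""
    return {
        "app.kubernetes.io/name": name,
        "app.kubernetes.io/instance": instance,
        "app.kubernetes.io/version": version,
        "app.kubernetes.io/component": component,
        "app.kubernetes.io/part-of": part_of,
        "app.kubernetes.io/managed-by": managed_by,
    }


def matches(selector: dict[str, str], labels: dict[str, str]) -> bool:
    """matchLabels semantics: every key/value pair in the selector must be present."""
    return all(labels.get(key) == value for key, value in selector.items())
```

Keep `app.kubernetes.io/version` out of Deployment selectors. A Deployment's selector is immutable, while the version label changes with every release. The version label is still useful for filtering metrics and logs.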

Kubernetes Security Best Practices

Kubernetes-native security implements defense in depth across multiple layers. Pod Security Standards, enforced by the built-in Pod Security Admission controller, set baseline security requirements for pods; network policies restrict communication; and RBAC controls access to the Kubernetes API.

Pod Security Standards define three policy levels. Privileged is unrestricted, Baseline prevents known privilege escalations, and Restricted enforces current pod hardening best practices. Each namespace is assigned a level through labels, chosen according to the sensitivity of its workloads.
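The namespace labels that Pod Security Admission reads can be sketched as follows, together with a container `securityContext` that satisfies the Restricted level. The namespace name is hypothetical. Setting `warn` and `audit` alongside `enforce` is one common choice, not a requirement.

```python
import json


def restricted_namespace(name: str) -> dict:
    """Namespace labelled for Pod Security Admission at the Restricted level."""
    return {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {
            "name": name,
            "labels": {
                "pod-security.kubernetes.io/enforce": "restricted",
                "pod-security.kubernetes.io/enforce-version": "latest",
                # warn/audit report violations without rejecting pods.
                "pod-security.kubernetes.io/warn": "restricted",
                "pod-security.kubernetes.io/audit": "restricted",
            },
        },
    }


# Container-level settings the Restricted profile expects.
RESTRICTED_SECURITY_CONTEXT = {
    "runAsNonRoot": True,
    "allowPrivilegeEscalation": False,
    "capabilities": {"drop": ["ALL"]},
    "seccompProfile": {"type": "RuntimeDefault"},
}


if __name__ == "__main__":
    print(json.dumps(restricted_namespace("payments"), indent=2))
```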

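Network policies implement microsegmentation at the pod level. By default, Kubernetes allows all pod-to-pod communication. Once a network policy selects a pod, that pod accepts only the traffic that some policy explicitly allows, and everything else is denied. Combined with a default-deny policy, this zero-trust approach limits the blast radius of a compromised workload. Policies take effect only if the cluster's network plugin enforces them.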
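A typical pattern combines a namespace-wide default deny with narrow allow rules. The sketch below builds both policies. The namespace, the `app` labels, and the port are hypothetical.

```python
import json


def default_deny_ingress(namespace: str) -> dict:
    """Select every pod in the namespace and allow no ingress."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-ingress", "namespace": namespace},
        # Empty podSelector = all pods; Ingress listed with no rules = deny all ingress.
        "spec": {"podSelector": {}, "policyTypes": ["Ingress"]},
    }


def allow_from(namespace: str, target_app: str, source_app: str, port: int) -> dict:
    """Allow TCP traffic to target_app pods only from source_app pods on one port."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": f"allow-{source_app}-to-{target_app}", "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": {"app": target_app}},
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    "from": [{"podSelector": {"matchLabels": {"app": source_app}}}],
                    "ports": [{"protocol": "TCP", "port": port}],
                }
            ],
        },
    }


if __name__ == "__main__":
    items = [default_deny_ingress("shop"), allow_from("shop", "api", "frontend", 8080)]
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```

Policies are additive. The allow rule opens exactly one path on top of the default deny, and no policy can subtract from what another allows.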

RBAC controls who can perform which operations on Kubernetes resources. Roles define permissions within a namespace, and RoleBindings grant those roles to users or service accounts; ClusterRoles and ClusterRoleBindings do the same cluster-wide. Following least-privilege principles, workloads receive only the permissions necessary for their function.
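A least-privilege grant can be sketched as a read-only Role bound to a single service account. The role name, namespace, and service account below are hypothetical. `""` denotes the core API group, where ConfigMaps live.

```python
def configmap_reader_role(namespace: str) -> dict:
    """Role allowing read-only access to ConfigMaps in one namespace."""
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "Role",
        "metadata": {"name": "configmap-reader", "namespace": namespace},
        "rules": [
            {"apiGroups": [""], "resources": ["configmaps"], "verbs": ["get", "list", "watch"]}
        ],
    }


def bind_role_to_service_account(namespace: str, role: str, service_account: str) -> dict:
    """RoleBinding granting `role` to one service account in the same namespace."""
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "RoleBinding",
        "metadata": {"name": f"{service_account}-{role}", "namespace": namespace},
        "subjects": [{"kind": "ServiceAccount", "name": service_account, "namespace": namespace}],
        "roleRef": {"apiGroup": "rbac.authorization.k8s.io", "kind": "Role", "name": role},
    }
```

You can check the result with `kubectl auth can-i list configmaps --as=system:serviceaccount:<namespace>:<name>`.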

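Service accounts provide identities for pods that access the Kubernetes API. Applications should use dedicated service accounts with narrowly scoped RBAC permissions rather than the namespace's default service account. Every pod uses the default account unless configured otherwise, so any permission granted to it extends to all of those workloads.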
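A dedicated service account can also keep API tokens out of pods that never call the API. The sketch below builds a ServiceAccount that disables token automounting and a pod spec that opts back in only when needed. The pod-level `automountServiceAccountToken` setting takes precedence over the service account's. The names and image are hypothetical.

```python
def service_account(name: str, namespace: str) -> dict:
    """Dedicated ServiceAccount that does not mount API tokens by default."""
    return {
        "apiVersion": "v1",
        "kind": "ServiceAccount",
        "metadata": {"name": name, "namespace": namespace},
        "automountServiceAccountToken": False,
    }


def pod_spec(service_account_name: str, image: str, needs_api: bool) -> dict:
    """Pod spec using the dedicated account; mounts a token only if the app calls the API."""
    return {
        "serviceAccountName": service_account_name,
        # Pod-level setting overrides the ServiceAccount's default.
        "automountServiceAccountToken": needs_api,
        "containers": [{"name": "app", "image": image}],
    }
```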

Runtime security tools like Falco detect anomalous behavior in running containers, such as suspicious processes, unexpected network connections, and file system modifications. Because they watch behavior rather than known vulnerabilities, they can help surface zero-day exploits and insider threats that other controls miss.

Conclusion

Kubernetes-native development represents a paradigm shift from traditional application development. By building applications specifically for Kubernetes, teams can gain substantial operational efficiency: operators automate complex workflows, service meshes provide infrastructure capabilities transparently, and cloud-native patterns enable resilient distributed systems.

Success requires more than technical mastery. Cultural transformation toward declarative thinking, automated operations, and continuous delivery is equally important. Development teams must embrace Kubernetes concepts like controllers, CRDs, and reconciliation loops as first-class architectural patterns.

The ecosystem continues to mature rapidly. Operator development is standardizing on frameworks like Operator SDK and Kubebuilder, service meshes are consolidating around Istio and Linkerd, and tools like Skaffold and Tilt are smoothing the local development experience.

Organizations that reach Kubernetes-native maturity gain competitive advantages through faster feature delivery, improved reliability, and reduced operational burden. Start with containerization and basic Kubernetes deployments, then progressively adopt operators, service meshes, and advanced patterns as expertise grows. The journey to Kubernetes-native development is transformational, and the operational benefits justify the investment.